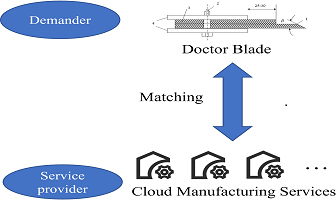

This paper proposes a service matching method, based on improved K-means clustering, for the cloud manufacturing of doctor blades for paper gravure printing machines. The method targets the poor accuracy of both service clustering and supply-demand matching in this setting. First, the improved K-means clustering algorithm groups doctor blade cloud manufacturing services into sets with high similarity within groups and low similarity between groups. Second, extension theory is used to establish a correlation function, and the service set with the highest correlation degree with the processing demand is selected as the candidate service set. Finally, the analytic hierarchy process and grey relational analysis are used to select the best cloud manufacturing service from the candidates according to the user's subjective demand preferences. The experimental results demonstrate that the accuracy of this method in solving the manufacturing service problem of gravure printing machine doctor blades can exceed 90% in approximately 30 min.
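The final selection step can be illustrated with a minimal sketch of grey relational analysis. It is not the authors' implementation: the function name, the normalized attribute values, the weights (standing in for weights that the analytic hierarchy process would supply), and the distinguishing coefficient `rho = 0.5` are all assumptions chosen for illustration.

```python
def grey_relational_grades(reference, candidates, weights, rho=0.5):
    """Weighted grey relational grade of each candidate against a reference.

    reference  : ideal attribute values, already normalized (assumed)
    candidates : list of attribute vectors, normalized the same way
    weights    : attribute weights summing to 1 (e.g. obtained from AHP)
    rho        : distinguishing coefficient, conventionally 0.5
    """
    # Absolute deviation of every candidate attribute from the reference.
    deltas = [[abs(r - c) for r, c in zip(reference, cand)] for cand in candidates]
    d_min = min(min(row) for row in deltas)
    d_max = max(max(row) for row in deltas)
    if d_max == 0:
        # Every candidate coincides with the reference.
        return [1.0] * len(candidates)
    grades = []
    for row in deltas:
        # Grey relational coefficient per attribute, then weighted sum.
        coeffs = [(d_min + rho * d_max) / (d + rho * d_max) for d in row]
        grades.append(sum(w * c for w, c in zip(weights, coeffs)))
    return grades


# Hypothetical candidate services described by three normalized attributes.
reference = [1.0, 1.0, 1.0]
candidates = [[0.9, 0.8, 1.0], [0.6, 1.0, 0.7], [1.0, 1.0, 1.0]]
weights = [0.5, 0.3, 0.2]
grades = grey_relational_grades(reference, candidates, weights)
best = max(range(len(grades)), key=grades.__getitem__)
```

The candidate with the highest grade is the one whose attribute profile lies closest to the reference under the given preference weights.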

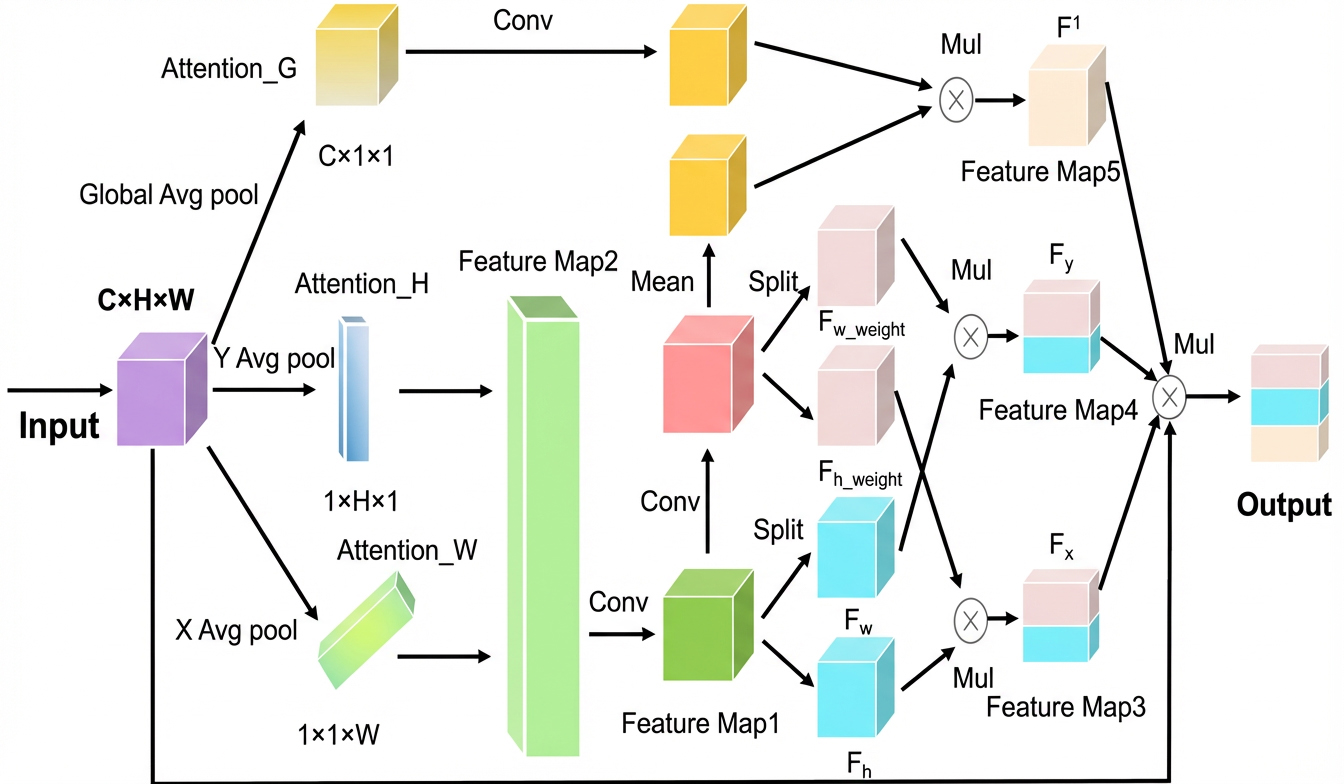

With the rapid development of logistics automation and the digital transformation of the home appliance industry, damage to heavy appliance packaging cartons during storage and transportation has become increasingly frequent, adversely affecting product image and delivery quality. Common surface defects such as scratches, holes, and wet stains can easily lead to disputes and economic losses. Therefore, a highly efficient, automated, and terminal-deployable intelligent detection algorithm is urgently required to achieve accurate identification and recording of packaging damage. To address the limitations of YOLOv8n in carton surface damage detection, specifically its constrained accuracy and frequent missed and false detections, the authors propose an improved object detection algorithm, YOLOv8-PD (Packaging Damage). The proposed model enhances detection performance while maintaining high efficiency through three key optimizations: introducing a large-kernel receptive field attention module (SPPF_LSKA) in the backbone to improve global context modeling; adopting the Wise-IoU loss function to refine bounding box regression accuracy; and incorporating a multi-path coordinate attention (MPCA) mechanism to strengthen key region perception. Experiments conducted on a self-constructed dataset containing three categories (scratches, holes, and wet stains) demonstrate that YOLOv8-PD achieves improvements of 1.4%, 0.9%, and 1.4% in mAP@0.5, Precision, and Recall, respectively, compared with the baseline YOLOv8n. These results validate the proposed method’s superior accuracy and real-time performance in industrial application scenarios.
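Both the Wise-IoU loss and the mAP@0.5 metric build on the intersection over union (IoU) of a predicted and a ground-truth box. The sketch below shows only that plain IoU and the basic `1 - IoU` loss. It does not reproduce the Wise-IoU weighting, and the `(x1, y1, x2, y2)` corner format and function names are assumptions.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle.
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    # Clamp at zero so disjoint boxes give an empty intersection.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_loss(pred, target):
    """Plain IoU loss; Wise-IoU adds a weighting on top that is not shown here."""
    return 1.0 - box_iou(pred, target)


# Hypothetical prediction and label: counted as a match at the 0.5 threshold
# used by mAP@0.5 only if their IoU is at least 0.5.
is_match = box_iou((0, 0, 2, 2), (0, 0, 2, 3)) >= 0.5
```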

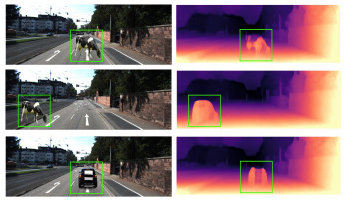

Monocular depth estimation (MDE) is a widely used technique in autonomous driving and 3D reconstruction. However, inconsistent and fragmented depth outputs can significantly undermine the reliability of MDE applications in practice. To address this issue, the authors introduce MonoHybrid, a novel self-supervised hybrid network that effectively integrates Transformer and dilated convolutional architectures. This design enables the extraction of both global and local features, enhancing the receptive field and ensuring robust and continuous depth estimation. Additionally, the authors present a new Feature Fusion Module that fuses convolutional and Transformer features, resulting in improved depth estimation performance. Through comprehensive experiments, the proposed network demonstrates notable accuracy and generalization compared to other advanced methods in the field.

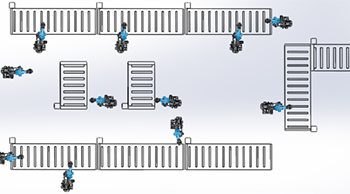

This paper focuses on the flexible packaging line for heavy cylindrical products with a large length-to-diameter ratio. It systematically analyzes the key bottlenecks and proposes an optimized production line solution based on modular design. The packaging process is decomposed into a loading module, an inner packing processing module, a packing module, a sealing module, and a stacking module. The functions of each module are clarified, and the connection sequence is optimized to achieve a compact process flow and efficient coordination of equipment. To meet the posture control, quality positioning, and safety protection requirements of single-root high-density materials during packaging, an “L-shaped + straight-line” layout scheme and a dynamic scheduling strategy are proposed. A discrete-event model is constructed in the FlexSim simulation software. After parameter calibration based on actual production data from 2022–2023, the simulation verifies the effectiveness of the optimization scheme in improving equipment utilization and reducing buffer waiting time, providing a technical reference for the design of intelligent packaging lines for similar high-value and fragile materials. The proposed method and simulation framework are applicable to the optimization of production lines for imaging equipment and related materials.

Unsupervised visible–infrared person re-identification (USVI-ReID) is an important and challenging task in machine vision. The key challenge of USVI-ReID is to effectively mine weak class-wise supervision and establish cross-modal correspondences without using any manual annotations. In this paper, the authors propose a soft prototype contrastive learning and instance discrimination method for USVI-ReID. Specifically, soft prototype contrastive learning selects the nearest neighbors with high similarity to the soft prototypes to mine accurate information and guide the model to learn more discriminative features. On this basis, a soft weighting strategy is used to quantitatively measure the relevance of the selected soft prototypes relative to the current centroid prototype, thus further eliminating the interference of incorrect prototypes in model training. To overcome the problems of image noise and complex backgrounds in visible and infrared images, instance discriminative learning is first integrated into USVI-ReID to explore the potential similarity relationship between instances from the bottom up and learn discriminative representations. Finally, the authors propose a progressive training strategy, which enables the model to learn the similarities between instances in the early stage of training and gradually shift its attention to more discriminative categories in the later stage. Extensive experiments are conducted on two public datasets, and the quantitative results demonstrate the effectiveness of the proposed method.

Traffic flow forecasting faces substantial challenges arising from intertwined heterogeneous temporal dynamics and complex spatial dependencies. A key difficulty lies in balancing long-term stable trends with short-term abrupt disturbances. To address these challenges, a framework named the Spatiotemporal Trend–Event Decoupling Mamba Graph Network (STEDMGN) is proposed. First, a temporal signal separation layer is constructed using a multi-scale decomposition and reconstruction mechanism to divide the raw sequence into trend and event components. This design enables dynamic pattern decoupling across multiple scales. Subsequently, a dual-frequency spatiotemporal encoder is introduced. The trend branch integrates multi-head attention with a Mamba-based state space layer to capture cross-period long-term dependencies, whereas the event branch employs causal convolution to model short-term abrupt disturbances. In the spatial dimension, trend-oriented and event-oriented graph convolutional networks are incorporated. These networks combine static priors, adaptive adjacency, and feature-driven dynamic graph structures to enhance the representation of both stable topologies and time-varying propagation. Finally, a fusion-gated decoder employs gated units and a query-driven fusion strategy to integrate the two feature types. A subsequent regression layer then generates multi-step forecasts. Extensive experiments on four public PeMS datasets demonstrate that the STEDMGN substantially outperforms state-of-the-art methods. The results provide an accurate and scalable solution for large-scale urban traffic flow forecasting.
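The idea of splitting a raw sequence into trend and event components can be sketched with centered moving averages at several window sizes. This is only an illustration of the concept: the abstract does not specify STEDMGN's multi-scale decomposition and reconstruction mechanism, so the window sizes, the averaging across scales, and the function names below are assumptions.

```python
def moving_average(series, window):
    """Centered moving average; windows are truncated at the sequence edges."""
    half = window // 2
    n = len(series)
    out = []
    for i in range(n):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        out.append(sum(series[lo:hi]) / (hi - lo))
    return out


def decompose(series, windows=(3, 5)):
    """Split a series into a smooth trend and a residual event component."""
    # Smooth at each scale, then average the scales to form the trend.
    smoothed = [moving_average(series, w) for w in windows]
    trend = [sum(vals) / len(vals) for vals in zip(*smoothed)]
    # Whatever the trend does not explain is treated as short-term disturbance.
    event = [x - t for x, t in zip(series, trend)]
    return trend, event


# Hypothetical flow readings with one abrupt spike at index 5.
flow = [10.0] * 5 + [40.0] + [10.0] * 5
trend, event = decompose(flow)
spike_index = max(range(len(event)), key=event.__getitem__)
```

By construction the two components sum back to the original series, so downstream branches can model each one separately without losing information.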

Color constancy algorithms play a crucial role in computer vision, and their performance needs to be accurately evaluated. However, recent years have seen scant systematic research on the correlation between human visual perception and objective distance measures for quantifying the performance of such algorithms. In this study, therefore, the authors systematically assessed the performance of 34 existing distance measures through psychophysical experiments. Six classical color constancy algorithms and two recent algorithms were adopted to process over 110 images within 4 categories (Indoor, Human, Street, and Nature), and the influence of color space on the performance of distance measures was explored. Visual assessments obtained from 48 subjects were used to analyze the consistency between predictions of distance measures and human visual responses. It was found that the two most commonly used distance measures, the recovery angle error and the reproduction angle error in normalized RGB color space, exhibited high correlation with visual judgments, producing correlation coefficients of approximately 0.86. Meanwhile, significant performance variations among distance measures across different color spaces were also observed. Distance measures in uniform color spaces exhibited excellent consistency with human perception, yielding correlation coefficients of approximately 0.88. In addition, it was found that specific scenes also influenced the accuracy of distance measures. This study highlights the importance of selecting appropriate color spaces for evaluating color constancy algorithms and offers more insights for the optimization of distance measures in the future.
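For readers unfamiliar with the two angle-based measures named above, the following sketch computes them in their commonly used form for RGB illuminant vectors. The function names are illustrative, and the sketch assumes that every component of the estimated illuminant is nonzero.

```python
import math


def recovery_angular_error(estimate, ground_truth):
    """Angle in degrees between estimated and ground-truth illuminant vectors."""
    dot = sum(e * g for e, g in zip(estimate, ground_truth))
    norm = math.sqrt(sum(e * e for e in estimate)) * math.sqrt(
        sum(g * g for g in ground_truth)
    )
    # Clamp against rounding so acos never receives a value outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def reproduction_angular_error(estimate, ground_truth):
    """Angle between the reproduced white surface and the achromatic axis.

    Dividing the true illuminant by the estimate gives the color a white
    surface would have after correction; a perfect estimate yields a
    multiple of (1, 1, 1).
    """
    reproduced_white = [g / e for e, g in zip(estimate, ground_truth)]
    return recovery_angular_error(reproduced_white, (1.0, 1.0, 1.0))


# Hypothetical illuminants: the estimate is slightly too red.
gt = (0.4, 0.35, 0.25)
est = (0.45, 0.33, 0.22)
rec = recovery_angular_error(est, gt)
rep = reproduction_angular_error(est, gt)
```

Both measures ignore the overall intensity of the illuminant, so scaling the estimate by a constant leaves the error unchanged.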

Owing to its ability to enable precise perception of dynamic and complex environments, point cloud semantic segmentation has become a critical task for autonomously driven vehicles in recent years. However, in complex, dynamic scenes, cumulative errors pose significant challenges for existing semantic segmentation methods, limiting their accuracy and efficiency, particularly in safety-critical applications. To address these issues, this paper introduces a novel framework that balances accuracy and computational efficiency by leveraging temporal alignment. The framework effectively captures inter-frame correlations, enhances local detail information, reduces error accumulation, and maintains detailed scene features. Furthermore, by integrating LiDAR and camera data through multi-modal fusion, the framework provides complementary perspectives, significantly improving segmentation performance and robustness in dynamic environments. This method achieves competitive performance on the benchmark SemanticKITTI and nuScenes datasets, demonstrating its capability to detect occluded objects and ensure reliable perception in safety-critical scenarios. The proposed framework offers a promising solution for enhancing the robustness and reliability of autonomous driving systems in complex environments.

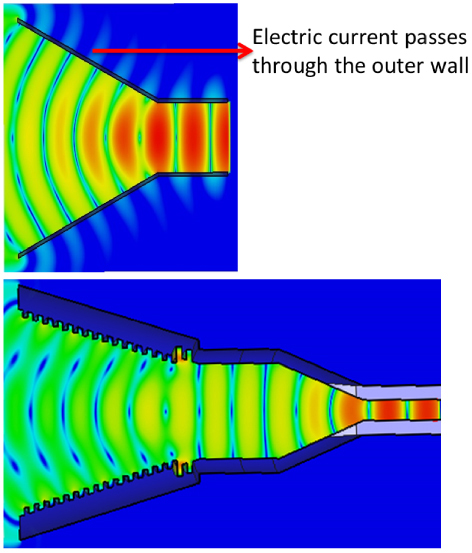

This research develops a novel manufacturing approach for millimeter-wave feedhorns, utilizing additive manufacturing combined with electroless metallization. A corrugated horn antenna operating across the K-band spectrum was engineered and produced using polymer-based 3D printing, followed by internal surface silver deposition. This methodology achieved complex internal geometry consolidation in a single process, yielding a structure with only 17% of the mass of comparable steel counterparts. Electrical characterization demonstrated reflection coefficients predominantly exceeding −20 dB magnitude across 18.0–27.0 GHz, together with gain values surpassing 14 dB within 18.0–24.0 GHz. The measured far-field radiation characteristics showed excellent correlation with computational electromagnetic models. The demonstrated technique presents transformative potential for the mass-efficient production of high-frequency components in next-generation small satellite constellations and compact radar platforms.
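As a reading aid for the decibel figures quoted above, the standard conversions to linear quantities are worked out below. This is ordinary decibel arithmetic applied to the two threshold values (−20 dB and 14 dB), not additional measured data, and the symbols are introduced here for illustration.

```latex
% Reflection coefficient magnitude at the -20 dB level,
% and the corresponding fraction of incident power reflected.
\[
|\Gamma| = 10^{-20/20} = 0.1, \qquad |\Gamma|^{2} = 0.01 .
\]
% Linear power gain corresponding to 14 dB.
\[
G_{\mathrm{lin}} = 10^{14/10} \approx 25.1 .
\]
```

At the −20 dB level, then, about 1% of the incident power is reflected at the feed port.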

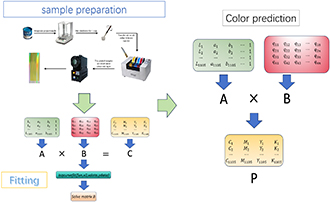

This research develops mathematical models relating C, M, Y, and K values to CIE L∗a∗b∗ chromaticity values in order to minimize the error between the predicted and actual ratios of the process colors (cyan, magenta, yellow, black) on holographic paper. The models are built by measuring and analyzing data obtained after mixing the basic inks in set proportions, and the least squares estimates are obtained by multiple nonlinear regression analysis in MATLAB. Moreover, by substituting CIE L∗a∗b∗ chromaticity values into the model, its regression significance is verified and the corresponding basic ink mass ratio is obtained. The RMSE, MAE, and R² values of the spectral prediction models established by multiple nonlinear regression analysis indicate small errors between the predicted and actual outcomes.
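The three goodness-of-fit figures mentioned above follow standard definitions, sketched here in Python rather than MATLAB for brevity. The function name and the sample ink ratios are hypothetical and are not taken from the study.

```python
import math


def regression_metrics(actual, predicted):
    """Return (RMSE, MAE, R^2) for paired actual and predicted values."""
    n = len(actual)
    residuals = [a - p for a, p in zip(actual, predicted)]
    ss_res = sum(r * r for r in residuals)
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(r) for r in residuals) / n
    mean_actual = sum(actual) / n
    # Total variation of the actual values around their mean.
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    r2 = 1.0 - ss_res / ss_tot  # assumes the actual values are not all equal
    return rmse, mae, r2


# Hypothetical actual vs. predicted ink mass ratios (percent).
actual_ratio = [10.0, 20.0, 30.0, 40.0]
predicted_ratio = [11.0, 19.0, 31.0, 39.0]
rmse, mae, r2 = regression_metrics(actual_ratio, predicted_ratio)
```

Low RMSE and MAE together with an R² close to 1 indicate that the fitted model reproduces the measured ratios closely.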