Margin-based Face Recognition (FR) has achieved remarkable performance by learning discriminative feature representations that ensure high intra-class compactness and inter-class separability. While most state-of-the-art methods focus on developing margin-based loss functions, improving model generalization is equally critical, especially under open-set conditions where test identities are absent from the training data. Recent work on learning algorithms has highlighted the sharpness of the loss surface as a key factor in reducing the generalization gap. Building on this, Sharpness-Aware Minimization (SAM) introduced a weight perturbation step to enhance generalization, and Adaptive Adversarial Cross-Entropy (AACE) further refined SAM by modifying the perturbation step. Inspired by this line of work, we propose FlatFace, a novel training framework for face recognition that incorporates weight perturbation into the training process. FlatFace consists of two key steps: the perturbation step, which perturbs the parameters of both the feature extractor and the class weights toward the worst case, and the weight updating step, which uses the loss gradient evaluated at the perturbed feature extractor and class weights to update the parameters. By guiding the model toward flatter minima, FlatFace improves generalization and accuracy, particularly for open-set face recognition tasks. Empirical experiments confirm its effectiveness, demonstrating reduced generalization gaps and enhanced overall performance.
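The two-step structure described above can be illustrated with a minimal sketch. It is not the FlatFace implementation: it assumes a plain SAM-style perturbation, an ordinary softmax cross-entropy in place of a margin-based loss, and a toy linear "feature extractor". The names `loss_and_grads`, `worst_case_perturbation`, `sam_step`, `rho`, and `lr` are hypothetical and chosen only for illustration.

```python
import numpy as np

def loss_and_grads(W_feat, W_cls, x, y):
    """Mean softmax cross-entropy and its gradients for a toy model:
    linear feature extractor W_feat followed by class weights W_cls."""
    feats = x @ W_feat                                   # (n, d)
    logits = feats @ W_cls                               # (n, c)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)
    n = x.shape[0]
    loss = -np.log(probs[np.arange(n), y]).mean()
    dlogits = probs.copy()
    dlogits[np.arange(n), y] -= 1.0
    dlogits /= n
    g_cls = feats.T @ dlogits                 # gradient w.r.t. class weights
    g_feat = x.T @ (dlogits @ W_cls.T)        # gradient w.r.t. feature extractor
    return loss, g_feat, g_cls

def worst_case_perturbation(g_feat, g_cls, rho):
    """SAM-style perturbation (an assumption here): move along the joint
    gradient direction, scaled so the joint L2 norm equals rho."""
    norm = np.sqrt((g_feat ** 2).sum() + (g_cls ** 2).sum()) + 1e-12
    return rho * g_feat / norm, rho * g_cls / norm

def sam_step(W_feat, W_cls, x, y, lr=0.1, rho=0.05):
    # Perturbation step: gradient at the current weights gives the direction.
    _, g_feat, g_cls = loss_and_grads(W_feat, W_cls, x, y)
    e_feat, e_cls = worst_case_perturbation(g_feat, g_cls, rho)
    # Weight updating step: gradient at the perturbed weights,
    # applied to the original (unperturbed) weights.
    _, g_feat_p, g_cls_p = loss_and_grads(W_feat + e_feat, W_cls + e_cls, x, y)
    return W_feat - lr * g_feat_p, W_cls - lr * g_cls_p
```

Both parameter groups share one perturbation budget `rho` in this sketch; whether the framework uses a joint or per-group budget is not stated in the abstract.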

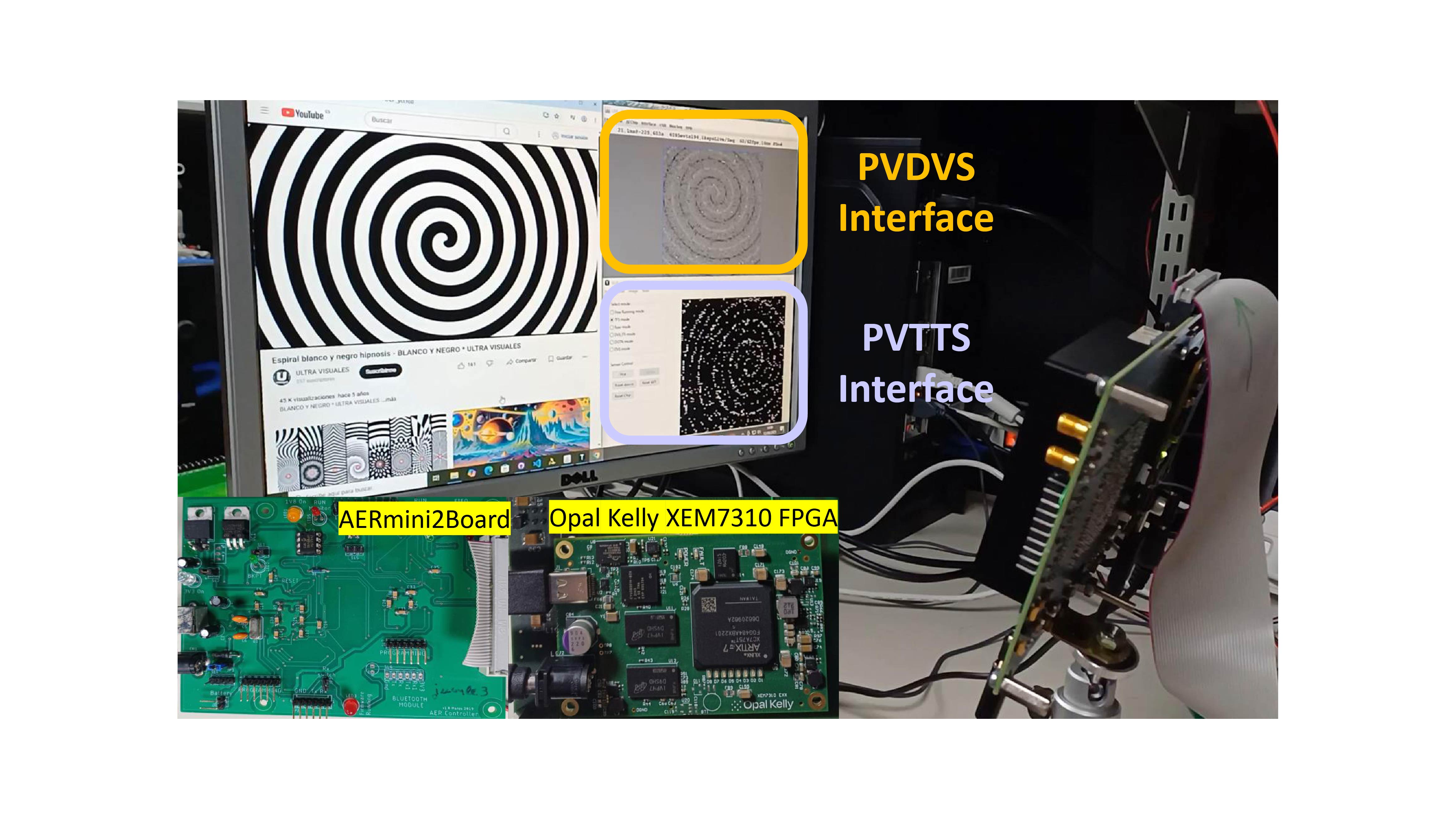

Asynchronous Time-Based Image Sensors (ATIS) jointly perform event-driven temporal contrast detection and local exposure measurement, reducing data throughput by reporting only relevant information with high temporal resolution. We introduce PVATIS, a new pixel front-end that replaces the conventional pair of reverse-biased photodiodes plus a logarithmic receptor with a single diode operated in photovoltaic mode. In open circuit, this diode simultaneously serves as the photodetector and provides logarithmic compression in a self-biased configuration. The approach directly tackles pixel-level constraints such as pixel pitch, noise, and energy, at the cost of reduced bandwidth due to the increased integrated capacitance. PVATIS is therefore a strong candidate for high-resolution, HDR, low-noise, and energy-efficient operation, and is particularly suitable for 3D-stacked implementations and moderate-speed imaging.
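The logarithmic compression of an open-circuit photodiode can be illustrated with the ideal (Shockley) diode model, in which the open-circuit voltage grows with the logarithm of the photocurrent. The sketch below is a textbook approximation, not a model of the PVATIS pixel: the saturation current `i_sat`, the ideality factor, and the temperature are assumed values, and the function name is hypothetical.

```python
import math

K_B = 1.380649e-23       # Boltzmann constant, J/K
Q_E = 1.602176634e-19    # elementary charge, C

def open_circuit_voltage(i_photo, i_sat=1e-15, temp_k=300.0, ideality=1.0):
    """Open-circuit voltage of an ideal diode under illumination:
    V_oc = n * (kT/q) * ln(1 + I_photo / I_sat).
    i_sat, temp_k and ideality are illustrative assumptions."""
    v_thermal = K_B * temp_k / Q_E
    return ideality * v_thermal * math.log1p(i_photo / i_sat)

# Sweep the photocurrent over several decades; each decade adds a roughly
# constant voltage step, which is the logarithmic compression.
for exponent in range(-13, -7):
    i_photo = 10.0 ** exponent
    print(f"I_photo = 1e{exponent} A -> V_oc = {open_circuit_voltage(i_photo):.4f} V")
```

Under these assumptions, a photocurrent range of many decades maps onto a voltage swing of a few hundred millivolts, which is why such a front-end suits HDR operation.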

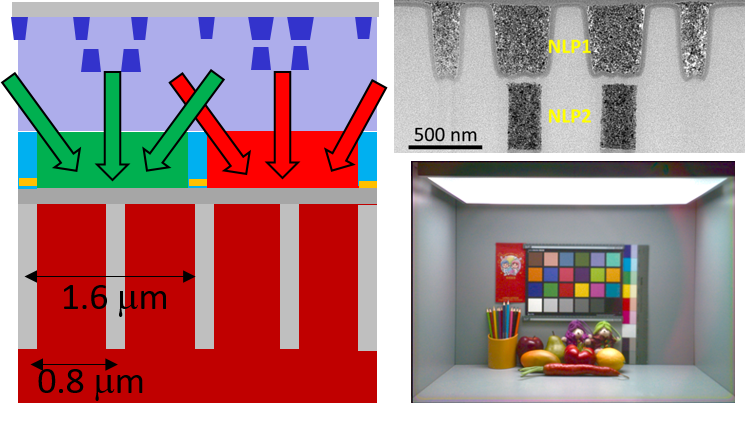

We demonstrated a die-level CMOS image sensor featuring 1.6 μm pixels and integrated nano-light pillars. This design achieved a 1.5 dB improvement in the signal-to-noise ratio (SNR). With an optimal pillar arrangement, the efficiency ratio between the die center and the die edge is comparable to that of conventional image sensors, while maintaining an acceptable Gr-Gb signal difference.