This manuscript serves as an introduction to the conference proceedings for the 34th annual Stereoscopic Displays and Applications conference and also provides an overview of the conference.

The Stereoscopic Displays and Applications Conference (SD&A) focuses on developments covering the entire stereoscopic 3D imaging pipeline from capture, processing, and display to perception. The conference brings together practitioners and researchers from industry and academia to facilitate an exchange of current information on stereoscopic imaging topics. The highly popular conference demonstration session provides authors with a perfect additional opportunity to showcase their work. The long-running SD&A 3D Theater Session provides conference attendees with a wonderful opportunity to see how 3D content is being created and exhibited around the world. Publishing your work at SD&A offers excellent exposure—across all publication outlets, SD&A has the highest proportion of papers in the top 100 cited papers in the stereoscopic imaging field (Google Scholar, May 2013).

Estimates of stereo-blindness, the inability to see in 3D using stereopsis, often sit in the 5-10% range. At the Curtin University HIVE visualization facility, we regularly show stereoscopic content. During those demonstrations we invariably show a test random dot stereogram, and it has been our casual observation that the incidence of stereo-blindness amongst visitors is much lower than the 5-10% figure, perhaps as low as 2%. Our thought was that perhaps eye care has improved since the original stereo-blindness studies were performed. A VR-based user study was recently run in the HIVE, as part of a PhD project, with the aim of studying distance perception in underwater virtual heritage experiences. Distance perception is facilitated by a range of visual cues, including stereoscopic vision, so we screened participants for stereo-blindness. Using a standardized stereo test, we found that approximately 5% of participants reported as stereo-blind. This presentation will provide some background on stereo-blindness, and discuss issues related to measuring stereo-blindness and its likely prevalence in the general population. It will also provide an early look at the VR-based user study investigating distance perception in underwater virtual heritage experiences.

Design education has special requirements for using virtual studio setups. Zoom fatigue during the worldwide COVID pandemic led our institution to test new tools that can help improve digital design education by supporting features like idea sharing, collaborative making, serendipitous discussion and group forming. For this purpose, we tested different tools during our Master's programme Innovation Design Engineering and collected student feedback. We found that immersion is a key factor impacting the effectiveness of group work in distance learning. This paper presents our applications and analysis of different platforms and contributes insights on how to build a virtual studio environment for an interdisciplinary Master's programme in design engineering. In this work, we focus on our studies of Gather.town, Meta Horizon Workrooms and Spatial.

This paper investigates how the angular resolution of a simulated light field display affects the viewing experience of a 3D rendered scene. The effect of three-dimensionality, helpfulness of motion parallax, and overall viewing experience are evaluated by means of a quantitative user study. A total of 32 results are recorded and evaluated. The quality metrics show a logarithmic trend, improving with an increasing number of views. A plateau at 0.25° per view can be found in two of the three evaluated questions. Moreover, the participants preferred the viewing experience of a conventional 2D display over the simulated light field displays with more than 0.5° per view (fewer than 360 views at 180° FOV). This shows that light field displays need a certain minimum angular resolution to create a pleasant viewing experience.
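The relation between angular resolution and view count quoted above (0.5° per view corresponding to 360 views at 180° FOV) can be checked with a minimal sketch. It assumes that views are spaced uniformly across the field of view; the function name `views_for_resolution` is hypothetical and is not taken from the paper.

```python
import math


def views_for_resolution(fov_deg: float, deg_per_view: float) -> int:
    """Number of views needed to cover fov_deg at a given angular spacing.

    Assumption (not stated in the abstract): views are distributed
    uniformly over the field of view.
    """
    if fov_deg <= 0 or deg_per_view <= 0:
        raise ValueError("fov_deg and deg_per_view must be positive")
    # Round before taking the ceiling to guard against floating-point noise.
    return math.ceil(round(fov_deg / deg_per_view, 9))


if __name__ == "__main__":
    # Thresholds mentioned in the abstract, at a 180 degree field of view.
    for spacing in (0.5, 0.25):
        print(spacing, "deg per view:", views_for_resolution(180.0, spacing), "views")
```

Under this assumption, the 0.25° plateau reported for two of the three questions would correspond to twice as many views as the 0.5° threshold at the same field of view.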

Since 2016, the RCA Grand Challenge has been an interdisciplinary event that runs each year across the entire School of Design at the Royal College of Art. Around 400 Master's and PhD students with a design background participate in this event, which focuses on tackling key global challenges through collaboration. In 2022, the Grand Challenge focused on topics in the context of ocean-driven activities. In this context, a VR workshop was conducted with around 40 students, most of whom had no background in using VR platforms. In the end, after a 4-week design sprint, 10 Unreal Engine-based projects were presented, of which we will discuss a selection here. One of the projects was among the overall winning teams of the Grand Challenge 2022.

Vergence-accommodation (VA) mismatch is a component of stereoscopic 3D remote vision system (RVS) design linked to depth misperception and visual discomfort. VA mismatch is caused by an unnatural conflict between the focal distance of the image (and thus accommodative demand) and the binocular vergence demand. A possible solution to mitigate VA mismatch is to change the accommodative demand with an optical correction, reducing the mismatch with the vergence demand. This experiment investigated the effect of low-add spectacle lenses (eyewear) on RVS performance and visual comfort. While previous research showed a positive effect of decreasing VA mismatch with the use of switchable lenses to adjust focal distance, the optical changes in this investigation were insufficient to make a difference. We conclude that the use of eyewear with a small dioptric add is not an effective solution to improve stereoscopic RVS performance or viewing comfort.
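The mismatch described above can be expressed in diopters as the difference between accommodative demand and vergence demand. The following is a minimal sketch under simplifying assumptions that are not from the paper: a thin add lens located at the eye, demands taken as the reciprocal of distance in meters, and no prismatic effect of the lens on vergence. The function name and the example distances are hypothetical.

```python
def va_mismatch_diopters(
    focal_distance_m: float,
    vergence_distance_m: float,
    add_power_d: float = 0.0,
) -> float:
    """Approximate vergence-accommodation mismatch in diopters.

    Assumptions (illustrative only): a thin plus lens of power add_power_d
    at the eye lowers the accommodative demand by add_power_d, and it
    leaves the vergence demand unchanged.
    """
    if focal_distance_m <= 0 or vergence_distance_m <= 0:
        raise ValueError("distances must be positive")
    accommodative_demand = 1.0 / focal_distance_m - add_power_d
    vergence_demand = 1.0 / vergence_distance_m
    return abs(accommodative_demand - vergence_demand)


if __name__ == "__main__":
    # Hypothetical geometry: image focused at 1 m, vergence demand at 2 m.
    print(va_mismatch_diopters(1.0, 2.0))        # no add lens
    print(va_mismatch_diopters(1.0, 2.0, 0.25))  # with a +0.25 D add
```

The sketch only illustrates the geometry of the proposed mitigation; the experiment reported above found that the low-add lenses tested did not measurably improve performance or comfort.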

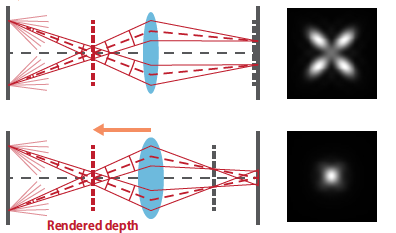

Light field (LF) displays are a promising 3D display technology to mitigate the vergence-accommodation conflict. Recently, we proposed a simulation framework to model an LF display system. It predicted that the in-focus optical resolution on the retina drops as the depth of a rendered image moves farther from the display-specific optical reference depth. In this study, we examine the empirical optical resolution of a near-eye LF display prototype by capturing rendered test images and compare it to simulation results based on the previously developed computational model. We use an LF display prototype that employs a time-multiplexing technique and achieves a high angular resolution of 6-by-6 viewpoints in the eyebox. The test image is rendered at various depths ranging from 0 to 3 diopters, and the optical resolution of the best-focus images is analyzed from images captured by a camera. Additionally, we compare the measurement results to the simulation results, discussing theoretical and practical limitations of LF displays.

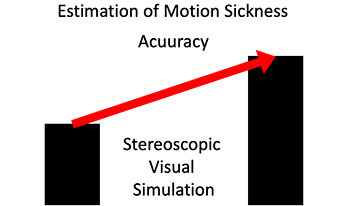

Automation of driving leads to a decrease in driver agency, and there are concerns about motion sickness in automated vehicles. Agency in automated driving is closely related to virtual reality technology, which has been confirmed in relation to simulator sickness. Such motion sickness has a mechanism similar to sensory conflict. In this study, we investigated the use of deep learning for predicting motion sickness. We conducted experiments using an actual vehicle and a stereoscopic image simulation. For each experiment, we predicted the occurrences of motion sickness by comparing the data from the stereoscopic simulation to the data from the experiment with an actual vehicle. Based on the results of the motion sickness prediction, we were able to use the stereoscopic simulation data to extend the dataset, improving the accuracy of predicting motion sickness in an actual vehicle. These results suggest that data from stereoscopic visual simulation can be utilized in deep learning.