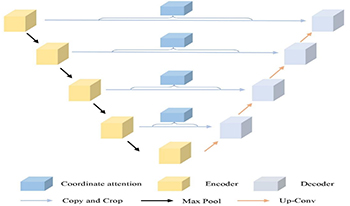

The maintenance of critical transportation infrastructure such as roads, tunnels, and bridges depends heavily on timely and accurate detection of structural damage. Among the various types of surface defects, concrete cracks are the most common and the most dangerous. However, traditional manual inspection is inefficient and labor-intensive, and it poses safety risks. In this paper, the authors propose UC-Net, a novel semantic segmentation network designed specifically for concrete crack detection. UC-Net builds on the U-Net architecture and introduces a Coordinate Attention mechanism into its skip connections. This enables the model to better capture long, narrow, and spatially scattered crack features while suppressing irrelevant background noise. The design addresses two key challenges: the small spatial proportion of cracks in high-resolution images, and interference from complex textures and lighting conditions. To validate the approach, the authors conducted extensive experiments on a publicly available crack dataset and on real-world images. Compared with existing CNN- and attention-based networks, UC-Net achieves superior performance, reaching an intersection over union (IoU) of up to 91.78% and an accuracy of 89.33%. The results confirm that UC-Net provides a lightweight yet accurate solution for fine-grained crack segmentation that can serve as a practical tool for real-world infrastructure monitoring.
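
To make the Coordinate Attention idea concrete, here is a minimal NumPy sketch of a coordinate-attention-style block that could sit on a U-Net skip connection. It is not UC-Net's implementation. The function name, weight shapes, and random weights are illustrative assumptions, batch normalization and biases are omitted, and ReLU stands in for the h-swish activation used in the original Coordinate Attention design. The key idea is to pool separately along height and width, so that each channel gets one attention weight per row and one per column.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def coordinate_attention(x, w_reduce, w_h, w_w):
    """Coordinate-attention-style reweighting of a (C, H, W) feature map.

    w_reduce: (C_mid, C) shared 1x1 conv applied to the pooled descriptors.
    w_h, w_w: (C, C_mid) 1x1 convs that produce height and width attention.
    Biases and batch normalization are omitted; ReLU stands in for h-swish.
    """
    _, h, _ = x.shape
    # Direction-aware pooling: average over width -> (C, H), over height -> (C, W)
    z_h = x.mean(axis=2)
    z_w = x.mean(axis=1)
    # Concatenate both descriptors and encode them with one shared 1x1 conv
    y = np.maximum(w_reduce @ np.concatenate([z_h, z_w], axis=1), 0.0)
    # Split back into the two directions and turn each into weights in (0, 1)
    a_h = sigmoid(w_h @ y[:, :h])  # (C, H): one weight per channel and row
    a_w = sigmoid(w_w @ y[:, h:])  # (C, W): one weight per channel and column
    # Each position is scaled by the product of its row weight and column weight
    return x * a_h[:, :, None] * a_w[:, None, :]


rng = np.random.default_rng(0)
C, H, W, C_MID = 16, 32, 48, 4
skip = rng.standard_normal((C, H, W))  # hypothetical encoder feature on a skip path
out = coordinate_attention(
    skip,
    0.1 * rng.standard_normal((C_MID, C)),
    0.1 * rng.standard_normal((C, C_MID)),
    0.1 * rng.standard_normal((C, C_MID)),
)
print(out.shape)  # (16, 32, 48)
```

Because every weight lies between 0 and 1, the block can only attenuate or keep features. In a trained model, rows and columns that contain crack pixels would keep high weights while background is suppressed.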

Purpose: Gliomas, a class of brain tumors, pose significant challenges due to their complex pathology and life-threatening potential. This study introduces LU-net, a novel semantic segmentation algorithm designed to support the diagnosis and treatment planning of gliomas. It seeks to address the limitations of traditional classification and detection methods by improving the accuracy and robustness of tumor boundary delineation in medical images. Methods: LU-net employs a multiscale image pyramid together with a Bayesian-inference-based multiscale probability search to capture complex tumor features. The algorithm is further strengthened by integrating a Conditional Random Field model, which enables more precise segmentation. LU-net is evaluated against existing segmentation algorithms using standard metrics: accuracy, Intersection over Union (IoU), and Dice score. Results: The experimental results demonstrate that LU-net outperforms current segmentation algorithms in both accuracy and robustness. Specifically, LU-net achieves an accuracy of 0.9953, an IoU of 0.667, and a Dice score of 0.566, effectively addressing the pathological heterogeneity and invasiveness of gliomas. These results highlight LU-net's superior ability to delineate tumor boundaries and improve diagnostic accuracy. Conclusion: LU-net sets a new benchmark in glioma lesion detection, offering a more effective approach to brain tumor segmentation. By improving the accuracy, reliability, and interpretability of tumor boundary delineation, LU-net enhances diagnostic and treatment strategies, benefiting patients, clinicians, and healthcare providers. Overall, this work is a significant contribution to medical imaging and glioma diagnosis.
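
The three reported metrics behave very differently when the lesion occupies a small fraction of the image. The following sketch uses the standard definitions on binary masks; it is not the study's evaluation code, and the toy masks are hypothetical. A 10x10 lesion predicted with a 5-pixel shift still scores 99% pixel accuracy because the background dominates, while IoU and Dice expose the error. For a single pair of masks, Dice and IoU are linked by Dice = 2·IoU / (1 + IoU). Values averaged over images or classes need not satisfy this relation.

```python
import numpy as np


def segmentation_metrics(pred, target):
    """Pixel accuracy, IoU, and Dice score for a pair of binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = np.sum(pred & target)    # tumor pixels predicted as tumor
    fp = np.sum(pred & ~target)   # background predicted as tumor
    fn = np.sum(~pred & target)   # tumor pixels that were missed
    accuracy = np.mean(pred == target)
    if tp + fp + fn == 0:         # both masks empty: treat as a perfect match
        return float(accuracy), 1.0, 1.0
    iou = tp / (tp + fp + fn)
    dice = 2 * tp / (2 * tp + fp + fn)
    return float(accuracy), float(iou), float(dice)


# A 10x10 "lesion" in a 100x100 slice, predicted with a 5-pixel horizontal shift
target = np.zeros((100, 100), dtype=bool)
target[0:10, 0:10] = True
pred = np.zeros((100, 100), dtype=bool)
pred[0:10, 5:15] = True
acc, iou, dice = segmentation_metrics(pred, target)
print(acc, iou, dice)  # 0.99 0.3333333333333333 0.5
```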

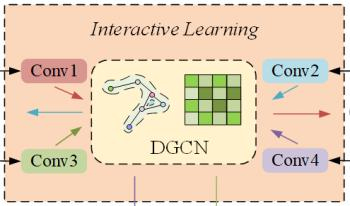

Accurate traffic flow forecasting plays a crucial role in alleviating road congestion and optimizing traffic management. Although many effective models have been proposed to predict future traffic flow, most have limitations in modeling spatiotemporal dependencies, especially multiscale spatiotemporal relationships. To address this, we propose Spatiotemporal Augmented Interactive Learning and Temporal Attention (STAIL-TA), a novel traffic flow prediction model designed for dynamic, interactive, and adaptive modeling of the spatiotemporal features in traffic flow data. Specifically, we first design a feature augmentation layer that enhances the interaction of time-based features. Next, we introduce an interactive dynamic graph convolutional network that uses an interactive learning strategy to capture the spatiotemporal characteristics of traffic data simultaneously. In addition, a new dynamic graph generation method is used to build a dynamic graph convolutional block that captures the spatial correlations that change dynamically within the traffic network. Finally, we construct a novel temporal attention mechanism that effectively leverages local contextual information and is specifically designed for transforming numerical sequence representations. This enables the prediction model to better capture the dynamic temporal dependencies of traffic flow, facilitating long-term forecasting. Experimental results on the PEMS-BAY dataset show that, compared with the best existing baseline, MRA-BGCN, STAIL-TA improves the mean absolute error and root mean squared error by 7.75% and 3.68%, respectively, for 15-minute predictions, and by 5.59% and 2.72%, respectively, for 30-minute predictions.
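
A dynamic graph convolution recomputes the adjacency matrix at every time step instead of fixing it from road distances. The sketch below is one hypothetical way to do this, in the spirit of the adaptive-adjacency layers used in earlier traffic models. It is not STAIL-TA's graph generation method. Learnable node embeddings are mixed with the current traffic state, turned into nonnegative affinities, and row-normalized with softmax before one graph convolution. All names and sizes are illustrative, apart from the 325 sensors in PEMS-BAY.

```python
import numpy as np


def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)


def dynamic_adjacency(x_t, node_emb, w_emb):
    """Build a time-step-specific adjacency matrix (hypothetical construction).

    x_t: (N, F) traffic features at time t; node_emb: (N, d) learnable node
    embeddings; w_emb: (F, d) projection of the current traffic state.
    """
    # Mix static node identity with the current traffic state so the graph changes over time
    emb = node_emb + np.tanh(x_t @ w_emb)
    # Nonnegative pairwise affinities, normalized so that each row sums to 1
    return softmax(np.maximum(emb @ emb.T, 0.0), axis=1)


def graph_conv(x_t, adj, weight):
    """One graph convolution: aggregate neighbor features, project, apply ReLU."""
    return np.maximum(adj @ x_t @ weight, 0.0)


rng = np.random.default_rng(0)
N, F, D, F_OUT = 325, 2, 10, 16  # PEMS-BAY has 325 sensors; other sizes are arbitrary
x_t = rng.standard_normal((N, F))
adj = dynamic_adjacency(x_t, rng.standard_normal((N, D)), rng.standard_normal((F, D)))
h_t = graph_conv(x_t, adj, rng.standard_normal((F, F_OUT)))
print(adj.shape, h_t.shape)  # (325, 325) (325, 16)
```

Because `adj` depends on `x_t`, the same sensors can be strongly connected during congestion and weakly connected in free flow. A fixed distance-based graph cannot express this.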

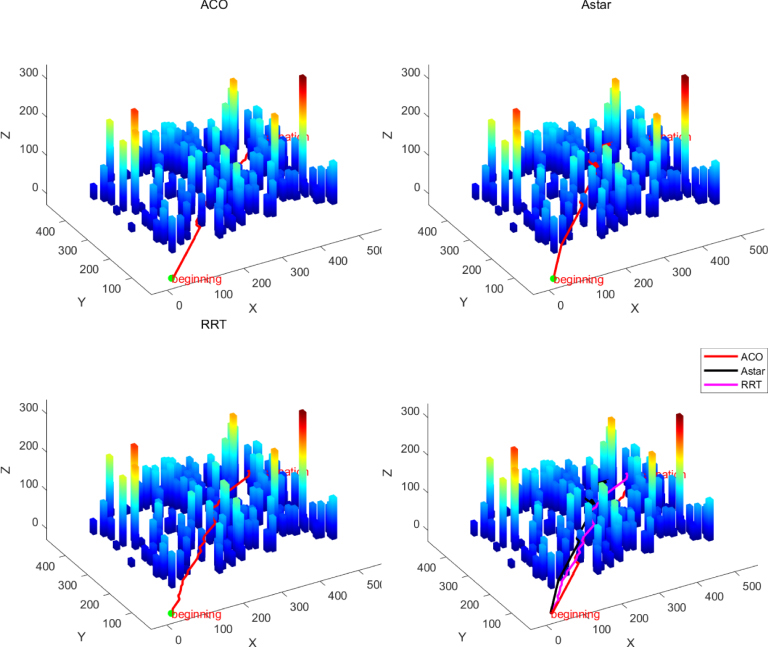

To address the low accuracy of 3D models built from images captured by unmanned aerial vehicles (UAVs), the authors propose an enhanced 3D reconstruction model of a mountain in Yuanmou that employs an improved Structure from Motion–Multiview Stereo (SFM–MVS) algorithm. In converting 2D images into 3D data, the key challenges lie in feature point extraction and matching. The authors introduce an optimized Speeded Up Robust Features (SURF) algorithm that combines the SURF descriptor operator with a fast feature point algorithm. The Laplace operator is used to refine the extraction of weighted feature points, and these points are then integrated into the robust SURF descriptor. Together, these steps improve both matching speed and accuracy. This solution mitigates the problems of excessive image data and of low accuracy and efficiency in 3D reconstruction for UAV applications. Experimental results demonstrate that, compared with both the original SURF and the traditional Scale-Invariant Feature Transform (SIFT) algorithms, the proposed method extracts more feature points, matches them faster, and achieves significantly higher accuracy. Compared with the unoptimized SFM–MVS algorithm, the optimized SURF-based 3D reconstruction improves accuracy by approximately 44.68% and processing speed by 30%. Additionally, to evaluate UAV path planning performance on complex terrain, the authors first use the optimized SURF-based 3D reconstruction method to precisely reconstruct the terrain map of the target area. This method improves both the accuracy and efficiency of 3D terrain reconstruction, providing high-precision data for subsequent testing of path planning algorithms. Three classical path planning algorithms, Ant Colony Optimization, A*, and Rapidly-exploring Random Tree, are then compared in terms of their UAV path planning capabilities on complex terrain.
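
Of the three planners compared, A* is the simplest to sketch. The example below assumes, hypothetically, that the reconstructed terrain has been sampled into a height grid and that cells whose height reaches the flight altitude are treated as obstacles. The helper `occupancy_from_heights` and the toy heights are illustrative and not taken from the study. A* then searches the 4-connected grid with a Manhattan-distance heuristic, which never overestimates the true cost of unit-cost moves, so the returned path is a shortest one.

```python
import heapq


def occupancy_from_heights(heights, altitude):
    """Mark cells whose terrain height reaches the flight altitude as blocked (hypothetical)."""
    return [[1 if z >= altitude else 0 for z in row] for row in heights]


def a_star(grid, start, goal):
    """A* on a 4-connected occupancy grid (0 = free, 1 = blocked).

    Returns the list of cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:  # walk parent links back to the start
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry: a cheaper route to this cell was found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = ng
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (ng + h(nxt), ng, nxt))
    return None


heights = [[10, 12, 15, 11],
           [80, 95, 90, 20],   # a ridge the UAV cannot cross at 50 m
           [14, 13, 18, 16]]
grid = occupancy_from_heights(heights, altitude=50)
print(a_star(grid, (0, 0), (2, 0)))
```

The ridge forces the planner around its open end, so the route runs along the top row, down the right-hand column, and back along the bottom row.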

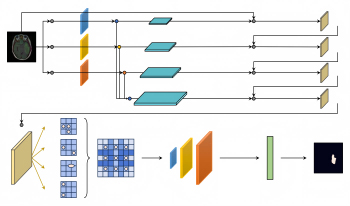

Accurate segmentation of brain tumors is essential in planning neurosurgical treatment, as it can significantly enhance treatment effectiveness. In this paper, the authors propose a modified Residual U-shaped network (ResUnet) based on multimodal fusion and a Generative Adversarial Network for multimodal brain tumor Magnetic Resonance Imaging segmentation. First, they propose a three-path structure for the encoding stage to address the inadequate utilization of multimodal features, which leads to suboptimal segmentation results. The structure comprises three components: the T1 path, the T1ce path, and a fusion path combining the FLAIR and T2 modalities. They then use average pooling to integrate global information from the T1 path into both the T1ce and fusion paths, enhancing feature fusion across modalities and strengthening the robustness of the network. Subsequently, the features from the T1ce path and the fusion path are passed to the decoding stage through skip connections to make better use of the model's features and improve segmentation accuracy. Finally, the authors leverage a Deep Convolutional Generative Adversarial Network (DCGAN) to further improve the accuracy of the network. They modify the DCGAN loss function by introducing an adaptive coefficient that reduces the loss value in the early stages of training and increases it in the later stages. Experimental results demonstrate that the proposed method improves segmentation accuracy compared with related methods.
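
The step that uses average pooling to pass global T1 information into the other two paths can be sketched as follows. This minimal NumPy illustration assumes additive fusion: global average pooling reduces the T1 features to one value per channel, and that value is broadcast over every location of the target path. The paper's exact integration operator is not specified here, and the function name and tensor sizes are hypothetical. The function works for 2D or 3D feature maps.

```python
import numpy as np


def inject_global_context(t1_feat, target_feat):
    """Add globally average-pooled T1 features to another path's features.

    Both inputs have shape (C, *spatial). Pooling over every spatial axis gives
    one value per channel, which is broadcast to every location of the target.
    Additive fusion is an assumption made for this sketch.
    """
    spatial_axes = tuple(range(1, t1_feat.ndim))
    gap = t1_feat.mean(axis=spatial_axes, keepdims=True)  # (C, 1, ..., 1)
    return target_feat + gap


rng = np.random.default_rng(0)
C, H, W = 8, 64, 64
t1 = rng.standard_normal((C, H, W))        # T1 path features
t1ce = rng.standard_normal((C, H, W))      # T1ce path features
flair_t2 = rng.standard_normal((C, H, W))  # fusion path (FLAIR + T2) features
t1ce_fused = inject_global_context(t1, t1ce)
fusion_fused = inject_global_context(t1, flair_t2)
print(t1ce_fused.shape, fusion_fused.shape)  # (8, 64, 64) (8, 64, 64)
```

Since only a per-channel summary crosses between paths, the T1 path influences the others cheaply without having to align feature maps voxel by voxel.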

Selecting lattice networks to achieve specific tailored material properties has traditionally been a daunting task. Unit cell selection is a “heuristic-based” methodology that is time-consuming and rarely leads to an optimal solution. This work presents a new metamaterial design methodology that encompasses quantitative unit cell selection and optimization based on a baseline geometry. To demonstrate this design roadmap, a real-world case study uses metamaterials to design an optical bench from Aluminum 6061 T6 equivalent (Al6061 RAM2). The design achieves 2 microns of diametrical surface-level deformation with a 10% mass penalty relative to Beryllium I-220H. The primary goal is to achieve beryllium-like mechanical properties without the added manufacturing challenges, lead time, and cost of Beryllium I-220H. In the quantitative lattice selection methodology, a lattice network is designed to reduce structural weight while maintaining overall resistance to deformation when a thermal load is applied to the optical bench. The result is a quantitative design process that can produce metamaterial geometry tailored to specific material properties in less than 100 days, including manufacturing.
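
A quantitative selection starts from simple budgets. The back-of-the-envelope sketch below does not reproduce the authors' methodology. It estimates how much solid material an aluminum lattice part may keep if it is allowed to weigh 10% more than a solid beryllium part of the same envelope. The densities are assumed nominal handbook values, and Al6061 RAM2 is assumed to match wrought 6061. It then compares two textbook lattice families using the Gibson–Ashby scaling law, a swapped-in rule of thumb with illustrative exponents. Thermal expansion, which drives the actual optical-bench requirement, is ignored.

```python
# Nominal handbook densities in g/cm^3 (assumed values, not taken from the study)
RHO_AL6061 = 2.70
RHO_BE = 1.85


def required_relative_density(mass_penalty, rho_lattice=RHO_AL6061, rho_ref=RHO_BE):
    """Solid fraction a lattice part needs to weigh (1 + mass_penalty) times a solid reference.

    Assumes both parts occupy the same envelope volume, so mass is
    proportional to solid fraction times material density.
    """
    return (1.0 + mass_penalty) * rho_ref / rho_lattice


def relative_stiffness(rel_density, exponent, coefficient=1.0):
    """Gibson-Ashby scaling law E*/E_s = C * (rho*/rho_s) ** n."""
    return coefficient * rel_density ** exponent


phi = required_relative_density(0.10)  # about 0.754 for a 10% mass penalty
# Stretch-dominated cells scale roughly with n = 1, bending-dominated cells with n = 2
for cell_type, n in (("stretch-dominated", 1.0), ("bending-dominated", 2.0)):
    print(cell_type, round(relative_stiffness(phi, n), 3))
```

At the same mass budget, a stretch-dominated cell retains noticeably more stiffness than a bending-dominated one. This is the kind of ranking a quantitative unit cell selection can make explicit before detailed finite-element optimization.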

The persistent drive toward miniaturization in electronics has led to increasingly complex printed circuit board (PCB) designs, posing significant challenges for object detection and inspection. This research introduces an innovative method that improves the detection of small objects on PCBs by integrating advanced multiscale layer fusion techniques with the YOLOv8 object detection framework. Building on YOLOv8, the proposed methodology addresses the limitations imposed by low-resolution imaging systems, enhancing the reliability and accuracy of small-object detection. Experimentation and validation show that the approach can detect small components and defects on PCBs. The results indicate superior performance compared with existing methods, with a mean Average Precision (mAP@0.5) of 99.30% and an inference speed of 161 frames per second (FPS). This combination of high throughput and high accuracy enables real-time processing, making the model suitable for deployment in time-sensitive industrial environments.
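
Multiscale layer fusion generally means bringing semantically strong but coarse feature maps up to the resolution of finer maps, which still preserve small objects. The NumPy sketch below shows one common top-down pattern: nearest-neighbor upsampling, channel concatenation, and a 1x1 projection. It is in the spirit of the FPN/PAN-style necks used by YOLO detectors, but it is not the paper's fusion design. The 1x1 projection stands in for heavier convolution blocks. The 80/40/20 grid sizes correspond to strides 8, 16, and 32 on a 640x640 input, and the channel counts and weights are hypothetical.

```python
import numpy as np


def upsample_nearest(x, factor=2):
    """Nearest-neighbor upsampling of a (C, H, W) feature map."""
    return x.repeat(factor, axis=1).repeat(factor, axis=2)


def fuse_top_down(fine, coarse, w_proj):
    """Fuse a coarse map into a finer map of twice its resolution.

    fine: (C_f, H, W); coarse: (C_c, H/2, W/2); w_proj: (C_out, C_f + C_c),
    a 1x1 convolution applied after channel concatenation.
    """
    up = upsample_nearest(coarse, 2)
    cat = np.concatenate([fine, up], axis=0)      # (C_f + C_c, H, W)
    return np.einsum("oc,chw->ohw", w_proj, cat)  # 1x1 conv = per-pixel matmul


rng = np.random.default_rng(0)
p3 = rng.standard_normal((64, 80, 80))    # stride 8: finest map, best for tiny parts
p4 = rng.standard_normal((128, 40, 40))   # stride 16
p5 = rng.standard_normal((256, 20, 20))   # stride 32: coarsest, most semantic
n4 = fuse_top_down(p4, p5, 0.05 * rng.standard_normal((128, 128 + 256)))
n3 = fuse_top_down(p3, n4, 0.05 * rng.standard_normal((64, 64 + 128)))
print(n4.shape, n3.shape)  # (128, 40, 40) (64, 80, 80)
```

The fused stride-8 map `n3` keeps the 80x80 resolution needed to localize tiny PCB components while carrying context propagated down from the coarser levels.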

In this paper, the authors propose a new method for denoising magnetic resonance (MR) images corrupted by noise whose level varies spatially. The dual-tree complex wavelet transform (DTCWT) is chosen instead of the scalar wavelet transform because it is approximately shift-invariant, a property that is very useful in image denoising. The local noise levels of an MR image are estimated with the DTCWT and stored as a 2D matrix computed from the finest high-frequency subband. k-means clustering then segments the image into regions with similar noise levels, and each region is denoised with block matching and 3D filtering (BM3D). Finally, the denoised regions are combined and their boundaries smoothed to obtain a better denoised image. Experiments demonstrate that the new method outperforms several existing image denoising methods, including the wiener2 filter, wavelet denoising, bivariate wavelet shrinkage, SURELET, non-local means, and BM3D, even when the noise levels vary spatially.
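
The first two stages of this pipeline are local noise estimation from the finest high-frequency subband, followed by k-means grouping of the noise map. The self-contained NumPy sketch below illustrates them under stated substitutions. An orthonormal Haar transform replaces the DTCWT to keep the example dependency-free, and noise is estimated per block with the robust median estimator, sigma = median(|d|) / 0.6745. The per-region BM3D denoising and the boundary smoothing are omitted, and the block size and synthetic image are illustrative.

```python
import numpy as np


def haar_hh(img):
    """Finest diagonal (HH) subband of a one-level orthonormal 2D Haar transform."""
    a = img[0::2, 0::2]
    b = img[0::2, 1::2]
    c = img[1::2, 0::2]
    d = img[1::2, 1::2]
    return (a - b - c + d) / 2.0


def local_noise_map(img, block=16):
    """Per-block robust noise estimate sigma = median(|HH|) / 0.6745."""
    hh = haar_hh(img)
    rows, cols = hh.shape[0] // block, hh.shape[1] // block
    sigma = np.empty((rows, cols))
    for i in range(rows):
        for j in range(cols):
            tile = hh[i * block:(i + 1) * block, j * block:(j + 1) * block]
            sigma[i, j] = np.median(np.abs(tile)) / 0.6745
    return sigma


def kmeans_1d(values, k=2, iters=100):
    """Plain k-means on scalar values; labels are ordered by increasing center."""
    v = values.ravel()
    centers = np.quantile(v, np.linspace(0.0, 1.0, k))  # spread the initial centers
    for _ in range(iters):
        labels = np.argmin(np.abs(v[:, None] - centers[None, :]), axis=1)
        new = np.array([v[labels == j].mean() if np.any(labels == j) else centers[j]
                        for j in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    labels = np.argmin(np.abs(v[:, None] - centers[None, :]), axis=1)
    order = np.argsort(centers)
    rank = np.empty(k, dtype=int)
    rank[order] = np.arange(k)  # map original cluster index -> rank by center value
    return rank[labels].reshape(values.shape), centers[order]


# Synthetic image: flat background, noise std 5 on the left half and 20 on the right
rng = np.random.default_rng(0)
noise_std = np.where(np.arange(256) < 128, 5.0, 20.0)[None, :]
noisy = 100.0 + rng.standard_normal((256, 256)) * noise_std
noise_map = local_noise_map(noisy)           # (8, 8) map of local noise levels
labels, centers = kmeans_1d(noise_map, k=2)  # region label per block
print(labels[0], np.round(centers, 1))
```

Each labeled region would then be denoised with a noise level matched to its cluster center, rather than one global estimate that is too strong for the quiet half and too weak for the noisy half.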

In this article, the authors introduce a versatile framework and rendering techniques for constructing cost-effective, easy-to-assemble multi-view 360° displays. The proposed methodology enables displays that show high-resolution, full-color images across a variable range of viewing angles. The framework extends existing technology that uses a parallax approach with rotating screens. Several display configurations achievable with the framework are explored, including a multi-display strategy to mitigate flicker and a flat design that delivers a see-through experience. The rendering workflow used to generate multi-view 360° content is also introduced. Finally, results are presented as photographs captured from varying angles, along with an analysis of the content displayed by two constructed prototypes: the first is a cylindrical design with dual displays, and the second is a see-through, flat-based design.
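
Multi-view 360° content is typically produced by rendering the scene from several virtual cameras arranged around it. The sketch below shows that bookkeeping under a deliberately simplified, hypothetical model that is not the authors' rendering workflow. `num_views` cameras sit evenly on a circle and face the center, and the rotating screen displays the pre-rendered view whose angular sector contains its current facing angle. The function names and the yaw convention (degrees measured from the +x axis) are assumptions.

```python
import math


def view_cameras(num_views, radius, height=0.0):
    """Place num_views virtual cameras evenly on a circle around the origin.

    Returns (x, y, z, yaw_deg) tuples; each camera's yaw points toward the origin.
    """
    cams = []
    for i in range(num_views):
        theta = 2.0 * math.pi * i / num_views
        x, y = radius * math.cos(theta), radius * math.sin(theta)
        yaw = (math.degrees(theta) + 180.0) % 360.0  # turn around to face the center
        cams.append((x, y, height, yaw))
    return cams


def view_index(screen_angle_deg, num_views):
    """Index of the pre-rendered view to show when the screen faces screen_angle_deg."""
    step = 360.0 / num_views
    return int((screen_angle_deg % 360.0) // step) % num_views


cams = view_cameras(8, radius=2.0)
print(cams[0])              # first camera on the +x axis, looking toward -x
print(view_index(10, 36))   # with 36 views, each view covers a 10-degree sector
```

Increasing `num_views` narrows each sector and smooths motion parallax for a walking observer, but it also multiplies the rendering cost and the refresh rate the rotating screen must sustain.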