A closed‑loop color feedback algorithm that leverages statistics collected after the image signal processor (ISP) to improve camera color quality is presented. Unlike traditional approaches, which evaluate white balance and color early in the pipeline and tune individual modules in isolation, the proposed method assesses color near the end of the ISP pipeline, compares it against target perceptual colors, and feeds the resulting deviations back to upstream processing blocks. This enables dynamic adjustment of auto white balance (AWB) and other color‑related parameters to achieve the desired perceptual color. The framework addresses three key limitations of conventional color processing: (1) AWB is evaluated in the raw domain, where perceived color cannot be reliably assessed; (2) fixed color‑tuning parameters cannot compensate for deviations introduced by other ISP blocks; and (3) color evaluation is not coordinated across modules. We further demonstrate an application of this framework to skin‑tone improvement. The system takes detected face regions, filters out non‑skin pixels, computes representative skin color statistics, compares them with target skin colors, and derives adjustment parameters that update the color tuning for the current or subsequent frame. This example illustrates the flexibility and effectiveness of the proposed closed‑loop approach for perceptually guided color enhancement or accurate color reproduction.
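The skin‑tone loop (filter skin pixels, take a representative color, compare with a target, update tuning) can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes a fixed Cb/Cr box as the skin filter (a common heuristic), uses the median as the representative statistic, and feeds the result back as damped per‑channel R/G/B gains. The names `skin_mask`, `skin_feedback_gains`, and `alpha` are hypothetical. For brevity, ratios are computed directly on the gamma‑encoded post‑ISP values.

```python
import numpy as np


def rgb_to_ycbcr(rgb):
    """Full-range BT.601 (JPEG-style) RGB -> YCbCr for 8-bit-scaled values."""
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return np.stack([y, cb, cr], axis=-1)


def skin_mask(face_rgb, cb_range=(77, 127), cr_range=(133, 173)):
    """Hypothetical skin filter: keep face pixels whose Cb/Cr lie in a fixed box."""
    ycc = rgb_to_ycbcr(face_rgb.astype(np.float64))
    cb, cr = ycc[..., 1], ycc[..., 2]
    return ((cb >= cb_range[0]) & (cb <= cb_range[1])
            & (cr >= cr_range[0]) & (cr <= cr_range[1]))


def skin_feedback_gains(face_rgb, target_rgb, current_gains, alpha=0.5):
    """One closed-loop step: nudge (R, G, B) gains so the median skin color approaches the target."""
    gains = np.asarray(current_gains, dtype=np.float64)
    mask = skin_mask(face_rgb)
    if not mask.any():
        return gains  # no skin pixels found: leave the tuning unchanged
    # Representative skin color of the face region (post-ISP values)
    measured = np.median(face_rgb[mask].astype(np.float64), axis=0)
    target = np.asarray(target_rgb, dtype=np.float64)
    # Compare R/G and B/G ratios so overall brightness does not drive the correction
    correction = (target / target[1]) / (measured / measured[1])
    # Damped update (alpha in (0, 1]) moves only part of the way per frame to avoid oscillation
    return gains * correction ** alpha
```

The damping factor reflects the closed‑loop setting. Because the corrected gains only affect the current or next frame, a partial step per iteration is a conservative choice.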

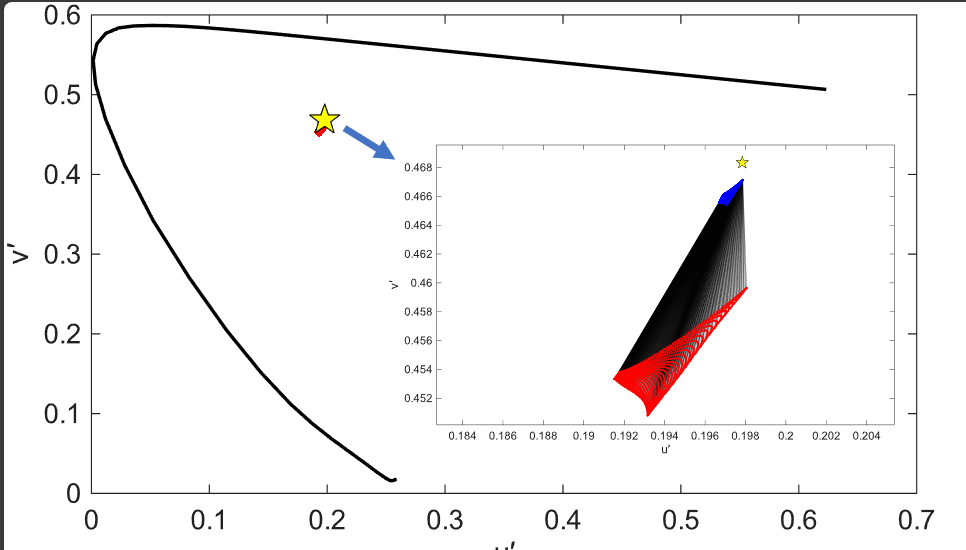

This study builds on our previous work, in which we analyzed the range of real-world colors and identified images containing colors that exceed the boundaries of legacy color gamuts such as sRGB and DCI-P3, making them difficult for traditional displays to render accurately. In the current study, we conducted a series of visual experiments to evaluate perceptual differences and viewer preferences when such images are shown on ultra-wide-color-gamut (ultra-WCG) displays compared with standard-gamut displays. Observers could consistently distinguish between images shown on an ultra-WCG display and the same images calibrated to sRGB. The perceptual difference between DCI-P3 and ultra-WCG was notably smaller, resulting in lower detection rates that were more content-dependent. Overall, observers showed a strong preference for the ultra-WCG display, regardless of viewing condition or image content.

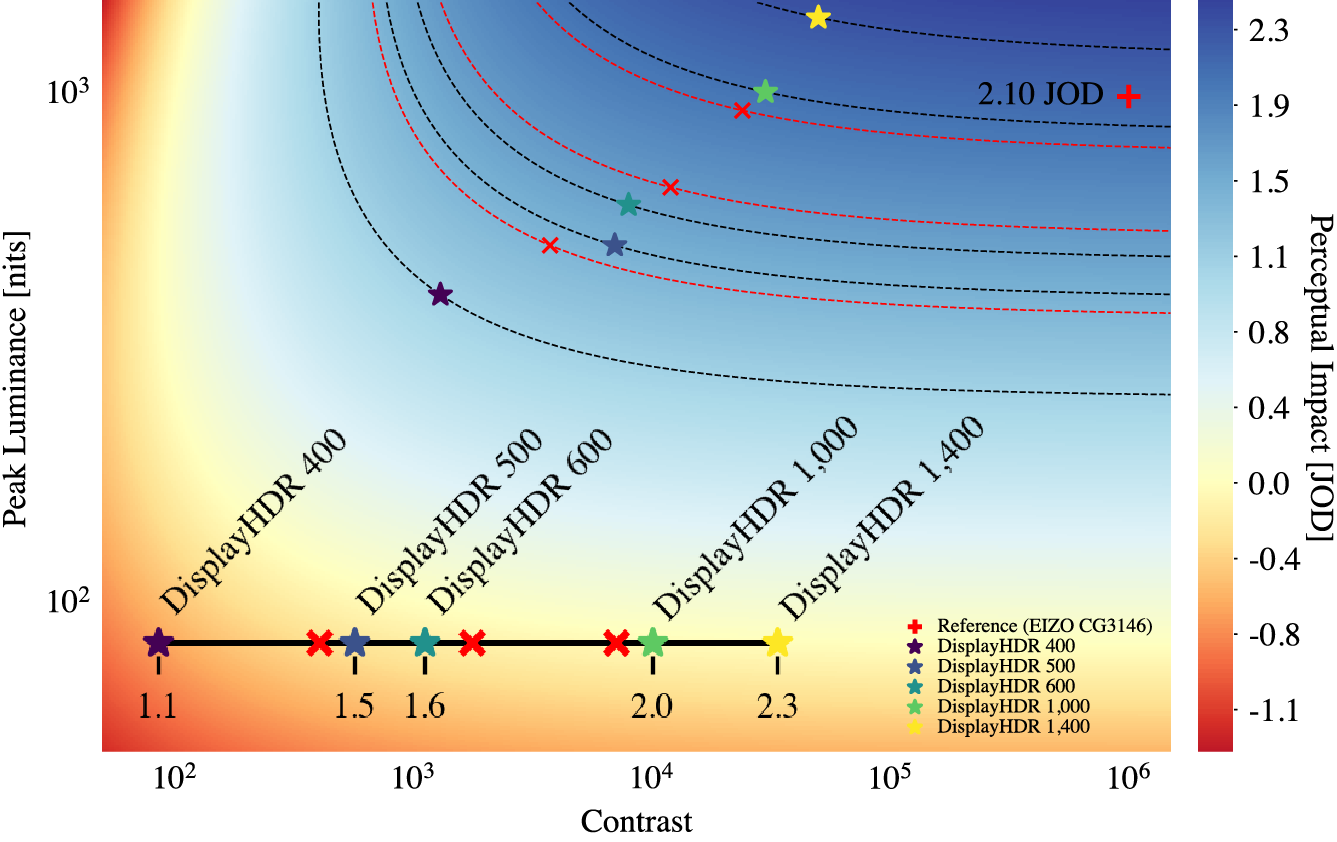

The performance of a high dynamic range (HDR) display can largely be characterized by its contrast and peak luminance. Prior work has studied how these parameters affect preference in virtual reality (VR) using a haploscopic HDR setup, but it is not obvious whether those results transfer to a more traditional viewing setting such as direct view. In this work, we conducted a study to measure user preference for different contrast and peak luminance parameters in this setting and developed a perceptual just-objectionable-difference (JOD) scale to quantify preference scores. To do so, we studied contrast and peak luminance conditions spanning several orders of magnitude, shown on a professional HDR display with a peak luminance of 1,000 nits and a contrast of 1,000,000:1. The data are used to develop a computational model that can drive display design and future standardization of HDR in terms of human preference.
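A JOD scale maps pairwise preference data onto a scale where 1 JOD corresponds to 75% of observers preferring one condition over the other. The sketch below uses Thurstone Case V with Mosteller's least-squares solution for a complete pairwise design. The study may have used a different estimator, such as maximum likelihood. `jod_scale`, the `wins` matrix layout, and the clipping constant `eps` are illustrative assumptions.

```python
import numpy as np
from statistics import NormalDist

_ND = NormalDist()
Z75 = _ND.inv_cdf(0.75)  # ~0.6745: a 75% preference rate defines 1 JOD


def jod_scale(wins, eps=0.01):
    """wins[i][j] = times condition i was preferred over j.

    Returns JOD scores relative to condition 0 (Thurstone Case V,
    Mosteller least squares; assumes every pair was compared).
    """
    wins = np.asarray(wins, dtype=np.float64)
    totals = wins + wins.T
    n = wins.shape[0]
    z = np.zeros((n, n))  # z[i, j]: scale difference i - j in standard-normal units
    for i in range(n):
        for j in range(n):
            if i != j and totals[i, j] > 0:
                # Clip to avoid infinite z for unanimous votes
                p = np.clip(wins[i, j] / totals[i, j], eps, 1.0 - eps)
                z[i, j] = _ND.inv_cdf(p)
    scores = z.mean(axis=1) / Z75  # row means give the scale; rescale to JOD units
    return scores - scores[0]
```

For example, if 75 of 100 votes favor condition 0 over condition 1, the sketch places condition 1 exactly 1 JOD below condition 0.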

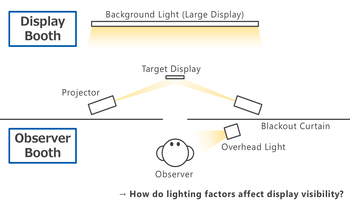

Mobile displays are used across a wide range of lighting conditions, and readability and visual comfort vary greatly with the surrounding light environment as well as with the luminance of the display. To evaluate the effects of the light environment, we developed a two-booth experimental system capable of independently manipulating three factors: the illuminance on the display surface, the luminance behind the display, and the ambient illuminance in the user's space. Participants viewed black text on a white smartphone screen under various light conditions and rated its readability, discomfort glare, and screen comfort across multiple luminance levels. The results demonstrated that all three factors affect visual perception. In particular, the illuminance on the screen had the strongest effect on readability, while the factors also interacted, offsetting one another's effects. In addition, we confirmed that these effects depend on the observer’s light-dark adaptation state. These findings indicate that the visual perception of mobile displays can depend not on individual factors alone but on their complex combination.

Existing tone mapping operators (TMOs) compress either the luminance or the RGB channels of a high dynamic range (HDR) image and assume uniform adaptation conditions, whereas human vision adapts colorfulness under varying adaptation luminance. One of the challenges in tone mapping is maintaining perceptual consistency of both lightness and colorfulness under varying adaptation luminance. Unlike traditional approaches, this work proposes a spatially adaptive tone mapping based on CIECAM16 lightness that adjusts colorfulness according to the local adaptation luminance. It also uses a spatially varying white point instead of a global one, in line with human perception. The paper further analyzes the performance of the proposed TMO across various spatial conditions, demonstrating that it preserves local contrast and maintains detail in both highlight and shadow regions while adaptively regulating colorfulness under various adaptation conditions. This adaptive approach to HDR-to-standard-dynamic-range (SDR) mapping therefore offers a perceptually faithful representation.
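In CIECAM16, the dependence of colorfulness on adaptation luminance enters through the luminance-level adaptation factor $F_L$. Colorfulness is $M = C \cdot F_L^{0.25}$. The sketch below makes $F_L$ spatially varying. It assumes that the local adaptation luminance is 20% of a box-filtered luminance image (a gray-world convention), that CAM16 chroma `C` has already been computed elsewhere, and that `box_blur`, `local_colorfulness`, `radius`, and `surround_fraction` are hypothetical names. It is not the paper's TMO.

```python
import numpy as np


def box_blur(img, radius):
    """Separable box filter with edge padding; output has the input's shape."""
    k = 2 * radius + 1
    kernel = np.ones(k) / k
    padded = np.pad(img, radius, mode="edge")
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)


def luminance_adaptation_factor(la):
    """CIECAM16 F_L from adaptation luminance L_A (cd/m^2)."""
    la = np.asarray(la, dtype=np.float64)
    k = 1.0 / (5.0 * la + 1.0)
    k4 = k ** 4
    return 0.2 * k4 * (5.0 * la) + 0.1 * (1.0 - k4) ** 2 * np.cbrt(5.0 * la)


def local_colorfulness(chroma, luminance, radius=8, surround_fraction=0.2):
    """Per-pixel colorfulness M = C * F_L^0.25 using a local L_A map (assumed model)."""
    la = surround_fraction * box_blur(luminance, radius)  # local adaptation luminance
    fl = luminance_adaptation_factor(la)
    return chroma * fl ** 0.25, la
```

With the same chroma, a pixel in a bright neighborhood receives a higher colorfulness than one in a dark neighborhood. A spatially adaptive TMO can exploit this instead of assuming a single global adaptation state.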

Image quality assessment has long been an area of research, with significant efforts dedicated to developing objective metrics that can reliably predict perceived image quality. Numerous image quality metrics have been proposed, ranging from traditional handcrafted approaches to modern machine learning-based models. Their evaluation typically relies on statistical comparisons with subjective human ratings, where correlation is the primary measure of performance. In this study, we explore an additional dimension of image quality evaluation: the impact of image semantic complexity on metric performance. Specifically, we hypothesize that the number of distinct semantic categories within an image influences the accuracy of image quality metrics. We evaluate eight state-of-the-art image quality metrics on two benchmark datasets, analyzing performance variations with respect to image semantic complexity (category count), with category counts derived from two vision-language models. Our findings reveal that the performance of some image quality metrics is affected by semantic complexity and by outliers. This suggests that content-aware evaluation could be crucial for future image quality research.
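The per-complexity analysis can be sketched by grouping images by category count and computing a rank correlation between metric scores and subjective ratings within each group. This sketch assumes Spearman's rank-order correlation (SROCC) as the correlation measure and assumes the category counts are supplied by the vision-language models. `srocc_by_complexity` and `min_size` are hypothetical names.

```python
import numpy as np


def average_ranks(x):
    """1-based ranks with ties assigned their average rank."""
    x = np.asarray(x, dtype=np.float64)
    order = np.argsort(x, kind="mergesort")
    sorted_x = x[order]
    ranks = np.empty(len(x))
    i = 0
    while i < len(x):
        j = i
        while j + 1 < len(x) and sorted_x[j + 1] == sorted_x[i]:
            j += 1  # extend the run of tied values
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def spearman(a, b):
    """Spearman correlation = Pearson correlation of (tie-averaged) ranks."""
    return float(np.corrcoef(average_ranks(a), average_ranks(b))[0, 1])


def srocc_by_complexity(metric, mos, category_counts, min_size=3):
    """Map each semantic category count to the metric's SROCC on that subset."""
    metric, mos, counts = (np.asarray(v) for v in (metric, mos, category_counts))
    result = {}
    for c in np.unique(counts):
        sel = counts == c
        if sel.sum() >= min_size:  # skip groups too small for a meaningful correlation
            result[int(c)] = spearman(metric[sel], mos[sel])
    return result
```

A metric whose SROCC drops as the category count grows would show the kind of complexity dependence the study examines. Inspecting small groups separately also helps expose the influence of outliers.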

In camera product development, where the goal is to achieve the best possible image quality and user experience, both objective and subjective test methods are necessary. Each has its own advantages and disadvantages. The goal of this study is to bring these methods closer together and to help users of objective tests understand what a test result means for the end user. Objective image quality measures are fast and efficient; they form the basis for, for example, everyday camera product comparisons. A comparison of two camera products is completed quickly, and the result is reliable and repeatable. Based on the results of such a measure, camera products can be ranked easily. However, when two products are compared with such an objective measure, how large a difference does a user actually notice? When is the difference significant? Does the user notice the measured difference at all? In this study, we sought answers to these questions for the acutance measure, which is used on a daily basis. We conducted numerous subjective tests in a controlled lab with carefully chosen stimuli. To include the effect of image content, we used two test samples: an image with a lot of detail and a test image with large flat areas and little detail. From these subjective results, we calculated the corresponding just noticeable difference (JND) values for our acutance measure. The results differed slightly between content with flat areas and content with a lot of detail. This study presents methods and results for finding JND values for an objective acutance measure; the results can be generalized more broadly to all objective acutance measures and, in terms of the method, to all objective measures.
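One way to turn subjective results into a JND value is to find the acutance difference at which the detection rate reaches a criterion. This sketch is not necessarily the authors' procedure. It assumes two-alternative forced-choice data, where each entry is the proportion of trials in which observers correctly picked the sharper image, and it uses the common 75% criterion. It interpolates linearly between tested levels. `jnd_threshold` and `criterion` are hypothetical names.

```python
import numpy as np


def jnd_threshold(delta_acutance, proportion_correct, criterion=0.75):
    """Acutance difference at which the proportion correct first reaches `criterion`."""
    d = np.asarray(delta_acutance, dtype=np.float64)
    p = np.asarray(proportion_correct, dtype=np.float64)
    order = np.argsort(d)
    d, p = d[order], p[order]
    # Enforce a non-decreasing psychometric curve (larger differences are never harder)
    p = np.maximum.accumulate(p)
    idx = int(np.argmax(p >= criterion))  # first tested level meeting the criterion
    if p[idx] < criterion:
        raise ValueError("criterion not reached within the tested differences")
    if idx == 0:
        return float(d[0])  # threshold lies at or below the smallest tested difference
    d0, d1, p0, p1 = d[idx - 1], d[idx], p[idx - 1], p[idx]
    # Linear interpolation between the bracketing levels (p0 < criterion <= p1)
    return float(d0 + (criterion - p0) * (d1 - d0) / (p1 - p0))
```

Running this separately on the detailed image and on the flat-area image gives one JND per content type. A measured acutance difference can then be expressed in JND units by dividing it by that value.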