Urban data is increasingly available to the public, but making it accessible and useful for non-expert users remains challenging. Interactive visualization offers a powerful means to explore such data, particularly for tasks like comparing districts within one city or between two cities, a common scenario for students relocating, tourists planning a trip, or citizens considering a move. Because individuals have varied interests and priorities, a universally applicable solution is difficult to develop. In this work, we propose an approach that enables visual, weighted comparison of city attributes. We first abstract the analysis tasks and derive corresponding design requirements, and then present a coordinated multiple views system that allows users to compare different weighted attributes across the districts of two cities. The system integrates linked views that support selection, weighting, ranking, and detailed exploration of urban data. We demonstrate the usefulness of our approach through a comparison of Vienna, Austria, and Berlin, Germany, and validate it with a pilot study in which participants gave very positive feedback. Our results indicate that the proposed approach helps casual users explore urban data effectively while allowing flexible, personalized comparisons.
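The weighted comparison and ranking described above can be illustrated with a small sketch. The abstract does not specify how scores are computed, so this assumes min-max normalization of each attribute across the districts of both cities and a weighted sum of the normalized values; the `District` type, the attribute names, and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class District:
    """Hypothetical record for one district; attribute names are illustrative."""
    city: str
    name: str
    attributes: dict[str, float]


def min_max_normalize(values: list[float]) -> list[float]:
    """Scale values to [0, 1]; a constant attribute maps every district to 0.5."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank_districts(
    districts: list[District],
    weights: dict[str, float],
    lower_is_better: frozenset[str] = frozenset(),
) -> list[tuple[float, District]]:
    """Score districts by a normalized weighted sum and sort them best first.

    Each attribute is normalized jointly over the districts of both cities,
    so scores are comparable across cities (an assumed design choice).
    """
    total = sum(weights.values())
    scores = [0.0] * len(districts)
    for attr, weight in weights.items():
        norm = min_max_normalize([d.attributes[attr] for d in districts])
        if attr in lower_is_better:  # e.g. rent: cheaper should score higher
            norm = [1.0 - x for x in norm]
        for i, x in enumerate(norm):
            scores[i] += (weight / total) * x
    return sorted(zip(scores, districts), key=lambda pair: pair[0], reverse=True)


# Made-up values for three hypothetical districts, for illustration only.
districts = [
    District("Vienna", "V1", {"green_space": 30.0, "rent": 12.0}),
    District("Berlin", "B1", {"green_space": 10.0, "rent": 10.0}),
    District("Berlin", "B2", {"green_space": 25.0, "rent": 14.0}),
]
ranked = rank_districts(districts, {"green_space": 2.0, "rent": 1.0}, frozenset({"rent"}))
for score, d in ranked:
    print(f"{d.city}/{d.name}: {score:.3f}")
```

Changing a weight only rescales one term of the sum, so the ranking can be recomputed cheaply every time a user adjusts a weighting control.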

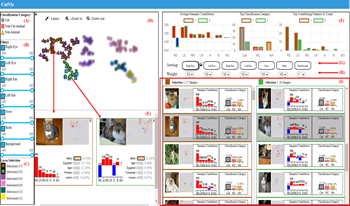

Given a picture classified as a Persian cat by an AI model, users may ask questions such as, “What are the contributions of the eyes and ears to the classification result?” or “Which features contribute the most?” While existing post-hoc XAI methods effectively explain model predictions at the pixel or patch level, they cannot directly quantify the contributions of human-interpretable semantic features. In this paper, we propose a visual analytics approach for feature-level interpretation of image classification results. Our contributions are twofold. First, we introduce a semantic contribution quantification method that builds upon existing pixel-level attribution techniques (e.g., Layer-wise Relevance Propagation, Grad-CAM). Specifically, we aggregate and normalize pixel-level relevance scores over predefined semantic regions (such as eyes, ears, and body) to compute comparable contribution scores for each semantic feature within an image. Second, we present an interactive visual interface that leverages these quantified semantic feature contributions to support exploration, comparison, and analysis of AI outputs across image collections. Through illustrative scenarios and expert feedback, we demonstrate that our approach provides an intuitive, scalable, and semantically meaningful means to interpret image classification explanations.
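The aggregation step can be sketched as follows. This is a minimal illustration, not the authors' method: it assumes the relevance map is already at image resolution, that semantic regions are given as boolean masks, that only positive relevance counts as evidence (unless absolute values are requested), and that normalization divides by the total over all features. The function name `semantic_contributions` and the toy data are hypothetical.

```python
import numpy as np


def semantic_contributions(
    relevance: np.ndarray,
    masks: dict[str, np.ndarray],
    use_absolute: bool = False,
) -> dict[str, float]:
    """Aggregate a pixel-level relevance map over semantic region masks.

    relevance: (H, W) attribution map at image resolution, e.g. from LRP or an
        upsampled Grad-CAM heatmap.
    masks: feature name (e.g. "eyes") -> (H, W) boolean mask of that region.
    Returns per-feature contributions normalized to sum to 1 (assumed scheme).
    """
    # Assumption: keep only positive evidence unless absolute values are requested.
    rel = np.abs(relevance) if use_absolute else np.clip(relevance, 0.0, None)
    raw = {name: float(rel[mask].sum()) for name, mask in masks.items()}
    total = sum(raw.values())
    if total == 0.0:
        return {name: 0.0 for name in raw}
    return {name: value / total for name, value in raw.items()}


# Toy 4x4 example with hypothetical regions and relevance values.
relevance = np.zeros((4, 4))
relevance[0, :2] = 1.0   # "eyes"
relevance[0, 2:] = 0.5   # "ears"
relevance[2:, :] = 0.25  # "body"
relevance[1, 0] = -1.0   # negative evidence outside all regions
masks = {name: np.zeros((4, 4), dtype=bool) for name in ("eyes", "ears", "body")}
masks["eyes"][0, :2] = True
masks["ears"][0, 2:] = True
masks["body"][2:, :] = True
contrib = semantic_contributions(relevance, masks)
print(contrib)
```

Summing relevance favors large regions such as the body; dividing each sum by `mask.sum()` (mean relevance per pixel) is one alternative. If masks overlap, shared pixels are counted once per region.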

3D Gaussian Splatting (3D-GS) has recently emerged as a powerful technique for real-time, photorealistic rendering by optimizing anisotropic Gaussian primitives from multi-view images. Unlike neural implicit methods such as NeRF, 3D-GS avoids the need for a neural network forward pass at inference, making it significantly faster while maintaining high visual fidelity. While 3D-GS has been extended to scientific visualization, prior work remains limited to single-GPU settings, which restricts scalability for large scientific datasets on high-performance computing (HPC) systems. In this study, we present a distributed 3D-GS pipeline tailored for scientific data on HPC. Our approach partitions data across nodes, trains Gaussian splats in parallel across multiple nodes and GPUs, and merges the splats for global rendering. To eliminate artifacts, we add ghost cells at partition boundaries and apply background masks to remove irrelevant pixels. Benchmarks on the Richtmyer–Meshkov datasets (about 106.7M Gaussians) show a speedup of up to 3× on 8 nodes of Polaris while preserving image quality. These results demonstrate that distributed 3D-GS enables scalable visualization of large-scale scientific data and provides a foundation for future in situ applications.
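The partition-and-merge structure described above can be sketched in a simplified 1D form. This assumes a slab decomposition along a single axis, ghost cells implemented as extra slices that each partition trains on but does not own, and a merge rule that keeps only Gaussians whose centers fall in the owning partition's range. The function names, the column layout of the Gaussian arrays, and the toy data are hypothetical, and the background masking step is not modeled.

```python
import numpy as np


def partition_with_ghosts(n_slices: int, n_parts: int, ghost: int):
    """Split slice indices [0, n_slices) along one axis into contiguous owned
    ranges, then widen each range by `ghost` slices on both sides (clamped to
    the domain). Returns (owned_lo, owned_hi, padded_lo, padded_hi) tuples,
    all half-open; a node would train on its padded range."""
    edges = np.linspace(0, n_slices, n_parts + 1).round().astype(int)
    parts = []
    for lo, hi in zip(edges[:-1].tolist(), edges[1:].tolist()):
        parts.append((lo, hi, max(0, lo - ghost), min(n_slices, hi + ghost)))
    return parts


def merge_splats(params_per_part: list[np.ndarray], parts) -> np.ndarray:
    """Merge per-partition Gaussians into one global set.

    Each array holds one Gaussian per row; column 0 is assumed to be its center
    coordinate along the partitioned axis (in slice units). Gaussians centered
    in a ghost region are dropped, since the neighboring partition owns that
    region and trained its own Gaussians there.
    """
    kept = []
    for params, (lo, hi, _, _) in zip(params_per_part, parts):
        z = params[:, 0]
        kept.append(params[(z >= lo) & (z < hi)])
    return np.concatenate(kept, axis=0)


parts = partition_with_ghosts(n_slices=10, n_parts=2, ghost=1)
# parts == [(0, 5, 0, 6), (5, 10, 4, 10)]

# Toy Gaussians: column 0 = center along the split axis, column 1 = an id.
part0 = np.array([[0.5, 1.0], [4.9, 2.0], [5.5, 3.0]])  # last one sits in a ghost slice
part1 = np.array([[4.5, 4.0], [7.0, 5.0]])              # first one sits in a ghost slice
merged = merge_splats([part0, part1], parts)
print(merged[:, 1])
```

Training on the padded range gives each partition context across its boundary, while the ownership filter prevents duplicate splats in the overlap. Gaussians whose centers drift outside the global domain would also be dropped by this rule, which a real pipeline might handle differently.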

Identifying key timesteps in spatio-temporal datasets is essential for shaping the story that a simulation tells. The selected timesteps act as anchors for visualization, guiding parameter choices for rendering, animation, and analysis. While many sophisticated selection methods have been proposed, we show that the field has often leaned toward unnecessary complexity. In this work, we provide a survey of existing timestep selection strategies, illustrating their limited ability to balance quality and efficiency. Building on these insights, we introduce a deliberately simple approach based on greedy local search. Starting from uniformly spaced candidates, we iteratively shift selections to minimize reconstruction error under interpolation. Despite its simplicity, this method consistently yields high-quality subsets, enabling effective parameter tuning and exploratory visualization while achieving significantly lower computational cost than more elaborate techniques. Through quantitative comparisons across datasets and error metrics, we demonstrate that this purposeful simplicity can provide a better trade-off between quality and runtime than existing, more complex alternatives.
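The greedy local search described above can be sketched as follows. The abstract specifies uniformly spaced initial candidates, shifting selections, and reconstruction error under interpolation; the remaining details here are assumptions: linear interpolation between selected timesteps, mean squared error, fixed first and last timesteps, moves of one timestep at a time, and acceptance of the first improving move. The function names and the toy series are hypothetical.

```python
import numpy as np


def reconstruct(data: np.ndarray, selected: list[int]) -> np.ndarray:
    """Linearly interpolate every timestep of data (T, N) from the selected
    timestep indices, which must be strictly increasing and include 0 and T-1."""
    recon = np.empty(data.shape, dtype=float)
    for a, b in zip(selected[:-1], selected[1:]):
        w = (np.arange(a, b + 1) - a) / (b - a)  # blend weights 0..1 across [a, b]
        recon[a:b + 1] = (1.0 - w)[:, None] * data[a] + w[:, None] * data[b]
    return recon


def reconstruction_error(data: np.ndarray, selected: list[int]) -> float:
    """Mean squared error between the data and its interpolated reconstruction."""
    return float(np.mean((data - reconstruct(data, selected)) ** 2))


def greedy_select(data: np.ndarray, k: int, max_iters: int = 100):
    """Greedy local search over k timesteps: start uniform, then repeatedly
    shift interior selections by one step whenever that lowers the error."""
    T = data.shape[0]
    selected = sorted(set(np.linspace(0, T - 1, k).round().astype(int).tolist()))
    best = reconstruction_error(data, selected)
    for _ in range(max_iters):
        improved = False
        for i in range(1, len(selected) - 1):  # endpoints stay fixed
            for delta in (-1, 1):
                cand = selected[i] + delta
                if not selected[i - 1] < cand < selected[i + 1]:
                    continue  # keep the selection strictly increasing
                trial = selected[:i] + [cand] + selected[i + 1:]
                err = reconstruction_error(data, trial)
                if err < best:  # accept the first improving move
                    selected, best, improved = trial, err, True
        if not improved:  # local optimum: no single shift helps
            break
    return selected, best


# Toy scalar series (T=11, N=1): flat until t=7, then a linear ramp.
data = np.array([0, 0, 0, 0, 0, 0, 0, 0, 1, 2, 3], dtype=float)[:, None]
selected, err = greedy_select(data, k=3)
print(selected, err)
```

Starting from the uniform choice `[0, 5, 10]`, the middle selection walks toward the kink at t=7, where linear interpolation reproduces the toy series. Each candidate move costs one reconstruction, which keeps the search cheap compared with global optimization over all subsets.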