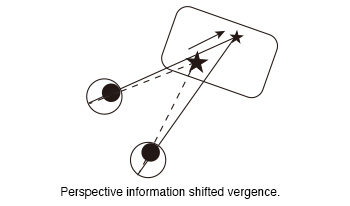

The vergence of subjects was measured while they observed 360-degree images in a virtual reality (VR) goggle. In our previous experiment, we observed a shift in vergence in response to the perspective information presented in 360-degree images when static targets were displayed within them. The aim of this study was to investigate whether a moving target that an observer was gazing at could also guide the observer’s vergence. We measured vergence while subjects viewed moving targets in 360-degree images. In the experiment, the subjects were instructed to gaze at a ball displayed in the 360-degree images while wearing the VR goggle. Two different paths were generated for the ball: one that approached the subjects from a distance (Near path) and one that remained at a distance from the subjects (Distant path). Two conditions were set for the moving distance (Short and Long). The moving distance of the left ball in the Long condition was twice that in the Short condition. These factors were combined to create four conditions (Near Short, Near Long, Distant Short, and Distant Long). In addition, two movement times (5 s and 10 s) were used in the Short conditions only; the movement time in the Long conditions was always 10 s. In total, six conditions were created. The results demonstrated that vergence was larger when the ball was close to the subjects than when it was at a distance. That is, the perspective information in the 360-degree images shifted the subjects’ vergence. This suggests that the perspective information in the images provided observers with high-quality depth information that guided their vergence toward the target position. Furthermore, this effect was observed not only for static targets but also for moving targets.
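As a rough illustration of why vergence is expected to be larger for a nearby target, the following minimal sketch computes the geometric vergence angle for symmetric fixation, 2·atan(IPD / 2d). The interpupillary distance (63 mm) and the two target distances are assumptions chosen for illustration; they are not values reported in the study.

```python
import math


def vergence_angle_deg(distance_m, ipd_m=0.063):
    """Geometric vergence angle (degrees) for symmetric fixation.

    distance_m: distance from the eyes to the fixated target, in metres.
    ipd_m: assumed interpupillary distance (63 mm), not taken from the study.
    """
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))


# Hypothetical target distances, used only to show the near/far contrast.
near_m, far_m = 0.5, 5.0
near_deg = vergence_angle_deg(near_m)
far_deg = vergence_angle_deg(far_m)

print(f"near target ({near_m} m): {near_deg:.2f} deg")
print(f"far target  ({far_m} m): {far_deg:.2f} deg")
```

Under these assumed values the near target demands a vergence angle roughly ten times larger than the far one, which is the direction of the effect described above.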

Color imaging has historically been treated as a phenomenon sufficiently described by three independent parameters. Recent advances in computational resources and in the understanding of the human aspects are leading to new approaches that extend the purely metrological view of color towards a perceptual approach describing the appearance of objects, documents and displays. Part of this perceptual view is the incorporation of spatial aspects, adaptive color processing based on image content, and the automation of color tasks, to name a few. This dynamic nature applies to all output modalities, including hardcopy devices, and to an even greater extent to soft-copy displays, which offer more options for dynamic processing. Spatially adaptive gamut and tone mapping, dynamic contrast, and color management continue to support the unprecedented development of display hardware, covering everything from mobile displays to standard monitors, and all the way to large screens and emerging technologies. The scope of inquiry is also broadened by the desire to match not only color but the complete appearance perceived by the user. This conference provides an opportunity to present, to interact, and to learn about the most recent developments in color imaging and material appearance research, technologies and applications. The focus of the conference is on basic color research and testing, color image input, dynamic color image output and rendering, and color image automation, with an emphasis on color in context and color in images, and on the reproduction of images across local and remote devices. The conference also covers software, media, and systems related to color and material appearance. Special attention is given to applications and requirements created by and for multidisciplinary fields involving color and/or vision.

The conference on Human Vision and Electronic Imaging explores the role of human perception and cognition in the design, analysis, and use of electronic media systems. Over the years, it has brought together researchers, technologists, and artists, from all over the world, for a rich and lively exchange of ideas. We believe that understanding the human observer is fundamental to the advancement of electronic media systems, and that advances in these systems and applications drive new research into the perception and cognition of the human observer. Every year, we introduce new topics through our Special Sessions, centered on areas driving innovation at the intersection of perception and emerging media technologies.

Research on human lightness perception has revealed important principles of how we perceive achromatic surface color, but has resulted in few image-computable models. Here we examine the performance of a recent artificial neural network architecture in a lightness matching task. We find similarities between the network’s behaviour and human perception. The network has human-like levels of partial lightness constancy, and its log reflectance matches are an approximately linear function of log illuminance, as is the case with human observers. We also find that previous computational models of lightness perception have much weaker lightness constancy than is typical of human observers. We briefly discuss some challenges and possible future directions for using artificial neural networks as a starting point for models of human lightness perception.
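To make the log-log relationship concrete, here is a minimal sketch that fits a straight line to log reflectance matches as a function of log illuminance using ordinary least squares. The data are synthetic and generated inside the example from a power law with a made-up exponent; they are not results from the paper. The interpretation of the slope is also an assumption: under one common convention, a slope of 0 corresponds to perfect constancy, a slope of 1 to pure luminance matching, and 1 minus the slope serves as a Thouless-type constancy index.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic illustration only: matches follow a power law with a
# hypothetical exponent of 0.15 (not a value from the paper).
illuminance = [10.0, 20.0, 40.0, 80.0, 160.0]
reflectance_match = [0.30 * (e / 10.0) ** 0.15 for e in illuminance]

log_e = [math.log10(e) for e in illuminance]
log_r = [math.log10(r) for r in reflectance_match]

slope, intercept = fit_line(log_e, log_r)
constancy_index = 1.0 - slope  # assumed Thouless-type convention

print(f"slope = {slope:.3f}, constancy index = {constancy_index:.3f}")
```

A slope strictly between 0 and 1 in such a fit would indicate partial constancy under the assumed convention.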

Color imaging has historically been treated as a phenomenon sufficiently described by three independent parameters. Recent advances in computational resources and in the understanding of the human aspects are leading to new approaches that extend the purely metrological view of color towards a perceptual approach in documents and displays. Part of this perceptual view is the incorporation of spatial aspects, adaptive color processing based on image content, and the automation of color tasks, to name a few. This dynamic nature applies to all output modalities, including hardcopy devices, and to an even greater extent to soft-copy displays, which offer more options for dynamic processing. Spatially adaptive gamut and tone mapping, dynamic contrast, and color management continue to support the unprecedented development of display hardware, covering everything from mobile displays to standard monitors, and all the way to large screens and emerging technologies. This conference provides an opportunity to present, to interact, and to learn about the most recent developments in color imaging research, technologies and applications. The focus of the conference is on basic color research and testing, color image input, dynamic color image output and rendering, and color image automation, with an emphasis on color in context and color in images, and on the reproduction of images across local and remote devices. The conference also covers software, media, and systems related to color. Special attention is given to applications and requirements created by and for multidisciplinary fields involving color and/or vision.

Vision is a component of a perceptual system whose function is to support purposeful behavior. In this project we studied the perceptual system that supports the visual perception of surface properties through manipulation. Observers were tasked with finding dents in simulated flat glossy surfaces. The surfaces were presented on a tangible display system implemented on an Apple iPad that rendered the surfaces in real time and allowed observers to interact with them directly by tilting and rotating the device. On each trial we recorded the angular deviations indicated by the device's accelerometer and the images seen by the observer. The data reveal purposeful patterns of manipulation that serve the task by producing images that highlight the dent features. These investigations suggest the presence of an active visuo-motor perceptual system involved in the perception of surface properties, and provide a novel method for its study using tangible display systems.

Currently, no low-cost commercial 3D active glasses with an embedded eye tracker are available, despite the importance of 3D and eye tracking for numerous applications. In this context, a simple low-cost eye tracker for 3D glasses with liquid crystal shutters is presented and tested for orthoptics applications. By using a beam splitter to better align the camera with the line of sight when the subject looks at a distant target straight ahead, the new design allows high-quality images to be recorded with limited pupil deformation compared with other commercial eye trackers, in which the cameras can be far from this axis (head mounted or fixed). Such a design could be useful for various applications, from orthoptics to virtual reality.

Human visual acuity strongly depends on environmental conditions. One of the most important physical parameters affecting its value is the pupil diameter, which adapts to changes in the surrounding illumination. Direct measurement of its influence on visual performance would require either medication or inconvenient apertures placed in front of the subjects' eyes to examine different pupil sizes, so it has not yet been studied in detail. In order to analyze this effect directly, without any external intervention, we performed simulations with our complex neuro-physiological vision model. It treats subjects as ideal observers limited by optical and neural filtering, as well as neural noise, and represents character recognition by template matching. Using the model, we reconstructed the monocular visual acuity of real subjects with optical filtering calculated from the measured wavefront aberration of their eyes. According to our simulations, a 1 mm change in pupil diameter causes a 0.05 logMAR change in visual acuity on average. Our result is in good agreement with former clinical experience derived indirectly from measurements that had independently analyzed the effect of background illumination on pupil size and on visual quality.
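To give a sense of scale for the 0.05 logMAR figure, the short sketch below converts a logMAR change into a ratio of minimum angles of resolution (MAR) and into an approximate number of chart letters. It relies only on the definition logMAR = log10(MAR) and on the usual ETDRS-style scoring of 0.02 logMAR per letter (0.1 per line); the function names are illustrative and the 2 mm example assumes, purely for illustration, that the average per-millimetre figure scales linearly.

```python
def mar_ratio(delta_logmar):
    """Ratio of minimum angles of resolution for a given logMAR change."""
    return 10.0 ** delta_logmar


def logmar_to_decimal(logmar):
    """Decimal visual acuity corresponding to a logMAR value."""
    return 10.0 ** (-logmar)


def letters_equivalent(delta_logmar, logmar_per_letter=0.02):
    """Approximate number of chart letters, assuming ETDRS-style scoring."""
    return delta_logmar / logmar_per_letter


per_mm = 0.05  # average logMAR change per 1 mm of pupil diameter (from the text)

ratio_1mm = mar_ratio(per_mm)
letters_1mm = letters_equivalent(per_mm)

# Hypothetical 2 mm pupil change, assuming the per-mm figure scales linearly.
delta_2mm = 2 * per_mm
ratio_2mm = mar_ratio(delta_2mm)

print(f"1 mm: MAR ratio {ratio_1mm:.3f}, about {letters_1mm:.1f} letters")
print(f"2 mm: MAR ratio {ratio_2mm:.3f}")
print(f"decimal acuity at 0.05 logMAR: {logmar_to_decimal(0.05):.3f}")
```

In these terms, 0.05 logMAR corresponds to roughly a 12% change in the minimum angle of resolution, or about half a line on a chart scored at 0.1 logMAR per line.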