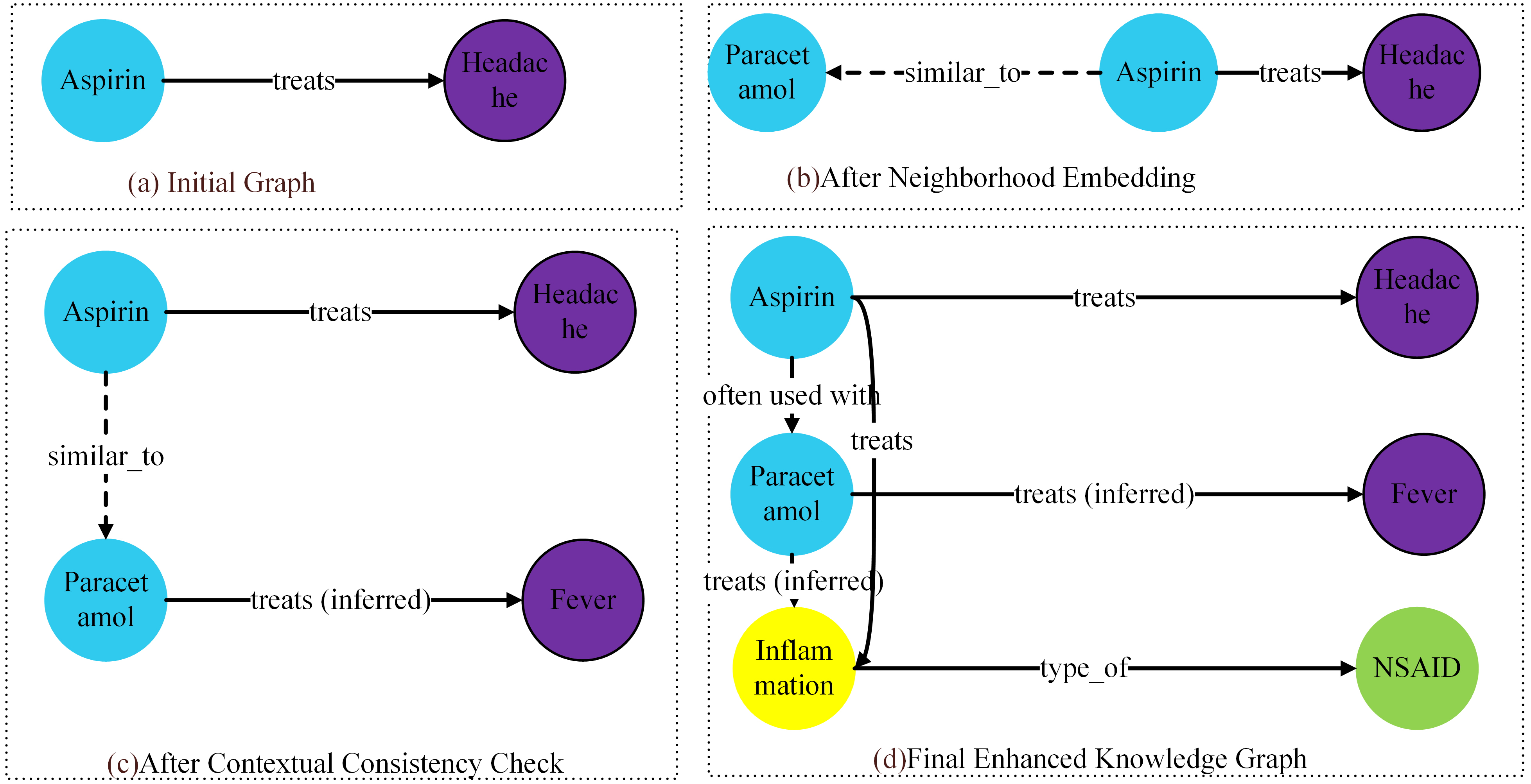

Knowledge graphs play a critical role in intelligent systems, but they face persistent challenges of incomplete data acquisition, noisy information, and inefficient inference under dynamic updates. To address these issues, the authors propose a graph-embedding-based framework that integrates three novel components: (1) a neighborhood-enhanced embedding module that captures richer structural semantics, (2) an inference optimization mechanism based on contextual consistency and confidence reweighting, and (3) a dynamic update strategy for efficient incremental learning. Extensive experiments on FB15k-237, WN18RR, and MedKG show clear improvements over state-of-the-art baselines. The proposed framework achieves Mean Reciprocal Rank gains of 8–15% and Hits@10 gains of 3–6%, demonstrating substantial accuracy improvements in link prediction. On dynamic update tasks, the proposed method maintains almost identical accuracy to full retraining (AUC difference < 0.2%) while achieving a 7.7-fold reduction in update time. These results verify that the proposed framework significantly enhances both the effectiveness and efficiency of knowledge graph reasoning.
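MRR and Hits@10 are computed from the rank of the correct entity among all candidate entities for each test query. The minimal Python sketch below assumes the ranks (1 = best) have already been produced by a scoring model, for example in the usual filtered setting. The function names are illustrative and do not come from the paper.

```python
from typing import Sequence


def mean_reciprocal_rank(ranks: Sequence[int]) -> float:
    """Mean of 1/rank over test queries; rank 1 means the true entity was scored highest."""
    if not ranks:
        raise ValueError("ranks must be non-empty")
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_k(ranks: Sequence[int], k: int = 10) -> float:
    """Fraction of test queries whose true entity appears among the top k candidates."""
    if not ranks:
        raise ValueError("ranks must be non-empty")
    return sum(1 for r in ranks if r <= k) / len(ranks)


if __name__ == "__main__":
    ranks = [1, 2, 4, 20]  # hypothetical filtered ranks for four test triples
    print(mean_reciprocal_rank(ranks))  # approximately 0.45 = (1 + 0.5 + 0.25 + 0.05) / 4
    print(hits_at_k(ranks, k=10))       # 0.75: three of the four ranks are <= 10
```

MRR rewards moving the correct entity toward rank 1. Hits@10 only checks whether it lands in the top ten. This explains why the two metrics can improve by different amounts.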

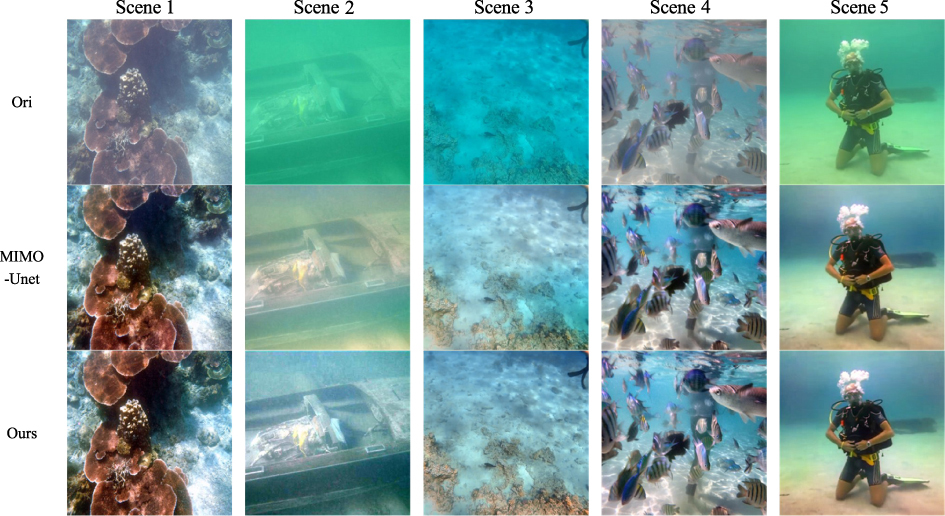

Underwater images suffer from motion blur, low illumination, poor contrast, and noise interference. These degradations reduce the accuracy of proximity detection by underwater robots and limit their use in marine development. This study proposes a solution based on the MIMO-UNet network. An Atrous Spatial Pyramid Pooling (ASPP) module is inserted between the encoder and the decoder to strengthen feature extraction and the capture of contextual information. In addition, a channel attention module in the decoder improves the extraction of fine detail features. A novel loss function combines multi-scale content loss, frequency loss, and mean squared error loss. This loss guides the network weight updates, helps recover lost high-frequency information, and ensures that the network converges. The method is evaluated on the UIEB dataset. Ablation experiments confirm the effectiveness and rationale of each module design, while performance comparisons demonstrate the algorithm's superiority over other underwater enhancement methods.
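The combined objective can be sketched as a weighted sum of three terms:

- a multi-scale content loss at each output resolution;
- a frequency loss on 2D FFT spectra;
- an MSE loss at full resolution.

The NumPy sketch below makes several illustrative assumptions that are not the paper's reported settings: L1 for the content term, 2× average pooling to build lower-resolution targets, and the weights `lambda_freq` and `lambda_mse`.

```python
from typing import List

import numpy as np


def downsample2x(img: np.ndarray) -> np.ndarray:
    """2x average pooling over the spatial axes of an (H, W, C) image; H and W must be even."""
    h, w, c = img.shape
    return img.reshape(h // 2, 2, w // 2, 2, c).mean(axis=(1, 3))


def content_loss(pred: np.ndarray, target: np.ndarray) -> float:
    """Pixel-wise L1 distance."""
    return float(np.mean(np.abs(pred - target)))


def frequency_loss(pred: np.ndarray, target: np.ndarray) -> float:
    """L1 distance between 2D FFT spectra, so errors in fine (high-frequency) detail are penalised."""
    fp = np.fft.fft2(pred, axes=(0, 1))
    ft = np.fft.fft2(target, axes=(0, 1))
    return float(np.mean(np.abs(fp - ft)))


def combined_loss(
    preds: List[np.ndarray],
    target: np.ndarray,
    lambda_freq: float = 0.1,  # assumed weight
    lambda_mse: float = 1.0,   # assumed weight
) -> float:
    """preds[0] is full resolution, preds[1] half resolution, preds[2] quarter, and so on."""
    total = 0.0
    gt = target
    for scale, pred in enumerate(preds):
        if scale > 0:
            gt = downsample2x(gt)  # ground truth at the matching scale
        total += content_loss(pred, gt) + lambda_freq * frequency_loss(pred, gt)
    total += lambda_mse * float(np.mean((preds[0] - target) ** 2))  # MSE at full resolution
    return total


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gt = rng.random((8, 8, 3))
    perfect = [gt, downsample2x(gt), downsample2x(downsample2x(gt))]
    print(combined_loss(perfect, gt))  # 0.0 for a perfect multi-scale prediction
```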

Sparse representation is a key component of shape registration, compression, and regeneration. Most existing models generate sparse representations by detecting salient points directly from input point clouds, which makes them susceptible to noise, deformations, and outliers. The authors propose a novel alternative that combines global distribution probabilities with local contextual features. This lets the network learn semantic structural consistency and adaptively generate sparse structural representations for arbitrary 3D point clouds. First, they construct a 3D variational auto-encoder network to learn an optimal latent space aligned with multiple anisotropic Gaussian mixture models (GMMs). They then combine the GMM parameters with contextual properties to build enhanced point features that resist noise and geometric deformations and better reveal the underlying semantic structural consistency. Second, they design a weight scoring unit that computes a matrix of each point's contribution to the semantic structure and adaptively generates sparse structural points. Finally, they enforce semantic correspondence and structural consistency so that the generated structural points are more discriminative in both the feature and distribution domains. Extensive experiments on shape benchmarks show that the proposed network outperforms state-of-the-art methods at lower cost, with better performance in shape segmentation and classification.
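One common way to turn a per-point contribution matrix into a small set of structural points works in two steps:

1. Normalise the scores over the input points.
2. Take convex combinations of the point coordinates.

The sketch below assumes the weight scoring unit outputs an (N, K) score matrix. The softmax normalisation and the name `structural_points` are assumptions for illustration, not the authors' exact unit.

```python
import numpy as np


def structural_points(points: np.ndarray, scores: np.ndarray) -> np.ndarray:
    """
    points: (N, 3) input point cloud.
    scores: (N, K) unnormalised contribution of each input point to each of K structural points.
    Returns (K, 3) structural points, each a convex combination of the input points.
    """
    # Softmax over the N input points (axis 0), shifted by the column max for numerical stability.
    s = scores - scores.max(axis=0, keepdims=True)
    w = np.exp(s)
    w /= w.sum(axis=0, keepdims=True)  # each column now sums to 1
    return w.T @ points                # (K, N) @ (N, 3) -> (K, 3)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pts = rng.normal(size=(1024, 3))
    scores = rng.normal(size=(1024, 16))  # stand-in for the learned scoring unit's output
    print(structural_points(pts, scores).shape)  # (16, 3)
```

Each structural point is a weighted average of many input points, so a single noisy point or outlier has limited influence. The operation is also differentiable, so the scores can be learned end to end.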

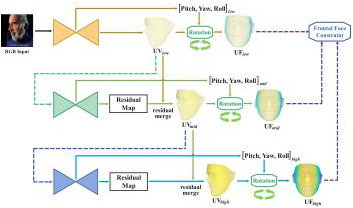

We propose an efficient multi-scale residual network that jointly performs 3D face alignment and head pose estimation from an RGB image. Existing methods excel at each task individually but often overlook the interdependence between them. Moreover, they lack a progressive refinement process for 3D face alignment, because such refinement would otherwise demand excessive computational resources and memory. To address these limitations, we introduce a hierarchical network that incorporates a frontal face constraint, significantly improving the accuracy of both tasks. We also implement a multi-scale residual merging process that enables multi-stage refinement without compromising model efficiency. Experimental results demonstrate the superiority of our method over state-of-the-art approaches.
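Head pose is commonly reported as pitch, yaw, and roll angles. If a model, or a fit to predicted 3D landmarks, yields a rotation matrix, the angles can be recovered as shown below. This sketch assumes the convention R = Rz(roll) · Ry(yaw) · Rx(pitch). Datasets differ in axis conventions, so this assignment is an assumption rather than the paper's definition.

```python
import math

import numpy as np


def rot_x(a: float) -> np.ndarray:
    c, s = math.cos(a), math.sin(a)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


def rot_y(b: float) -> np.ndarray:
    c, s = math.cos(b), math.sin(b)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])


def rot_z(g: float) -> np.ndarray:
    c, s = math.cos(g), math.sin(g)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def rotation_to_euler(r: np.ndarray) -> tuple:
    """(pitch, yaw, roll) in degrees for r = rot_z(roll) @ rot_y(yaw) @ rot_x(pitch).

    Assumes |yaw| < 90 degrees (no gimbal lock), which holds for typical head poses.
    """
    yaw = math.atan2(-r[2, 0], math.hypot(r[0, 0], r[1, 0]))  # r[2, 0] = -sin(yaw)
    pitch = math.atan2(r[2, 1], r[2, 2])                        # cos(yaw)*sin(pitch), cos(yaw)*cos(pitch)
    roll = math.atan2(r[1, 0], r[0, 0])                         # sin(roll)*cos(yaw), cos(roll)*cos(yaw)
    return tuple(math.degrees(v) for v in (pitch, yaw, roll))


if __name__ == "__main__":
    r = rot_z(math.radians(5)) @ rot_y(math.radians(30)) @ rot_x(math.radians(-10))
    print(rotation_to_euler(r))  # approximately (-10.0, 30.0, 5.0)
```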

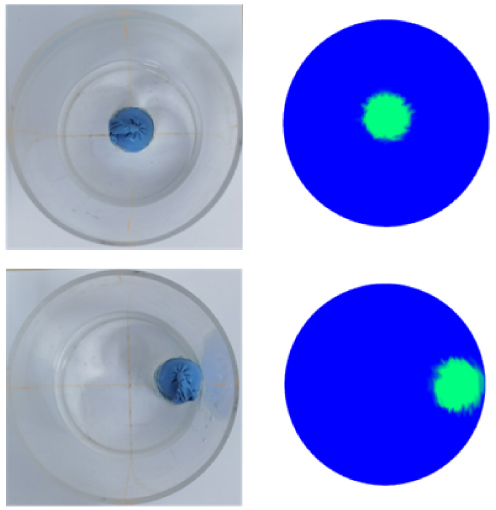

In recent years, deep learning has achieved excellent results in many applications across various fields. However, as deep learning models grow in scale, their training time increases dramatically. Furthermore, hyperparameters significantly influence training results, so selecting them efficiently is essential. In this study, an orthogonal array from the Taguchi method is used to find the best experimental combination of hyperparameters. The control factors of the Taguchi method are three hyperparameters of the You Only Look Once version 3 (YOLOv3) detector and five data augmentation hyperparameters. The results are analyzed with the traditional signal-to-noise ratio (S/N ratio) method using the larger-the-better (LB) characteristic.

Experimental results show that the mean average precision (mAP) on the Blood Cell Count and Detection (BCCD) dataset is 84.67%, which is better than the results available in the literature. The proposed method can provide a fast and effective search strategy for optimizing hyperparameters in deep learning.
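For the larger-the-better characteristic, the Taguchi S/N ratio of a run with repeated measurements y₁…yₙ is S/N = −10·log₁₀((1/n)·Σ 1/yᵢ²). The best level of each factor is the one with the highest mean S/N across the runs in which it appears.

The sketch below uses a small L4(2³) orthogonal array with three two-level factors and made-up mAP values purely for illustration. The study itself uses eight control factors, and their array and levels are not reproduced here.

```python
import math
from statistics import mean
from typing import List, Sequence, Tuple

# L4(2^3) orthogonal array: 4 runs, 3 two-level factors (levels coded 0 and 1).
L4: List[Tuple[int, ...]] = [
    (0, 0, 0),
    (0, 1, 1),
    (1, 0, 1),
    (1, 1, 0),
]

# Hypothetical mAP values from two repeated trainings per run (illustration only).
HYPOTHETICAL_MAP = [
    [0.69, 0.71],
    [0.74, 0.76],
    [0.79, 0.81],
    [0.94, 0.96],
]


def sn_larger_is_better(ys: Sequence[float]) -> float:
    """Taguchi larger-the-better S/N ratio in dB: -10 * log10(mean(1 / y^2))."""
    return -10.0 * math.log10(sum(1.0 / (y * y) for y in ys) / len(ys))


def best_levels(array, responses):
    """Main-effects analysis: for each factor, pick the level with the highest mean S/N."""
    sn = [sn_larger_is_better(ys) for ys in responses]
    best = []
    for f in range(len(array[0])):
        levels = sorted({row[f] for row in array})
        effect = {lv: mean(s for row, s in zip(array, sn) if row[f] == lv) for lv in levels}
        best.append(max(effect, key=effect.get))
    return best, sn


if __name__ == "__main__":
    best, sn = best_levels(L4, HYPOTHETICAL_MAP)
    print(best)  # [1, 1, 0]
```

Only four runs are needed to estimate the main effects of three factors, instead of the eight runs of a full factorial. This saving is what makes the approach attractive when every run is a full training job.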

Innovations in computer vision have steered research toward recognizing compound facial emotions, which are complex mixtures of basic emotions. Deep convolutional neural networks have significantly improved accuracy, but they have inherent limitations, such as vanishing/exploding gradients, lack of global contextual information, and overfitting. These limitations may degrade performance or cause misclassification when processing complex emotion features. This study proposes an ensemble method in which three pre-trained models, DenseNet-121, VGG-16, and ResNet-18, are concatenated rather than used individually. In this layer-sharing approach, each model's head is removed, and dropout layers, fully connected layers, activation functions, and pooling layers are added to each model before the models are concatenated. This gives each branch the opportunity to learn more before the individually learned features are combined. Early stopping is used to prevent overfitting and improve performance. The proposed ensemble method surpasses the state of the art (SOTA), achieving 74.4% and 71.8% accuracy on the RAF-DB and CFEE datasets, respectively. It offers a new benchmark for real-world compound emotion recognition research.
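Early stopping monitors a validation metric and halts training once it has stopped improving. A minimal, framework-independent sketch follows. The class name `EarlyStopping` and the default `patience` and `min_delta` values are illustrative assumptions, since the source does not state the settings used.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved by more than `min_delta`
    for `patience` consecutive epochs (hypothetical helper, not a library API)."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss: float, epoch: int) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.best_epoch = epoch  # in practice, checkpoint the weights here
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


if __name__ == "__main__":
    stopper = EarlyStopping(patience=2)
    for epoch, loss in enumerate([0.90, 0.70, 0.65, 0.66, 0.67, 0.60]):
        if stopper.step(loss, epoch):
            print(f"stop at epoch {epoch}, best epoch {stopper.best_epoch}")  # stop at epoch 4, best epoch 2
            break
```

Training typically resumes from the checkpoint saved at `best_epoch`, so the final model is the one with the lowest validation loss rather than the last one trained.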

Deep learning (DL) has advanced computer-aided diagnosis, yet the limited data available at local medical centers and privacy concerns associated with centralized AI approaches hinder collaboration. Federated learning (FL) offers a privacy-preserving solution by enabling distributed DL training across multiple medical centers without sharing raw data. This article reviews research conducted from 2016 to 2024 on the use of FL in cancer detection and diagnosis, aiming to provide an overview of the field’s development. Studies show that FL effectively addresses privacy concerns in DL training across centers. Future research should focus on tackling data heterogeneity and domain adaptation to enhance the robustness of FL in clinical settings. Improving the interpretability and privacy of FL is crucial for building trust. This review promotes FL adoption and continued research to advance cancer detection and diagnosis and improve patient outcomes.
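A widely used FL aggregation rule is Federated Averaging (FedAvg). Each center trains locally and shares only model parameters, and a server averages them weighted by each center's number of training samples.

The NumPy sketch below shows one aggregation round. FedAvg is used here as a representative example only; the reviewed studies use various FL algorithms. The parameter dictionaries and site sizes are hypothetical.

```python
from typing import Dict, List

import numpy as np

Params = Dict[str, np.ndarray]


def fedavg(client_params: List[Params], num_samples: List[int]) -> Params:
    """Sample-size-weighted average of client parameters; raw data never leaves the clients."""
    if not client_params or len(client_params) != len(num_samples):
        raise ValueError("need one sample count per client and at least one client")
    total = float(sum(num_samples))
    return {
        name: sum((n / total) * params[name] for params, n in zip(client_params, num_samples))
        for name in client_params[0]
    }


if __name__ == "__main__":
    site_a = {"w": np.array([1.0, 2.0])}  # hypothetical center with 100 images
    site_b = {"w": np.array([3.0, 4.0])}  # hypothetical center with 300 images
    print(fedavg([site_a, site_b], [100, 300])["w"])  # [2.5 3.5]
```

When centers hold very different patient populations, this simple weighted average can drift toward the largest sites. That is one reason the review highlights data heterogeneity and domain adaptation as open problems.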

Image compression is an essential technology in image processing because it reduces storage requirements, particularly for increasingly popular video content. Deep learning-based image compression has made significant progress and, in some cases, surpasses traditional codecs. Current methods employ autoencoders, typically built from convolutional neural networks, to map input images to lower-dimensional latent spaces for compression. However, these approaches often overlook low-frequency information, leading to sub-optimal compression performance. To address this challenge, this study proposes a novel image compression technique, Transformer and Convolutional Dual Channel Networks (TCDCN). TCDCN extracts both edge detail and low-frequency information, balancing high- and low-frequency compression. The method uses a variational autoencoder architecture with parallel stacked transformer and convolutional networks to create a compact representation of the input image through end-to-end training. This content-adaptive transform captures low-frequency information dynamically, improving compression efficiency. Compared with the classic JPEG method, our model achieves Bjøntegaard Delta rate (BD-rate) improvements of up to 19.12% and 18.65% on the Kodak and CLIC test datasets, respectively. It also surpasses state-of-the-art solutions by margins of 0.47% and 0.74%, further improving image compression coding efficiency. The results underscore the effectiveness of our approach in extending the capabilities of existing techniques, marking a significant step forward in image compression.
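The BD-rate summarizes the average bitrate difference between two codecs at equal quality. The classic Bjøntegaard calculation proceeds in four steps:

1. Fit a cubic polynomial of log-rate as a function of PSNR for each codec.
2. Integrate both fits over their overlapping PSNR range.
3. Average the difference over that range.
4. Convert the result back to a percentage.

Below is a minimal NumPy sketch of this method. Some evaluations use piecewise cubic interpolation instead, and the paper's exact tool is not stated. The function name and sample rate/PSNR points are hypothetical.

```python
from typing import Sequence

import numpy as np


def bd_rate(rate_ref: Sequence[float], psnr_ref: Sequence[float],
            rate_test: Sequence[float], psnr_test: Sequence[float]) -> float:
    """Average bitrate difference (%) of the test codec vs. the reference at equal PSNR.

    Negative values mean the test codec needs fewer bits. Each curve needs >= 4 points.
    """
    fit_ref = np.polyfit(psnr_ref, np.log(rate_ref), 3)   # log-rate as a cubic in PSNR
    fit_test = np.polyfit(psnr_test, np.log(rate_test), 3)
    lo = max(min(psnr_ref), min(psnr_test))               # overlapping PSNR interval
    hi = min(max(psnr_ref), max(psnr_test))
    int_ref, int_test = np.polyint(fit_ref), np.polyint(fit_test)
    area_ref = np.polyval(int_ref, hi) - np.polyval(int_ref, lo)
    area_test = np.polyval(int_test, hi) - np.polyval(int_test, lo)
    avg_log_diff = (area_test - area_ref) / (hi - lo)
    return float((np.exp(avg_log_diff) - 1.0) * 100.0)


if __name__ == "__main__":
    psnr = [30.0, 33.0, 36.0, 39.0]
    ref = [0.25, 0.5, 1.0, 2.0]   # hypothetical bits per pixel for the reference codec
    test = [0.8 * r for r in ref]  # hypothetical codec using 20% fewer bits at every point
    print(round(bd_rate(ref, psnr, test, psnr), 2))  # -20.0
```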

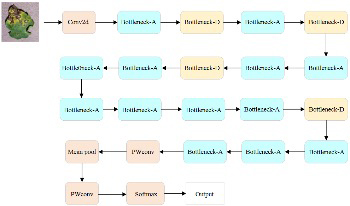

Crop diseases have long been a major threat to agricultural production, significantly reducing both the yield and the quality of agricultural products. Traditional disease recognition methods are costly and inefficient, making them inadequate for modern agricultural requirements. With the continuous development of artificial intelligence, deep learning-based crop disease image recognition has become a research hotspot. Convolutional neural networks can automatically extract features for end-to-end learning, yielding better recognition performance. However, they also face challenges such as high computational costs and difficulty of deployment on mobile devices. In this study, we aim to improve recognition accuracy, reduce computational costs, and shrink the model for deployment on mobile platforms. Targeting the recognition of tomato leaf diseases, we propose an innovative image recognition method based on a lightweight MCA-MobileNet and a Wasserstein GAN (WGAN). We developed the lightweight MCA-MobileNet model by incorporating an improved multiscale feature fusion module and a coordinate attention mechanism into MobileNetV2. The resulting model focuses more on disease spot information in tomato leaves while significantly reducing the parameter count. We employ WGAN for data augmentation to address insufficient and imbalanced original sample data. Experimental results demonstrate that the augmented dataset effectively improves the model's recognition accuracy and robustness. Compared with traditional networks, MCA-MobileNet shows significant improvements in metrics such as accuracy, precision, recall, and F1-score. With only 2.75M trainable parameters, it performs outstandingly in recognizing tomato leaf diseases and can be widely applied on mobile or embedded devices.
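Coordinate attention (CA) replaces global pooling with two 1D poolings, one along height and one along width. The resulting attention weights therefore keep positional information, which can help localize disease spots.

The NumPy forward pass below is a simplified sketch of the original CA design, not necessarily the exact variant used in MCA-MobileNet. It assumes a single feature map, writes 1×1 convolutions as matrix products, omits batch normalization, and uses ReLU in place of h-swish. The weight names are hypothetical.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def coordinate_attention(x: np.ndarray, w1: np.ndarray,
                         wh: np.ndarray, ww: np.ndarray) -> np.ndarray:
    """
    x:  (C, H, W) feature map.
    w1: (C_mid, C) shared 1x1 conv that reduces channels.
    wh: (C, C_mid) 1x1 conv producing height-wise attention.
    ww: (C, C_mid) 1x1 conv producing width-wise attention.
    """
    c, h, w = x.shape
    z_h = x.mean(axis=2)                     # (C, H): pool along the width
    z_w = x.mean(axis=1)                     # (C, W): pool along the height
    z = np.concatenate([z_h, z_w], axis=1)   # (C, H + W)
    f = np.maximum(w1 @ z, 0.0)              # shared transform + ReLU -> (C_mid, H + W)
    f_h, f_w = f[:, :h], f[:, h:]            # split back into the two directions
    g_h = sigmoid(wh @ f_h)                  # (C, H) attention along rows
    g_w = sigmoid(ww @ f_w)                  # (C, W) attention along columns
    return x * g_h[:, :, None] * g_w[:, None, :]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(8, 5, 6))
    y = coordinate_attention(x, rng.normal(size=(2, 8)),
                             rng.normal(size=(8, 2)), rng.normal(size=(8, 2)))
    print(y.shape)  # (8, 5, 6)
```

The pooled descriptors have only H + W positions per channel, so the module adds few parameters and little computation. This suits a lightweight model such as MobileNetV2.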

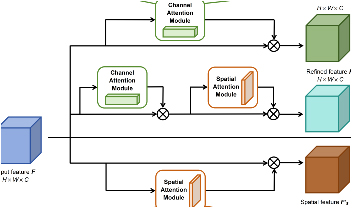

The rapid evolution of modern society has triggered a surge in the production of diverse waste in daily life. Effective waste classification through intelligent methods is essential for promoting green and sustainable development. Traditional waste classification techniques suffer from inefficiency and limited accuracy. To address these challenges, this study proposes a waste image classification model based on DenseNet-121 with an added attention module. To improve efficiency and accuracy, the study uses the publicly available TrashNet and Garbage classification datasets for their comprehensive coverage and balanced distribution of waste categories. Of the data, 80% was allocated for training and the remaining 20% for testing. An enhanced attention module, the series-parallel attention module (SPAM), which builds upon the convolutional block attention module (CBAM), was integrated into the DenseNet-121 architecture. This yields a new network model called the dense series-parallel attention neural network (DSPA-Net). DSPA-Net was trained and evaluated alongside other CNN models on TrashNet and Garbage classification. It achieved accuracies of 90.2% and 92.5%, respectively, surpassing DenseNet-121 and alternative image classification algorithms. These findings underscore the potential for efficient and accurate intelligent waste classification.
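CBAM, the baseline that SPAM builds on, applies channel attention and then spatial attention in series:

- **Channel attention:** a shared MLP over average- and max-pooled channel descriptors.
- **Spatial attention:** a 7×7 convolution over channel-wise average and max maps.

The NumPy sketch below covers plain CBAM only. SPAM's series-parallel arrangement is not detailed in the abstract and is not reproduced here. The weights are hypothetical inputs, and batch normalization is omitted.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def conv2d_same(x: np.ndarray, k: np.ndarray) -> np.ndarray:
    """Zero-padded 'same' cross-correlation: x (C_in, H, W), k (C_in, kh, kw) -> (H, W)."""
    _, h, w = x.shape
    _, kh, kw = k.shape
    xp = np.pad(x, ((0, 0), (kh // 2, kh // 2), (kw // 2, kw // 2)))
    out = np.zeros((h, w))
    for i in range(kh):
        for j in range(kw):
            out += np.einsum("c,chw->hw", k[:, i, j], xp[:, i:i + h, j:j + w])
    return out


def channel_attention(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """w1: (C_mid, C), w2: (C, C_mid); the same two-layer MLP is shared by both pooled vectors."""
    def mlp(v: np.ndarray) -> np.ndarray:
        return w2 @ np.maximum(w1 @ v, 0.0)
    return sigmoid(mlp(x.mean(axis=(1, 2))) + mlp(x.max(axis=(1, 2))))  # (C,)


def spatial_attention(x: np.ndarray, k: np.ndarray) -> np.ndarray:
    """k: (2, 7, 7) kernel applied to stacked channel-wise mean and max maps."""
    pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # (2, H, W)
    return sigmoid(conv2d_same(pooled, k))              # (H, W)


def cbam(x: np.ndarray, w1: np.ndarray, w2: np.ndarray, k: np.ndarray) -> np.ndarray:
    x = x * channel_attention(x, w1, w2)[:, None, None]  # channel attention first
    return x * spatial_attention(x, k)[None, :, :]       # then spatial attention (series)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feat = rng.normal(size=(16, 7, 7))
    out = cbam(feat, rng.normal(size=(4, 16)), rng.normal(size=(16, 4)),
               rng.normal(size=(2, 7, 7)))
    print(out.shape)  # (16, 7, 7)
```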