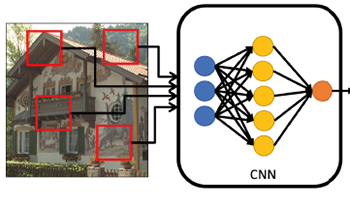

Feature-Product networks (FP-nets) are a novel deep-network architecture inspired by principles of biological vision. These networks contain so-called FP-blocks, which learn two different filters for each input feature map and multiply the two filter outputs. This design is inspired by models of end-stopped neurons, which are common in cortical area V1 and especially in V2. The authors apply FP-nets to blind image quality assessment (IQA) and evaluate them on three IQA benchmarks. They show that FP-nets deliver state-of-the-art performance while being significantly more compact than competing models. A simple attention mechanism yields a further improvement. The authors suggest that the good results may be related to the bio-inspired design principles they employ.
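
The core operation described above, two filters per input feature map whose outputs are multiplied, can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: the helper names `conv2d_valid` and `feature_product` are hypothetical, the two filters are fixed, hand-picked derivative kernels rather than learned ones, and any other layers an FP-block may contain are omitted. The example only aims to show why such a product relates to end-stopping: it vanishes on a straight edge and responds where the image varies in two directions, such as at a corner.

```python
def conv2d_valid(image, kernel):
    """Plain 'valid' 2D cross-correlation of one feature map with one kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def feature_product(feature_maps, filters_a, filters_b):
    """Apply two filters to each input map and multiply the responses elementwise."""
    outputs = []
    for fmap, k_a, k_b in zip(feature_maps, filters_a, filters_b):
        resp_a = conv2d_valid(fmap, k_a)
        resp_b = conv2d_valid(fmap, k_b)
        outputs.append(
            [[a * b for a, b in zip(row_a, row_b)]
             for row_a, row_b in zip(resp_a, resp_b)]
        )
    return outputs


# Assumption: fixed horizontal and vertical derivative kernels stand in for
# the two learned filters of an FP-block.
k_x = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
k_y = [[-1, -1, -1], [0, 0, 0], [1, 1, 1]]

# A straight vertical edge: intensity changes in one direction only.
edge = [[0, 0, 1, 1, 1] for _ in range(5)]

# A corner: intensity changes in both directions.
corner = [[1 if (i >= 2 and j >= 2) else 0 for j in range(5)] for i in range(5)]

edge_out = feature_product([edge], [k_x], [k_y])
corner_out = feature_product([corner], [k_x], [k_y])

print("edge product:  ", edge_out[0])
print("corner product:", corner_out[0])
```

On the straight edge the vertical-derivative response is zero everywhere, so the product is zero as well; at the corner both responses are nonzero and the product is positive. In an actual FP-net the two filters would be learned per feature map rather than fixed as here.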