We present a comparative study of pose-based vs. video-based Human Action Recognition (HAR) methods for driver monitoring in car cockpits. In this context, comparisons of neural network architectures from the field of deep learning-based video understanding are scarce. However, pose- and video-based HAR has significant potential for advanced driver-assistance systems in semi-autonomous driving on public roads. We compare prediction performance, per-class false-negative rate, model size, computational requirements, and inference latency on the established Drive&Act and the proprietary Driver Action Insight datasets. While the diversity and scale of available datasets make comparisons challenging, results suggest that both approaches benefit from pretraining, but pose- and video-based techniques perform differently for specific action classes, such as those that depend on body motion or the appearance of objects.

Autonomous vehicles currently rely on High-Definition (HD) maps for precise localization and path planning. However, traditional HD mapping approaches suffer from high costs, inherent rigidity, and slow update cycles, making them inadequate for dynamic urban environments. This paper presents a novel lightweight collaborative mapping architecture that enables real-time map updates through multi-agent cooperation. Our approach combines Joint Compatibility Branch and Bound (JCBB) for data association, Dempster-Shafer Theory (DST) for uncertainty quantification and landmark classification, and Extended Kalman Filter (EKF) for landmark pose estimation. Experimental validation using the CARLA simulator demonstrates accurate landmark classification and localization. Furthermore, collaborative data fusion reduces false positives and improves overall system reliability.
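As a rough illustration of how Dempster-Shafer evidence from two vehicles might be fused for landmark classification, the sketch below applies Dempster's rule of combination. The two-hypothesis frame (`"real"` vs. `"false"`), the mass values, and the function name `combine` are assumptions made for this example; they are not taken from the paper.

```python
from itertools import product


def combine(m1, m2):
    """Dempster's rule: fuse two mass functions keyed by frozenset hypotheses."""
    combined = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            # Agreeing evidence accumulates on the intersection.
            combined[inter] = combined.get(inter, 0.0) + wa * wb
        else:
            # Disjoint hypotheses contribute to the conflict mass.
            conflict += wa * wb
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict")
    # Renormalise so the fused masses sum to one.
    return {k: v / (1.0 - conflict) for k, v in combined.items()}


# Hypothetical frame of discernment: is the detected landmark real or a false positive?
REAL = frozenset({"real"})
FALSE = frozenset({"false"})
EITHER = REAL | FALSE  # mass assigned here expresses ignorance

# Hypothetical observations from two cooperating vehicles.
vehicle_a = {REAL: 0.6, EITHER: 0.4}
vehicle_b = {REAL: 0.7, FALSE: 0.1, EITHER: 0.2}

fused = combine(vehicle_a, vehicle_b)
```

In this toy case the fused belief in `REAL` is higher than either vehicle's individual belief, which is the kind of effect that would let collaborative fusion suppress false positives.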

Accurate registration of subsea Light Detection and Ranging (LiDAR) point clouds is critical for offshore metrology, where millimeter-level errors can significantly impact operational cost and risk. This study evaluates automated registration methods for static scan positions acquired by Kraken Robotics systems. Two approaches were implemented in Open3D: a hierarchical tree-based Iterative Closest Point (ICP) method and a pose-graph multiway registration framework. The methods were tested on multiple real subsea datasets containing 19–33 high-density scans per subsea scene and on synthetic datasets generated with Digital Imaging and Remote Sensing Image Generation (DIRSIG) to enable ground-truth evaluation. Results show that multiway registration provides improved global consistency, lower adjacent-scan Root Mean Square Error (RMSE), and reduced processing time compared to tree-based ICP. Ground-truth analysis demonstrated sub–sampling-level performance, corresponding to an expected 1–4 mm alignment accuracy for real datasets. Global coarse registration provided no measurable benefit for well-initialized static surveys. The final analysis demonstrates that multiway registration enables accurate, efficient, and fully automated subsea LiDAR alignment, reducing manual effort and improving metrology reliability.
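The exact definition of adjacent-scan RMSE used in the study is not given here. A minimal sketch of one common formulation, assuming nearest-neighbor correspondences between two already-aligned scans and a distance cutoff for inliers, could look as follows. The function name, the brute-force search, and the sample coordinates are illustrative assumptions only.

```python
import math


def adjacent_scan_rmse(source, target, max_dist):
    """RMSE of nearest-neighbor distances from source points to target points.

    Only correspondences closer than max_dist count as inliers.
    Brute-force search: fine for a toy example, too slow for dense scans.
    """
    squared = []
    for p in source:
        d = min(math.dist(p, q) for q in target)
        if d <= max_dist:
            squared.append(d * d)
    if not squared:
        raise ValueError("no correspondences within max_dist")
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical aligned scans (metres) with residual offsets of 3 mm and 4 mm.
scan_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
scan_b = [(0.0, 0.0, 0.003), (1.0, 0.0, 0.004)]

rmse_m = adjacent_scan_rmse(scan_a, scan_b, max_dist=0.05)
```

For real high-density scans a spatial index such as a k-d tree would replace the brute-force loop.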

LED flicker is a persistent artifact in imaging, where lights modulated via Pulse Width Modulation (PWM) above 90 Hz appear steady to humans but produce temporal intensity variations in captured video. While hardware mitigations like split-pixel architectures reduce flicker, they introduce a fundamental trade-off with motion blur. Progress in learned LED flicker mitigation (LFM) is currently hindered by a lack of public ground-truth datasets. We address this gap with ISET-LFM, an open-source physics-based simulation framework that models LED flicker in driving scenes. Built on the ISET ecosystem, our pipeline combines camera motion simulation with an analytical flicker model to generate realistic dual-exposure frame sequences alongside flicker-free ground truth. We provide a synthetic dataset of scene radiance, enabling benchmarking and training of LFM algorithms across diverse sensor and ISP architectures. The code and dataset are available at https://github.com/AyushJam/iset-lfm and https://purl.stanford.edu/wd776hn7919, respectively.
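To see why a PWM-driven LED flickers in captured video, the sketch below integrates an idealized square-wave PWM signal over a camera exposure window in closed form. It is not the ISET-LFM flicker model; the function names, the ideal square wave, and the parameter values (100 Hz PWM, 50% duty cycle, 1 ms exposure, 30 fps) are assumptions chosen for illustration.

```python
def pwm_on_time(t, freq_hz, duty):
    """Cumulative LED on-time in [0, t] for an ideal PWM square wave.

    The LED is assumed on during the first `duty` fraction of each period.
    """
    period = 1.0 / freq_hz
    full_periods, remainder = divmod(t, period)
    return full_periods * duty * period + min(remainder, duty * period)


def captured_intensity(t_start, exposure_s, freq_hz, duty):
    """Fraction of the exposure window during which the LED was on (0 to 1)."""
    on = (pwm_on_time(t_start + exposure_s, freq_hz, duty)
          - pwm_on_time(t_start, freq_hz, duty))
    return on / exposure_s


# Hypothetical capture: 30 fps, 1 ms exposure, LED at 100 Hz with 50% duty cycle.
frames = [captured_intensity(k / 30.0, 0.001, 100.0, 0.5) for k in range(6)]

# A long exposure spanning one full PWM period averages the flicker out.
long_exposure = captured_intensity(0.0037, 0.01, 100.0, 0.5)
```

Short exposures land entirely inside an on or off phase, so the recorded LED brightness jumps between frames, while an exposure covering a whole PWM period records the duty-cycle average. Longer exposures accumulate more motion blur, which is the trade-off the abstract refers to.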

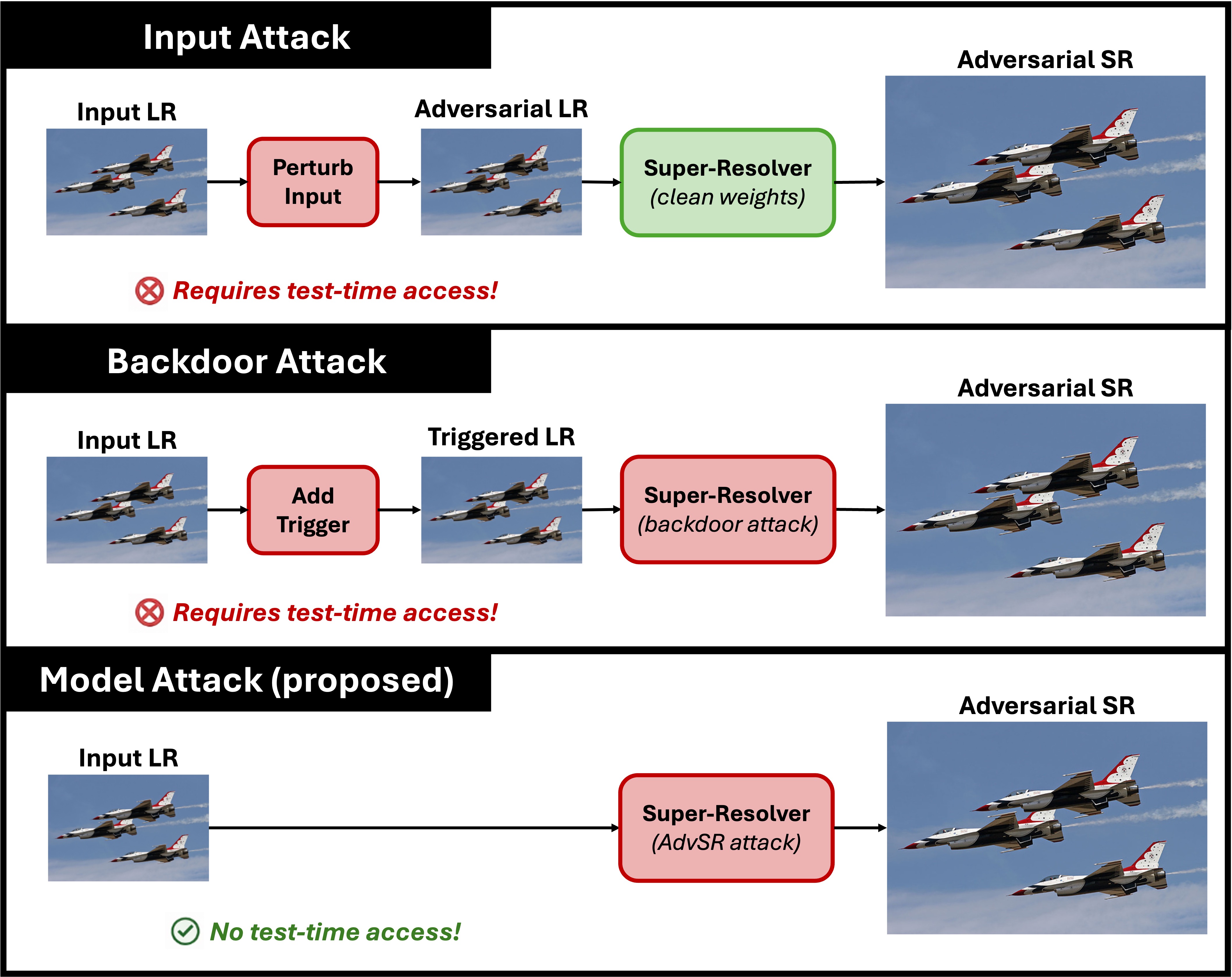

Data-driven super-resolution (SR) methods are often integrated into imaging pipelines as preprocessing steps to improve downstream tasks such as classification and detection. However, these SR models introduce a previously unexplored attack surface into imaging pipelines. In this paper, we present AdvSR, a framework demonstrating that adversarial behavior can be embedded directly into SR model weights during training, requiring no access to inputs at inference time. Unlike prior attacks that perturb inputs or rely on backdoor triggers, AdvSR operates entirely at the model level. By jointly optimizing for reconstruction quality and targeted adversarial outcomes, AdvSR produces models that appear benign under standard image quality metrics while inducing downstream misclassification. We evaluate AdvSR on three SR architectures (SRCNN, EDSR, SwinIR) paired with a YOLOv11 classifier and demonstrate that AdvSR models can achieve high attack success rates with minimal quality degradation. These findings highlight a new model-level threat for imaging pipelines, with implications for how practitioners source and validate models in safety-critical applications.

VR research rarely fails in dramatic ways. It frays in the margins. It slips. It drifts. A timestamp is off. A controller desyncs for a moment. A study crashes right as a participant reaches the final task. These moments feel small and forgettable, yet they accumulate. In this paper, we explore the fusion of Fulcrum, a generalized support system for user-facing studies, and a new and improved ScryVR, a Unity package built to support user-facing VR studies. The combined system offers clear templates, organized study structures, and simple building blocks that help researchers understand and adjust their experiments without getting lost in technical details. To evaluate this solution, we conducted two case studies that used the combined system as the main experimental tool. Ultimately, the system greatly streamlined VR setup and automated many crucial aspects of experiment facilitation. However, the case studies also exposed limitations, primarily the lack of a robust error reporting system that reports in real time and of a more versatile solution for analytical tasks.

Virtual Reality (VR) has recently attracted growing attention in mental health applications due to its ability to immerse users in controlled and interactive environments. This paper presents a VR-Based AI Mental Health Companion, a multimodal system designed to support therapy, mindfulness, and real-time stress detection within immersive VR environments. The system integrates artificial intelligence (AI) techniques, including natural language processing, emotion recognition, and physiological signal analysis, to create personalized mindfulness experiences and interactive meditation coaching. GPT-powered non-player characters (NPCs) are designed with specific therapeutic roles in mind, such as guided mindfulness facilitation, emotional support, and stress-aware conversational therapy. The system employs pose estimation to identify key body points and applies rule-based logic to assess posture accuracy during guided yoga exercises, providing real-time feedback to support correct movement execution. The work also includes biometric integration, such as EEG monitoring for enhanced emotional sensing. Expanding language support, increasing the diversity of pose datasets, and incorporating feedback from clinical professionals are identified as ways to further refine the system. By combining immersive VR environments, GPT-driven therapeutic NPCs, and real-time posture validation, the proposed VR-Based AI Mental Health Companion demonstrates the potential of AI–VR convergence as a scalable approach to mental health care, with promising applications in stress management and preventative therapy.
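The rule-based posture check described above could, under simple assumptions, be reduced to comparing joint angles computed from estimated keypoints against allowed ranges. The following is a minimal sketch of that idea; the keypoint names, the 2D coordinates, the angle thresholds, and the functions `joint_angle` and `check_pose` are hypothetical and not taken from the system itself.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the segments b-a and b-c (2D)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("coincident keypoints")
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


def check_pose(keypoints, rules):
    """Return feedback messages for every joint whose angle violates its rule."""
    feedback = []
    for name, (a, b, c, low, high) in rules.items():
        angle = joint_angle(keypoints[a], keypoints[b], keypoints[c])
        if not low <= angle <= high:
            feedback.append(f"{name}: {angle:.0f} deg, expected {low}-{high} deg")
    return feedback


# Hypothetical rule: the left arm should be nearly straight in this pose.
rules = {"left_elbow": ("left_shoulder", "left_elbow", "left_wrist", 160, 180)}

straight_arm = {"left_shoulder": (0, 0), "left_elbow": (1, 0), "left_wrist": (2, 0)}
bent_arm = {"left_shoulder": (0, 0), "left_elbow": (1, 0), "left_wrist": (1, 1)}

ok_feedback = check_pose(straight_arm, rules)
bad_feedback = check_pose(bent_arm, rules)
```

In a real-time setting the keypoints would come from a pose estimator on each frame, and the returned messages would drive the corrective feedback shown to the user.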

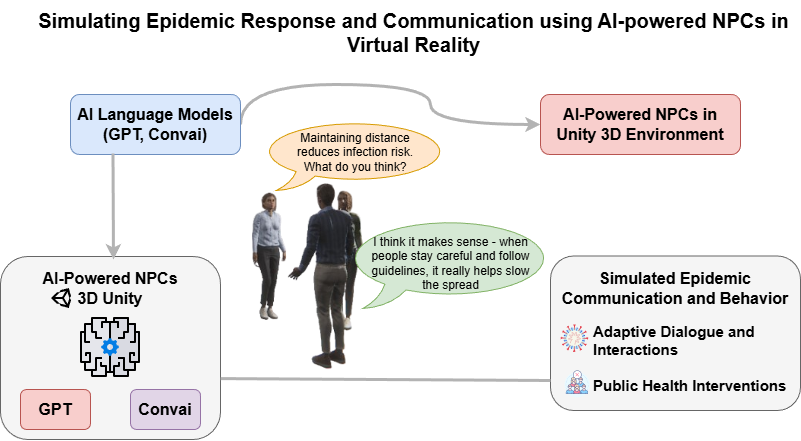

This study introduces a simulation framework for examining epidemic communication and behavioral interventions using AI-driven non-player characters (NPCs) within a 3D environment created in Unity. The framework addresses the limitations of conventional epidemiological models by integrating agents that exhibit adaptive, context-sensitive decision-making. In contrast to static rule-based systems, agents employ large language models (LLMs) and behavior trees to produce realistic conversations and responses in epidemic scenarios, resulting in interactions that closely resemble real-world human communication. The simulation enables real-time, natural-language communication between agents and users. Public health interventions, such as social distancing measures and communication efforts, can be applied and evaluated in different ways. Agents are aware of their surroundings and of their distance to other people, so they can act appropriately. Unity's rendering engine makes the simulation more realistic, and therefore more engaging and useful. The study shows that agents were able to take part in COVID-19-related conversations using GPT and Convai and to give appropriate answers to user questions. The framework supports large-scale experiments and can be used in many different public health settings. Future improvements will include simulating emotional states, increasing agent diversity, and adding visual health indicators. Overall, the framework provides a scalable, ethical, and interactive instrument for researchers to examine human behavior, decision making, and intervention outcomes in simulated epidemic scenarios.

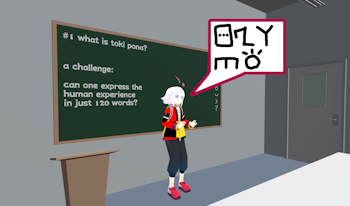

Monolingual learners frequently encounter barriers to language acquisition, ranging from financial constraints to a lack of situational confidence. Virtual Reality (VR) offers a promising solution by providing a "safe" digital environment for immersive learning experiences. This paper presents a comparative study of two distinct delivery methods for a language lecture within VR: a traditional video presentation and a 3D-modeled experience utilizing consumer-grade motion capture hardware. In addition, the paper shows how low-cost consumer motion capture hardware can be used to create educational content, addressing the financial barrier noted above. Overall, results were mixed: while both the video and motion capture versions yielded positive engagement, the video format demonstrated a larger quantitative improvement in results. As part of the evaluation, we analyze the performance of low-cost motion capture in educational content creation and propose design iterations to better isolate the variables influencing these learning gains.

This study aims to clarify the role of sound in evoking the sublime experience within a virtual reality (VR) environment. The sublime is a complex emotion combining awe and fear, arising from vast objects or overwhelming forces. VR is considered an effective medium for safely inducing this experience. However, existing research has predominantly focused on visual factors, and the influence of auditory stimuli—essential for immersion—remains insufficiently explored. In this study, a 3D 360° video of a volcanic crater was presented via a head-mounted display (HMD) under three auditory conditions: Silent, Normal (natural environmental sound), and Reverbed (processed sound). We evaluated the experience using subjective measures (Awe Experience Scale) and objective physiological measures (Electrodermal Activity, pupil diameter, gaze data). The results demonstrated that the presence of sound significantly amplified the sublime experience across both subjective and objective indices. Specifically, the Normal condition showed high integration with visual information, eliciting the strongest emotional arousal and significant pupil dilation. Conversely, while the Reverbed condition induced spatial exploratory behavior (gaze dispersion), it caused a sense of incongruence between sight and sound, tending to lower the quality of the experience. These findings suggest that audio-visual congruence is critical in designing sublime experiences in VR.