1. Introduction

The appearance of light-permeable materials is usually characterized with the adjectives transparent and translucent. The concepts of transparency and translucency are oftentimes used interchangeably in everyday life [22]. However, they are understood to be conceptually different, even by speakers of languages, such as Japanese, that offer no clear lexical distinction between the two [15,24].

Optically, the propagation of light in the material volume is described by the radiative transfer equation (RTE), in particular by the wavelength-dependent coefficients of absorption (σa) and scattering (σs), as well as by the scattering phase function. σa and σs are usually specified in inverse scene units; their reciprocals indicate the average distance a photon travels in a straight line within the material before it gets absorbed or scattered, respectively [17]. The lower the absorption and scattering, the easier it is to see through the material. Conversely, a higher absorption coefficient means that fewer photons manage to pass through the material, making the see-through image appear darker and lower in contrast, while a higher scattering coefficient means that more photons are redirected to different paths, less structure is preserved, and the see-through image appears blurrier. The scattering phase function characterizes the angular distribution of light after a scattering event. If absorption and scattering are large enough, or the object is thick enough (i.e., the distance a photon needs to travel is long, and the likelihood of a scattering or absorption event is correspondingly higher), the background image can become indiscernible, but some degree of subsurface light transport might still be detectable (e.g., in materials such as wax, marble, or milk) [17]. If no subsurface light transport is detectable, the material is said to be opaque [1].
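To make the role of the two coefficients concrete, the following Python sketch computes, for a homogeneous slab, the extinction coefficient σt = σa + σs, the mean free path 1/σt, the single-scattering albedo σs/σt, and the fraction of light that crosses a thickness d without being absorbed or scattered, T = exp(−σt·d) (the Beer-Lambert law). This unscattered light is what carries a sharp see-through image. The function names and example values are illustrative assumptions rather than parameters of the stimuli used in this study, and the sketch ignores refraction and Fresnel reflection at the surfaces.

```python
import math


def extinction(sigma_a: float, sigma_s: float) -> float:
    """Extinction coefficient sigma_t = sigma_a + sigma_s (inverse scene units)."""
    return sigma_a + sigma_s


def mean_free_path(sigma_a: float, sigma_s: float) -> float:
    """Average straight-line distance between interaction events, 1 / sigma_t."""
    sigma_t = extinction(sigma_a, sigma_s)
    return math.inf if sigma_t == 0 else 1.0 / sigma_t


def albedo(sigma_a: float, sigma_s: float) -> float:
    """Single-scattering albedo: probability that an interaction is a scattering event."""
    sigma_t = extinction(sigma_a, sigma_s)
    if sigma_t == 0:
        raise ValueError("albedo is undefined for a non-interacting medium")
    return sigma_s / sigma_t


def ballistic_transmittance(sigma_a: float, sigma_s: float, thickness: float) -> float:
    """Beer-Lambert fraction of light crossing `thickness` with no absorption or scattering."""
    return math.exp(-extinction(sigma_a, sigma_s) * thickness)


if __name__ == "__main__":
    # Hypothetical material seen through a thin part and a thick part of an object.
    for d in (0.1, 2.0):
        t = ballistic_transmittance(sigma_a=0.5, sigma_s=0.5, thickness=d)
        print(f"thickness={d}: unscattered fraction={t:.3f}")
```

With these hypothetical values, roughly 90% of the light crosses the thin part unscattered but only about 14% crosses the thick part, which is one simple way to see why thickness matters as much as the coefficients themselves.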

The ASTM Standard Terminology of Appearance [1] defines transparency as “the degree of regular transmission, thus the property of a material by which objects may be seen clearly through a sheet of it”, and transparent as “transmitting radiant energy without diffusion”. According to the same standard, translucency is “the property of a specimen by which it transmits light diffusely without permitting a clear view of objects beyond the specimen and not in contact with it”. According to Gerbino [8], “transparent substances, unlike translucent ones, transmit light without diffusing it”. In other words, from the optical point of view, the central distinction between transparency and translucency is the magnitude of subsurface scattering (or its absence). The CIE (International Commission on Illumination) emphasizes the perceptual aspect: “if it is possible to see an object through a material, then that material is said to be transparent. If it is possible to see only a “blurred” image through the material (due to some diffusion effect), then it has a certain degree of transparency and we can speak about translucency” [3,4].

The primary distinction drawn between transparent and translucent materials is the presence or absence of scattering, and the distinctness of the image seen through the material. Neither dictionary definitions nor state-of-the-art research in material appearance provide a more specific distinction or an objectively measurable boundary between the concepts of transparency and translucency. According to the CIE, “translucency is a subjective term that relates to a scale of values going from total opacity to total transparency” [3], which also highlights the lack of a universal definition of translucency. More standardized and objectively quantified concepts are haze, which results from wide-angle (>2.5°) scattering and is “defined as a property of the material whereby objects viewed through it appear to be reduced in contrast”, and clarity, which is associated with narrow-angle (<2.5°) scattering and is “defined in terms of the ability to perceive the fine detail of images through the material” [26,30]. The contrast and blur differences between images of a scene observed through a material and in plain view can help us measure haze and clarity, and they also provide visual cues to the transparency and translucency of see-through materials [15,29]. However, not all translucent materials permit seeing through them (e.g., the above-mentioned wax and milk), and a broad range of other cues, such as the luminance contrast between specular and non-specular areas [5,24] and the co-variation of 3D shape and shading [20,21], are used by the human visual system (HVS) for translucency perception (see [15] for a comprehensive review).
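As a simplified numerical reading of the haze definition, the Python sketch below takes samples of transmitted flux together with the angle by which each sample deviates from the incident direction, and reports haze as the percentage of transmitted flux deviating by more than 2.5°. It is an illustrative assumption, not a standardized measurement: instrumental procedures rely on integrating-sphere readings and corrections that are not modeled here, and clarity is not computed. The function name and sample values are hypothetical.

```python
from typing import Sequence

WIDE_ANGLE_DEG = 2.5  # deviation threshold separating wide-angle (haze) scattering


def haze_percent(deviation_deg: Sequence[float], flux: Sequence[float]) -> float:
    """Percentage of transmitted flux deviating by more than WIDE_ANGLE_DEG.

    deviation_deg[i] is the angle (degrees) between sample i and the incident
    direction; flux[i] is the transmitted flux carried by that sample.
    """
    if len(deviation_deg) != len(flux):
        raise ValueError("deviation_deg and flux must have the same length")
    total = sum(flux)
    if total <= 0:
        raise ValueError("no transmitted flux")
    wide = sum(f for a, f in zip(deviation_deg, flux) if a > WIDE_ANGLE_DEG)
    return 100.0 * wide / total


# Hypothetical samples: most light passes almost undeviated, some is scattered widely.
angles = [0.0, 0.5, 1.0, 5.0, 20.0]
fluxes = [60.0, 15.0, 5.0, 12.0, 8.0]
print(f"haze = {haze_percent(angles, fluxes):.1f}%")  # 20 of 100 flux units -> 20.0%
```

Note that a material could score low on this haze measure and still blur fine detail through narrow-angle scattering, which is why haze and clarity are treated as separate quantities.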

Figure 1.

According to the bell-shaped curve hypothesis [13], translucency is not mutually exclusive with transparency and opacity: as we move across the transparency-opacity spectrum from complete transparency, translucency gradually increases, reaches a yet undetermined peak, and then decreases until complete opacity is reached. The figure is reproduced from [13].


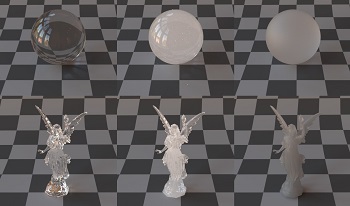

Figure 2.

According to the classification system for computer graphics proposed by Gerardin et al. [7], an increase in subsurface absorption gradually makes transparent materials opaque, but not translucent; an increase in subsurface scattering makes materials translucent and eventually fully opaque; and an increase in surface roughness makes transparent materials appear translucent, but not fully opaque.


Translucency is commonly considered to be a phenomenon “between the extremes of complete transparency and complete opacity” [4]. However, from both optical and perceptual perspectives, it remains largely ambiguous how transparency and translucency, as well as translucency and opacity, relate to each other, where the boundaries between them lie, and whether transparency-translucency-opacity is a single continuum. The position paper by Gigilashvili et al. [13] was the first to discuss this problem thoroughly: “Can a material possess some degree of transparency and translucency, or some degree of translucency and opacity at the same time? When do transparent materials begin to be considered translucent, or when do translucent ones become opaque?”, they ask. Referring to previous works [11,12,14], they conclude that, perceptually, transparency and opacity are ranges of a spectrum rather than discrete extreme points, and that translucency can co-exist with them in the same stimulus. They argue that the conceptual boundary between transparency and translucency, as well as between translucency and opacity, is fuzzy rather than discrete, and hypothesize that the magnitudes of translucency and transparency-opacity are related by a bell-shaped curve (Figure 1), where translucency “gradually increases, reaching a peak and then decreasing again while moving from transparency to opacity” [13].

The boundary is fuzzy from an optical perspective as well. State-of-the-art research confirms that translucency is affected by σa, σs, and roughness, but the exact nature of this effect remains to be investigated [15]. For instance, how much subsurface scattering is needed for a material to appear, and to be considered, translucent? Gerardin et al. [7] proposed a cuboid classification system (Figure 2) for computer graphics applications, in which subsurface absorption, subsurface scattering, and surface roughness (i.e., surface scattering) are the three axes. Along the absorption axis, materials range from transparent to gradually opaque, but never become translucent; along the subsurface scattering axis, transparent materials gradually become translucent as scattering increases, and eventually opaque if scattering is too high; finally, along the roughness axis, transparent materials gradually become translucent, but never reach full opacity, as “there is always some light transmitted by the object” [7].
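The qualitative structure of this classification can be written down as a small lookup table. The Python sketch below only encodes the orderings stated above for single-axis changes starting from a transparent material; the axis names are our own labels, and it deliberately contains no numeric thresholds, because the text above gives none and the exact boundaries remain an open question.

```python
from enum import Enum


class Appearance(Enum):
    TRANSPARENT = "transparent"
    TRANSLUCENT = "translucent"
    OPAQUE = "opaque"


# Ordered categories traversed when a single axis of the cuboid is increased
# from a transparent starting point, as described for Figure 2.
AXIS_PATHS = {
    "subsurface_absorption": (Appearance.TRANSPARENT, Appearance.OPAQUE),
    "subsurface_scattering": (
        Appearance.TRANSPARENT,
        Appearance.TRANSLUCENT,
        Appearance.OPAQUE,
    ),
    "surface_roughness": (Appearance.TRANSPARENT, Appearance.TRANSLUCENT),
}


def can_reach(axis: str, target: Appearance) -> bool:
    """True if increasing only `axis` can turn a transparent material into `target`."""
    return target in AXIS_PATHS[axis]


for axis, path in AXIS_PATHS.items():
    print(axis, "->", " -> ".join(a.value for a in path))
```

Encoding the axes separately makes explicit that only subsurface scattering passes through all three categories, which is the property the perceptual experiments can test against.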

Measurement, modeling, and reproduction of the appearance of light-permeable materials are important issues in both academia and industry (for instance, in 3D printing applications) [15,34]. Whether different appearance attributes, such as transparency, translucency, and opacity, are orthogonal or co-vary is an important question for material design applications [13,15]. Furthermore, disambiguating the conceptual boundaries is essential to visual appearance research, as conceptual misinterpretations of translucency and transparency in psychophysical experiments have been reported [13,15], which can bias experimental results as well as scientific communication. For this purpose, we conducted two psychophysical experiments to quantify the magnitudes of perceived transparency and perceived translucency of the stimuli and, subsequently, to test the Gaussian-like bell-shaped curve hypothesis by Gigilashvili et al. [13]. Furthermore, stimuli of varying σa, σs, and roughness were used in the experiments to evaluate how well the classification system of optical properties by Gerardin et al. [7] relates to perceptual attributes.

However, we hypothesize that the correlation between transparency and translucency, and the role of optical properties in that correlation, vary among shapes. This hypothesis is rooted in three observations made in state-of-the-art works. First, the amount of light that emerges from an object after subsurface light transport depends not only on σa and σs, but also on the thickness of the object, with thin objects appearing more transmissive than thick objects made of the same material [5,15]. Second, the visibility of the transmission image, i.e., the magnitude of transparency and translucency, depends not only on micro-scale surface roughness but also on macro-scale surface geometry: even if no subsurface scattering or absorption occurs and the surface is perfectly smooth on a microscopic level, the background might still not be visible if the shape of the object is complex (compare the ability to see through a flat window glass and a complex-shaped crystal vase) [15]. Third, the HVS has a poor ability to assess and invert optical processes in the scene, and instead relies on the luminance distribution and other statistical regularities in the image, dubbed image cues, which are modulated by the above-mentioned scale and geometry. Consequently, objects made of an identical material can differ considerably in appearance if they differ in size and shape, which led Gigilashvili [9] to propose referring to visual appearance as an object appearance problem rather than a material appearance problem. For this reason, we conducted the study on two different shapes: a simple and compact spherical object, and the complex Stanford Lucy [32] object with a broad distribution of thickness.

The contribution of this work is three-fold:

∙

We study the correlation between the magnitudes of translucency and transparency-opacity, and test the bell-shaped curve hypothesis proposed in previous works [13,15].

∙

We study how the magnitudes of perceived translucency and transparency are modulated by subsurface absorption and scattering, as well as surface roughness.

∙

We test the hypothesis that the correlations mentioned in the two previous points differ between the shapes.

The manuscript is organized as follows: in the next section, we present the research methodology. In the following section, we present the results, followed by a discussion. Finally, we conclude and outline directions for future work.

Figure 3.

Bernhard Vogl’s museum environment map (also known as At the Window (Wells, UK)) [2,19] was used for rendering the visual stimuli. It was rotated by 180° to place the objects under a side-lit illumination condition (refer to Supplementary Material 3 for the exact transformations).
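For readers reproducing a similar setup: if an environment map is stored in the common equirectangular (latitude-longitude) layout, a rotation about the vertical axis by an angle φ amounts to a circular horizontal shift of the image by φ/360° of its width, so a 180° rotation is a shift by half the width. The NumPy sketch below assumes that layout and a rotation about the vertical axis only; the renderer-specific transformations actually used are those given in Supplementary Material 3, and the shift direction relative to any particular renderer's convention is an assumption.

```python
import numpy as np


def rotate_equirect_yaw(env: np.ndarray, degrees: float) -> np.ndarray:
    """Rotate an equirectangular map (H x W [x C]) about the vertical axis.

    Each column of a lat-long map corresponds to a fixed azimuth, so a yaw
    rotation is a circular shift along the width axis. Only multiples of
    360/W degrees are represented exactly; other angles are rounded.
    """
    width = env.shape[1]
    shift = int(round(degrees / 360.0 * width))
    return np.roll(env, shift, axis=1)


if __name__ == "__main__":
    env = np.arange(2 * 8).reshape(2, 8)  # toy 2 x 8 "map"
    print(rotate_equirect_yaw(env, 180.0)[0])  # [4 5 6 7 0 1 2 3]
```

Because the shift is circular, no pixels are lost or resampled for a 180° rotation whenever the map width is even, which keeps the lighting energy of the map unchanged.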


4. Discussion

The plots in Figs. 7–8 show that the relationship between transparency-opacity and translucency follows the hypothesized bell-shaped curve for a spherical object. With a Gaussian function fitted to the data, the transparency score explains 71% of the variance in the translucency evaluations. As hypothesized by Gigilashvili et al. [13], translucency is not mutually exclusive with transparency and opacity, and the boundary between them is fuzzy rather than a discrete point. Perceived translucency peaks at medium transparency-opacity and is low for highly transparent and highly opaque objects. Interestingly, this hypothesis does not hold for the Lucy shape. The variance in translucency scores is smaller for Lucy, and the tails of the curve are less visible. However, it is also worth mentioning that the range of transparency-opacity is narrower for Lucy objects. In other words, while our dataset contains spherical objects that were considered very transparent or very opaque, Lucy objects are usually considered neither very transparent nor very opaque, and even those nearer to the extremes of the transparency-opacity axis have moderate translucency values. This can be attributed to shape and scale differences between the sphere and Lucy. The sphere is a compact object with simple surface geometry that, if scattering, absorption, and roughness are low, permits seeing the scene behind it, whereas a Lucy made of the same transmissive smooth material does not permit seeing the background, as its complex surface geometry blurs, distorts, and occludes the background image (marked in red in Figure 11). On the other hand, if a sphere is highly absorbing and/or scattering, it occludes the background completely, whereas a similarly absorbing and scattering Lucy would block the passage of light in its torso, but its thin parts, such as the wings and hands (marked in yellow in Fig. 11), would still permit observing part of the background; such an object can thus be considered opaque and somewhat translucent at the same time. Furthermore, although the impact of optical properties on the perceptual attributes of transparency, translucency, and opacity largely follows the cuboid representation proposed by Gerardin et al. [7], the way optical properties affect perception differs between the shapes.
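The kind of fit reported above can be illustrated with a short sketch: a Gaussian g(x) = a·exp(−(x − μ)²/(2s²)) is fitted to translucency ratings as a function of transparency ratings, and the coefficient of determination R² gives the proportion of variance in translucency explained by the fit (71% for the spheres, as reported above). The Python code below uses SciPy's `curve_fit` on synthetic, hypothetical ratings on a 0 to 100 scale; it does not reproduce the study's data, and the exact model form and fitting routine used in the study (for example, whether an offset term was included) are not stated here and are assumptions.

```python
import numpy as np
from scipy.optimize import curve_fit


def gaussian(x, amplitude, mu, sigma):
    """Bell-shaped curve: peak `amplitude` at transparency `mu`, width `sigma`."""
    return amplitude * np.exp(-((x - mu) ** 2) / (2.0 * sigma ** 2))


def fit_bell_curve(transparency, translucency):
    """Fit a Gaussian to (transparency, translucency) ratings; return params and R^2."""
    x = np.asarray(transparency, dtype=float)
    y = np.asarray(translucency, dtype=float)
    # Rough starting guesses: peak height, location of the peak, and a broad width.
    p0 = [y.max(), x[np.argmax(y)], (x.max() - x.min()) / 4.0]
    params, _ = curve_fit(gaussian, x, y, p0=p0)
    residuals = y - gaussian(x, *params)
    r_squared = 1.0 - np.sum(residuals ** 2) / np.sum((y - y.mean()) ** 2)
    return params, r_squared


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic ratings: translucency peaks at medium transparency, plus noise.
    transparency = np.linspace(0, 100, 40)
    translucency = gaussian(transparency, 60.0, 50.0, 18.0) + rng.normal(
        0, 5, transparency.size
    )
    (amp, mu, sigma), r2 = fit_bell_curve(transparency, translucency)
    print(f"peak={amp:.1f} at transparency={mu:.1f}, width={abs(sigma):.1f}, R^2={r2:.2f}")
```

R² computed this way is only meaningful relative to the spread of the data: when the transparency-opacity range is narrow, as for the Lucy objects, the same Gaussian model has less variation to explain and the fit quality can drop even if the underlying trend is similar.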

Figure 11.

Differences in image cues explain the cross-shape differences in the results. It is possible to see the background through a smooth sphere made of a material with a low extinction coefficient (see Fig. 4). However, the same does not apply to Lucy. Its complex surface geometry distorts (marked with a red circle) or completely occludes (see the upper part of the torso in the left image) the background even if its micro-level roughness and extinction coefficient are low. On the other hand, the complex shape provides a broad range of cues. In the right image, the torso looks opaque, while the wings and hand appear translucent (marked with a yellow circle).


Even though the small number of shapes and materials does not permit us to generalize our findings, the observed differences between a simple sphere and the complex Lucy indicate that, in practice, there may be no universal model capable of characterizing transparency and translucency perception, as well as the correlation between them, solely on the basis of optical properties. The observed differences between spherical and Lucy shapes have practical relevance in material design, modeling, and cross-shape appearance reproduction. Even if we understand how manipulating one perceptual attribute affects another for a given object, this understanding might not generalize to other shapes, and manipulating transparency might have unintended effects on an object’s translucency appearance, or vice versa. For instance, a Lucy made of a highly transparent material can look more translucent than a spherical object either made of the same material or having the same apparent transparency. State-of-the-art studies on translucency perception propose that the HVS relies on image cues [5,15,38] that, in addition to optical properties, are also modulated by the illumination, scale, shape, and surface geometry of an object. The bell-shaped curve hypothesis holds for objects with simple surface geometry that permit seeing through if sufficiently smooth and transmissive, whereas a Gaussian function describes less of the variation in the data when an object with complex surface geometry and varying thickness is examined. The low number of samples did not permit us to model the correlation between optical and perceptual properties, but we demonstrated that, even if such a model exists, it would not generalize to all objects and would need to be tailored to each object’s shape, scale, and surface geometry (compare the marked regions between the two plots in Fig. 10). For the above-mentioned reasons, we believe that the relationship between transparency and translucency is more likely to be explained from the perspective of image cues.

For spherical objects, we noticed three outliers with moderate transparency and very low translucency. All three objects turned out to have a smooth surface, a high extinction coefficient, and a low albedo. One of them is illustrated in Figure 12. As absorption is dominant and there is little scattering, the object looks dark and mostly opaque, without any cues to scattering and translucency. However, some photons manage to pass through and are focused on the right side of the sphere, forming a small caustic pattern (marked in red in Fig. 12) that, if inspected carefully, might indicate the presence of transmission, which apparently led observers to assess the object as not highly opaque. This once again illustrates that perceptual judgments are rooted in image cues that are extremely challenging to predict from knowledge of optical properties alone.
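Many renderers parameterize participating media by an extinction coefficient σt and an albedo α instead of σa and σs; the two parameterizations are related by σs = α·σt and σa = (1 − α)·σt. The Python sketch below converts between them and flags absorption-dominated media such as the outliers described above. The class name, the 0.5 cutoff for "absorption-dominated", and the example values are illustrative assumptions, not the parameters of the stimulus in Figure 12.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Medium:
    sigma_a: float  # absorption coefficient (inverse scene units)
    sigma_s: float  # scattering coefficient (inverse scene units)

    @classmethod
    def from_extinction_albedo(cls, sigma_t: float, albedo: float) -> "Medium":
        """Build a medium from extinction sigma_t and single-scattering albedo."""
        if sigma_t < 0 or not 0.0 <= albedo <= 1.0:
            raise ValueError("need sigma_t >= 0 and 0 <= albedo <= 1")
        return cls(sigma_a=(1.0 - albedo) * sigma_t, sigma_s=albedo * sigma_t)

    @property
    def sigma_t(self) -> float:
        return self.sigma_a + self.sigma_s

    @property
    def albedo(self) -> float:
        return self.sigma_s / self.sigma_t if self.sigma_t > 0 else 0.0

    def absorption_dominated(self, threshold: float = 0.5) -> bool:
        """Hypothetical rule: most interactions absorb rather than scatter light."""
        return self.albedo < threshold


# Hypothetical high-extinction, low-albedo medium, similar in spirit to the outliers.
m = Medium.from_extinction_albedo(sigma_t=20.0, albedo=0.1)
print(m.sigma_a, m.sigma_s, m.absorption_dominated())
```

In such a medium, most light that enters is absorbed rather than redistributed, so there is little diffuse glow to signal translucency, while any light that does exit along refracted paths can still form a caustic like the one observed.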

Figure 12.

A material with a high extinction coefficient and a low albedo turned out to be an outlier. Its translucency is considered low due to the lack of scattering, but it is not considered fully opaque due to the caustic pattern observed on the right side (marked with a red circle).


We made one interesting observation: the highest translucency scores given by the observers are significantly lower than the maximum permitted by the scale (100). While some stimuli were considered very transparent or very opaque, no stimulus was considered very translucent. There are two possible explanations for this. First, the limited range of stimuli used in this experiment might not contain sufficiently translucent materials. Second, we believe that this could rather be attributed to the conceptual ambiguity of translucency. While the understanding of the extremes of opacity and transparency is more universal, observers do not know what the extreme, or the peak, of translucency is, and the lack of a clear reference forces them to approach translucency assessment with caution, leaving open the possibility that more translucent materials might exist on the scale.

Finally, the work comes with limitations that need to be considered. First, all findings reported above are limited to the small range of materials and shapes studied in this work. For instance, while we use an isotropic phase function for all stimuli, the phase function alone [18] can have a considerable impact on appearance, and a broader range of materials needs to be studied in the future. Second, even though we provided technical definitions of the concepts, these definitions still leave room for subjective interpretation in terms of perception. For instance, similar to reports in previous studies [13,14], the observers mentioned in post-experiment interviews that assessing transparency, translucency, and opacity is a challenging task when the object has varying thickness and its appearance differs strikingly between different parts (e.g., refer to Fig. 11). Besides, in this work, we assume that transparency and opacity are two mutually exclusive ends of the same continuum, so that an increase in opacity automatically means a decrease in transparency. In this regard, we rely on the technical definition [1], which defines opacity as “the reciprocal of the transmittance factor”. However, the actual perceptual relationship between transparency and opacity can potentially be more complex than that.
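Two of the quantities in this paragraph can be stated compactly. An isotropic phase function scatters light uniformly over the sphere of directions, p = 1/(4π); a widely used anisotropic alternative, not used in this study and included here only for comparison, is the Henyey-Greenstein function with asymmetry parameter g. Opacity, following the definition adopted here, is the reciprocal of the transmittance factor, so it decreases monotonically as transmittance increases, which is exactly the assumption that opacity and transparency are opposite ends of one continuum. The Python sketch below checks the phase-function normalization numerically; the function names are our own.

```python
import math


def isotropic_phase(cos_theta: float) -> float:
    """Isotropic phase function: equal probability density for every direction."""
    return 1.0 / (4.0 * math.pi)


def henyey_greenstein(cos_theta: float, g: float) -> float:
    """Henyey-Greenstein phase function; g > 0 favors forward scattering."""
    denom = (1.0 + g * g - 2.0 * g * cos_theta) ** 1.5
    return (1.0 - g * g) / (4.0 * math.pi * denom)


def sphere_integral(phase, n: int = 100_000) -> float:
    """Integrate a rotationally symmetric phase function over all directions.

    Uses d(omega) = 2*pi * d(cos_theta) and the midpoint rule on [-1, 1].
    """
    h = 2.0 / n
    return 2.0 * math.pi * h * sum(phase(-1.0 + (i + 0.5) * h) for i in range(n))


def opacity(transmittance_factor: float) -> float:
    """Opacity as the reciprocal of the transmittance factor (0 < T <= 1)."""
    if not 0.0 < transmittance_factor <= 1.0:
        raise ValueError("transmittance factor must lie in (0, 1]")
    return 1.0 / transmittance_factor


print(sphere_integral(isotropic_phase))                        # ~1.0
print(sphere_integral(lambda mu: henyey_greenstein(mu, 0.8)))  # ~1.0
print(opacity(0.5), opacity(0.25))
```

Both phase functions integrate to one, so they redistribute the same scattered energy and differ only in its angular distribution; that difference alone is what can change appearance when σa and σs are held fixed.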

Future work should address other important questions:

∙

If identical rendering parameters are used for different shapes, we could assess how consistent observer responses are across different shapes for a given material, which would help us understand the limits of appearance constancy and, specifically, translucency constancy. For instance, it has been demonstrated previously that gloss constancy is limited, and an identical material presented in different shapes does not look equally reflective [25,35]. This could eventually reveal whether observers assess the appearance of a material or that of a specific object (see Section 4.2.6 in Ref. [9] for a broader discussion of this topic).

∙

While this work is limited to still images, future work should consider using dynamic visual stimuli. Translucency, as a second-order visual attribute that involves a complex analysis of interactions among object, scene, and illumination [15], can be affected by motion. Previous works have demonstrated that motion affects the perception of color transparency [6] and facilitates the distinction between transparent and opaque materials [31], and that human observers often rely on motion in the translucency assessment process when they are permitted to do so [14].

∙

The question regarding the hypothetical maximum of translucency remains open, and future work should disambiguate this issue. One potential way of achieving this goal is to fix the opposite extreme with a perfectly opaque material and to increase translucency gradually in small steps, investigating how the perceptual distances from the opaque extremum and the respective image cues change.
