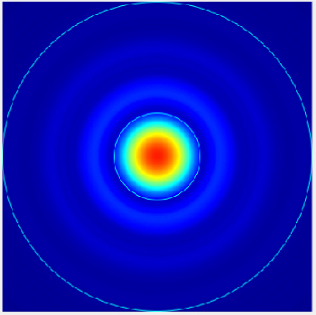

Image compression is an essential technology in image processing because it reduces the storage required for images and video, whose volume keeps growing. Deep learning-based image compression has made significant progress and, in some cases, surpasses traditional codecs. Current methods employ autoencoders, typically built from convolutional neural networks, to map input images to lower-dimensional latent spaces for compression. However, these approaches often overlook low-frequency information, which leads to sub-optimal compression performance. To address this challenge, this study proposes a novel image compression technique, Transformer and Convolutional Dual Channel Networks (TCDCN), which extracts both edge detail and low-frequency information and thereby balances high- and low-frequency compression. TCDCN uses a variational autoencoder architecture with parallel stacked transformer and convolutional networks to learn a compact representation of the input image through end-to-end training. This content-adaptive transform captures low-frequency information dynamically, improving compression efficiency. Compared with the classic JPEG method, our model reduces the Bjøntegaard Delta rate (BD-rate) by up to 19.12% and 18.65% on the Kodak and CLIC test datasets, respectively. It also outperforms state-of-the-art solutions by margins of 0.47% and 0.74%, further improving encoding efficiency. These results show that our approach extends the capabilities of existing techniques and mark a step forward in learned image compression.
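The BD-rate figures above summarize the average bitrate difference between two rate-distortion curves at equal quality. The sketch below follows the commonly used Bjøntegaard procedure: fit a cubic polynomial of log-rate against quality, integrate both fits over the overlapping quality range, and convert the mean log difference to a percentage. It assumes PSNR as the quality metric and at least four rate-distortion points per codec. It is an illustrative assumption, not the authors' evaluation code, and the helper names (`bd_rate`, `_fit_cubic`, `_solve`, `_integral`) are hypothetical.

```python
import math


def _solve(matrix, rhs):
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    a = [list(row) + [rhs[i]] for i, row in enumerate(matrix)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= factor * a[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = a[r][n] - sum(a[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / a[r][r]
    return x


def _fit_cubic(xs, ys):
    """Least-squares cubic y ~ c0 + c1*t + c2*t^2 + c3*t^3, with t = x - mean(x) for conditioning."""
    shift = sum(xs) / len(xs)
    ts = [x - shift for x in xs]
    # Normal equations (A^T A) c = A^T y, where A[i][k] = t_i ** k.
    ata = [[sum(t ** (j + k) for t in ts) for k in range(4)] for j in range(4)]
    aty = [sum((t ** j) * y for t, y in zip(ts, ys)) for j in range(4)]
    return _solve(ata, aty), shift


def _integral(coeffs, shift, lo, hi):
    """Integrate the fitted cubic over x in [lo, hi] using its antiderivative."""
    def antiderivative(x):
        t = x - shift
        return sum(c * t ** (k + 1) / (k + 1) for k, c in enumerate(coeffs))
    return antiderivative(hi) - antiderivative(lo)


def bd_rate(rate_ref, psnr_ref, rate_test, psnr_test):
    """Bjontegaard delta rate in percent; negative means the test codec needs fewer bits."""
    if min(len(rate_ref), len(rate_test)) < 4:
        raise ValueError("need at least 4 rate-distortion points per codec")
    c_ref, s_ref = _fit_cubic(psnr_ref, [math.log(r) for r in rate_ref])
    c_test, s_test = _fit_cubic(psnr_test, [math.log(r) for r in rate_test])
    lo = max(min(psnr_ref), min(psnr_test))  # overlapping PSNR interval
    hi = min(max(psnr_ref), max(psnr_test))
    if hi <= lo:
        raise ValueError("PSNR ranges do not overlap")
    avg_diff = (_integral(c_test, s_test, lo, hi) - _integral(c_ref, s_ref, lo, hi)) / (hi - lo)
    return (math.exp(avg_diff) - 1.0) * 100.0
```

As a usage example with made-up values (not data from the paper), a test codec that needs exactly 90% of the reference bitrate at every PSNR point yields a BD-rate of about -10%: `print(round(bd_rate([0.25, 0.5, 0.75, 1.0], [30, 32, 34, 36], [0.225, 0.45, 0.675, 0.9], [30, 32, 34, 36]), 2))` prints `-10.0`. Under this sign convention, a 19.12% improvement over JPEG corresponds to a BD-rate of -19.12%. Natural log and `exp` are used here; implementations that use `log10` and `10 **` give the same result.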

Jianhua Hu, Guixiang Luo, Zhangjiang Yuan, Weimei Wu, Mengjun Ding, Wei Nie, Yaohua Liang, Xiangfei Feng, "Efficient Image Compression with Transformer and Convolutional Dual Channel Networks," in Journal of Imaging Science and Technology, 2025, pp. 1–13, https://doi.org/10.2352/J.ImagingSci.Technol.2025.69.4.040504
