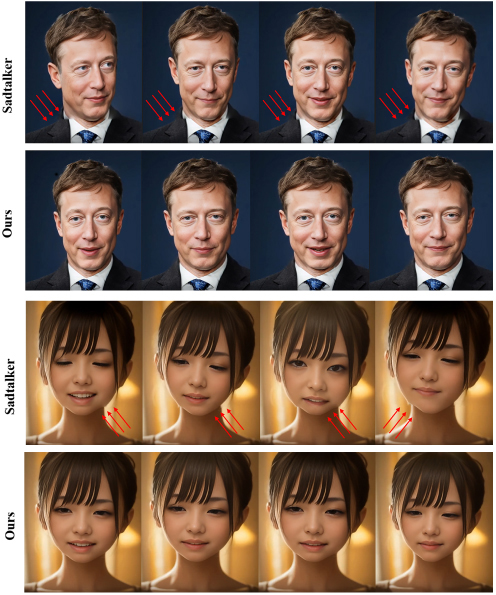

In audio-driven facial animation, most existing research focuses on head animation, and few methods can generate full-body videos. The few approaches that do produce full-body videos usually animate only the face, which leads to the common problem of head–body separation. This disjointedness seriously undermines overall visual coherence and the naturalness of human–computer interaction. To overcome these limitations, we introduce SynPoseVAE, an enhanced version of the PoseVAE model that incorporates body-related information. SynPoseVAE uses a bottom-up human pose estimation method to detect human keypoints. This gives it detailed body pose data, which it incorporates into pose prediction, thereby addressing head–body separation. Additionally, we design a new loss function that accounts for both head and body poses. This loss acts as a regulator that strengthens the coordination between head and body movements. Optimizing against it significantly reduces head–body separation and makes the generated animations more natural and coherent. Experimental results show that SynPoseVAE outperforms traditional methods. It generates highly coordinated full-body animations and greatly improves the quality of human–computer interaction in audio-driven facial animation synthesis.
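The abstract does not give the form of the head–body loss. The following is a minimal sketch under stated assumptions. It assumes that per-frame head and body orientations are each represented as (yaw, pitch, roll) angles. It also assumes that a joint loss could be a weighted sum of three parts: a head term, a body term, and a coordination term that penalizes errors in the head's orientation *relative to* the body. The function names, the weights `w_head`, `w_body` and `w_sync`, and the coordination term itself are hypothetical illustrations, not the authors' formulation.

```python
from typing import Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error between two equal-length pose vectors."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def head_body_pose_loss(
    pred_head: Sequence[float],
    gt_head: Sequence[float],
    pred_body: Sequence[float],
    gt_body: Sequence[float],
    w_head: float = 1.0,  # hypothetical weight for the head term
    w_body: float = 1.0,  # hypothetical weight for the body term
    w_sync: float = 0.5,  # hypothetical weight for the coordination term
) -> float:
    """Hypothetical joint head/body pose loss for a single frame.

    Assumes head and body poses share the same parameterization
    (e.g. yaw, pitch, roll), so their difference is meaningful.
    """
    if len(pred_head) != len(pred_body):
        raise ValueError("head and body poses must have the same dimension")

    head_term = mse(pred_head, gt_head)
    body_term = mse(pred_body, gt_body)

    # Coordination term: compare the head's orientation relative to the
    # body in the prediction against the same relative orientation in the
    # ground truth. A head that drifts independently of the body is
    # penalized even if the body itself is predicted correctly.
    pred_rel = [h - b for h, b in zip(pred_head, pred_body)]
    gt_rel = [h - b for h, b in zip(gt_head, gt_body)]
    sync_term = mse(pred_rel, gt_rel)

    return w_head * head_term + w_body * body_term + w_sync * sync_term


if __name__ == "__main__":
    zeros = [0.0, 0.0, 0.0]
    # Head yawed away from a still body: head and coordination terms both fire.
    print(head_body_pose_loss([1.0, 0.0, 0.0], zeros, zeros, zeros))
    # Head and body shifted together: the coordination term stays at zero.
    print(head_body_pose_loss([1.0, 0.0, 0.0], zeros, [1.0, 0.0, 0.0], zeros))
```

In this sketch the coordination term works on the head-minus-body difference. An error that moves head and body together therefore adds nothing to it, while a head that moves independently of the body is penalized twice: once in the head term and once in the coordination term.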

Junyi Gao, Xiuting Tao, Yigang Wang, "SynPoseVAE: A Multimodal Fusion Framework for Audio-Driven Full-Body Animation Synthesis," in Journal of Imaging Science and Technology, 2026, pp. 1–8, https://doi.org/10.2352/J.ImagingSci.Technol.2026.70.1.010404
