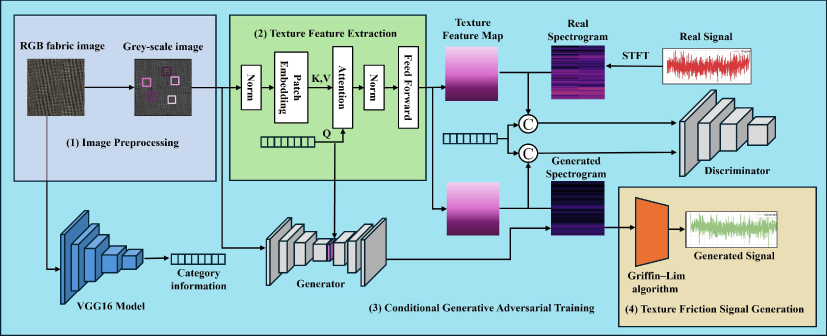

Generating tactile data from visual input is important for tactile rendering, virtual reality, and robotics. It avoids the laborious manual collection of tactile data and sidesteps the limitations of physical contact. Current methods, however, struggle to produce consistent and reliable results across different categories, which limits their practical use. To address this problem, the authors propose T-CGAN, a cross-modal generation framework built on the FrictGAN architecture. T-CGAN adds a conditional constraint on image category and a texture feature extraction step, and combines them with an L1 loss to regulate the generation process. The framework generates spectrograms of friction coefficient signals from fabric texture images and then converts these spectrograms into one-dimensional friction coefficient signals using the Griffin–Lim algorithm. The authors use root mean square error and mean absolute error to quantify how far the generated spectrograms and reconstructed signals deviate from their corresponding ground truths, and they compare the results with existing methods. The experimental results indicate that T-CGAN outperforms existing techniques in both accuracy and stability.
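The two evaluation metrics named in the abstract can be illustrated with a minimal sketch. The abstract does not say how the authors aggregate or normalize the errors, so the code below assumes the simplest reading: each signal (or flattened spectrogram) is an equal-length sequence of numbers, and the error is averaged over all elements. The function names `rmse` and `mae` and the sample values are hypothetical.

```python
import math


def _check(pred, truth):
    # Both sequences must be non-empty and aligned element by element.
    if len(pred) != len(truth) or len(pred) == 0:
        raise ValueError("inputs must be non-empty and of equal length")


def rmse(pred, truth):
    """Root mean square error between two equal-length sequences."""
    _check(pred, truth)
    squared = sum((p - t) ** 2 for p, t in zip(pred, truth))
    return math.sqrt(squared / len(pred))


def mae(pred, truth):
    """Mean absolute error between two equal-length sequences."""
    _check(pred, truth)
    return sum(abs(p - t) for p, t in zip(pred, truth)) / len(pred)


# Made-up values standing in for a reconstructed friction coefficient
# signal and its ground truth; they are not data from the paper.
generated = [0.0, 0.5, 1.0, 0.5]
reference = [0.0, 1.0, 1.0, 0.0]

print(f"RMSE = {rmse(generated, reference):.4f}")
print(f"MAE  = {mae(generated, reference):.4f}")
```

Because RMSE squares each deviation before averaging, it penalizes a few large errors more heavily than MAE does, which is one reason the two are often reported together.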

Hui Yu, Yigang Wang, "T-CGAN: A Transformer-Embedded, Category-Conditioned Generative Adversarial Network," in Journal of Imaging Science and Technology, 2026, pp. 1–9, https://doi.org/10.2352/J.ImagingSci.Technol.2026.70.1.010403
