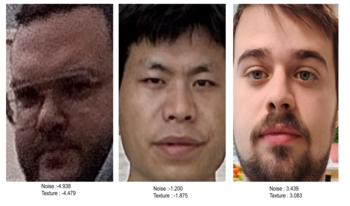

With the growing adoption of multimodal large language models (MLLMs) for image quality assessment (IQA), Vision–Language IQA systems such as DeQA-Score have demonstrated strong correlation with human judgments on natural images. However, current MLLM-based quality predictors primarily provide global image quality scores and therefore cannot quantitatively assess specific perceptual attributes such as noise, texture, contrast, and color, which are essential for explainability and camera tuning. In this work, we extend DeQA-Score from global Mean Opinion Score (MOS)-based quality prediction to attribute-specific, Just Objectionable Difference (JOD)-based portrait assessment. Our study investigates how a MOS-trained model behaves when exposed to pairwise-annotated data and how lightweight adaptation can achieve perceptual alignment at the attribute level. Using a controlled mannequin dataset, we analyze the model's baseline behavior under different prompt strategies and spatial input configurations, revealing limited attribute sensitivity. We then apply LoRA fine-tuning on realistic portrait data annotated for texture and noise quality. The adapted model achieves correlations of SRCC = 0.91/0.93 and PLCC = 0.91/0.90 with JOD scores for noise and texture, respectively. Subsequent analysis confirms that the vision encoder is the main contributor to perceptual learning. The proposed framework establishes an efficient path for converting global VLM-based IQA models into attribute-aware, perceptually aligned assessors for real-world photography.
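The two figures of merit reported above, SRCC (Spearman rank-order correlation coefficient) and PLCC (Pearson linear correlation coefficient), can be illustrated with a minimal sketch. This is not the authors' evaluation code; the function names `pearson`, `average_ranks`, and `spearman` are hypothetical, and the scores at the bottom are invented placeholders rather than data from the paper.

```python
import math


def pearson(x, y):
    """PLCC: linear correlation between two equally long score lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two lists of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """SRCC: Pearson correlation computed on the ranks of the scores."""
    return pearson(average_ranks(x), average_ranks(y))


# Placeholder values for illustration only (not results from the paper).
predicted_scores = [1.0, 2.0, 3.0, 4.0]
jod_scores = [1.0, 4.0, 9.0, 16.0]
srcc = spearman(predicted_scores, jod_scores)
plcc = pearson(predicted_scores, jod_scores)
```

SRCC depends only on the ordering of the predictions, so it rewards a model that ranks images correctly even when its scores are not linearly related to JOD, whereas PLCC also penalizes departures from a linear relationship.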

Yujin Cho, Minh Khang Tran, Benoit Pochon, Jean-Michel Morel, Gabriele Facciolo, Sira Ferradans, "Adapting DeQA-score for Attribute-specific Portrait Quality Assessment" in Electronic Imaging, 2026, pp 179-1 - 179-8, https://doi.org/10.2352/EI.2026.38.12.GENAI-179
