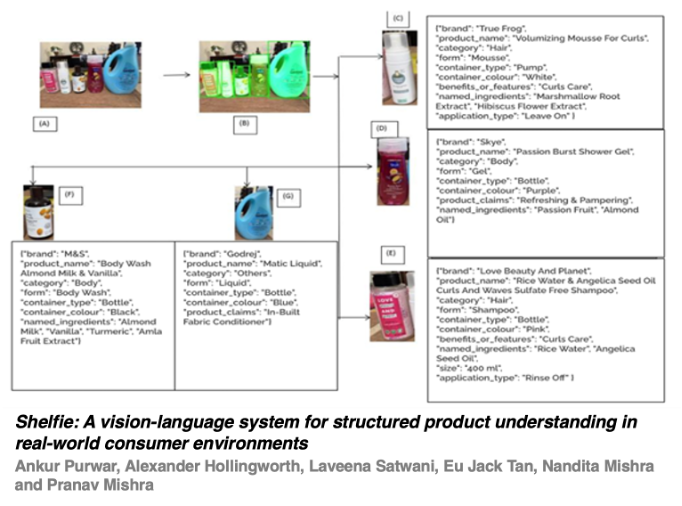

Personalized consumer experiences increasingly depend on understanding actual product usage in everyday settings. We present Shelfie, a consumer-centric vision-language system that extracts structured product metadata from user-submitted images of lifestyle care products arranged in real-world contexts such as consumer shelves, countertops, and vanity spaces. Unlike conventional systems developed for controlled retail environments and dependent on barcode scanning, Shelfie is designed to operate effectively in cluttered, unconstrained home settings and is fully barcode-independent. Shelfie integrates object detection, instance segmentation, and large language model (LLM)-based reasoning to infer rich metadata for each visible product: brand, name, category, form, package type, key ingredients, benefits, and size. The system is trained and validated on a diverse user-sourced dataset spanning personal care to home lifestyle products, and it demonstrates strong generalization, producing high-accuracy, highly structured output across packaging styles and product categories. Shelfie establishes a vision-language foundation for real-world, consumer-facing product understanding and discovery systems. It can enable downstream applications such as community-driven recommendation systems, ingredient sensitivity tracking, and in-depth consumer behavior analysis, all while keeping consumer habits, needs, and convenience at the center. By bridging visual input with structured metadata output, Shelfie can support more informed, personalized decisions through peer-driven insights.
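To make the "structured metadata output" concrete, the following is a minimal sketch of what a per-product record with the eight attributes listed above might look like. It is not the authors' schema: the class name `ProductMetadata`, the helper `parse_product_metadata`, the field types, and the sample values are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class ProductMetadata:
    """Hypothetical record holding the eight attributes named in the abstract."""
    brand: Optional[str] = None
    name: Optional[str] = None
    category: Optional[str] = None
    form: Optional[str] = None
    package_type: Optional[str] = None
    key_ingredients: List[str] = field(default_factory=list)
    benefits: List[str] = field(default_factory=list)
    size: Optional[str] = None


def parse_product_metadata(raw_json: str) -> ProductMetadata:
    """Parse one JSON object (for example, a model response) into a record.

    Unknown keys are dropped; missing keys keep their defaults.
    """
    data = json.loads(raw_json)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    known = {f.name for f in fields(ProductMetadata)}
    return ProductMetadata(**{k: v for k, v in data.items() if k in known})


# Made-up sample values, not data from the paper.
example = parse_product_metadata(
    '{"brand": "ExampleBrand", "name": "Daily Shampoo", "form": "liquid", '
    '"key_ingredients": ["glycerin"], "confidence": 0.9}'
)
```

Leaving unreadable attributes as `None` or an empty list, rather than guessing, is one plausible way to represent products that are only partly visible in a cluttered image.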

Ankur Purwar, Alex Hollingworth, Laveena Satwani, Eu Jack Tan, Nandita Mishra, Pranav Mishra, "Shelfie: A Vision-language System for Structured Product Understanding in Real-world Consumer Environments" in Electronic Imaging, 2026, pp 177-1 - 177-9, https://doi.org/10.2352/EI.2026.38.12.GENAI-177
