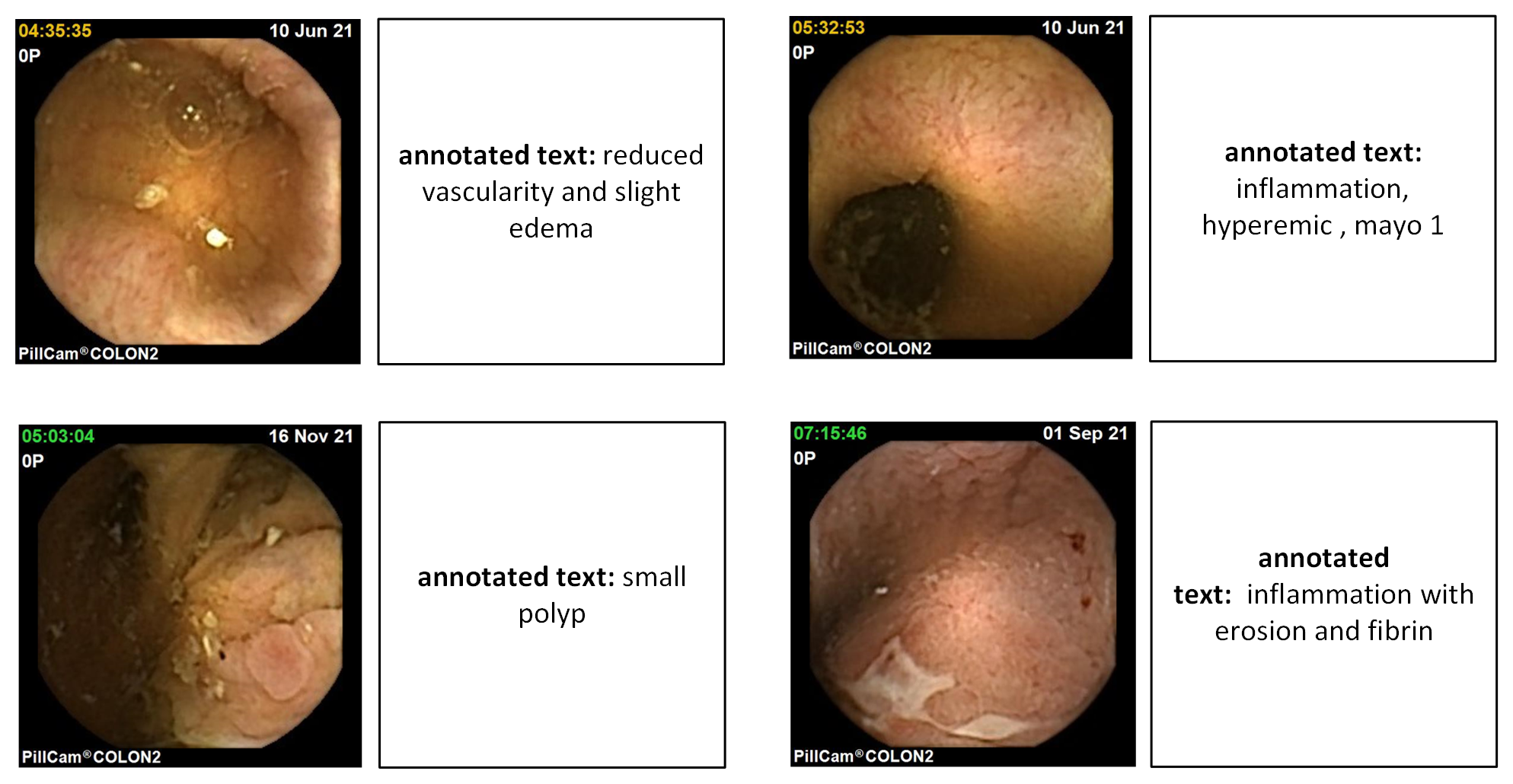

Wireless Capsule Endoscopy (WCE) is a minimally invasive diagnostic tool for examining the gastrointestinal tract, but interpreting the large volume of image data it produces demands extensive manual effort and expert knowledge. Deep learning offers a promising way to automate WCE data analysis, but training robust models is hindered by the scarcity of large-scale, high-quality labeled data in the WCE domain. This study explores Contrastive Language-Image Pre-training (CLIP), a vision-language model pre-trained on extensive image-text pairs, as a way to address these challenges in deep learning for WCE. We focus on caption retrieval and pathology classification, using CAPTIV8, a multi-modal WCE dataset of image and diagnostic-text pairs. After customizing the dataset for deep learning tasks, we conducted experiments comparing CLIP with state-of-the-art vision models. The results demonstrated that CLIP outperforms vision-only models, particularly in small-sample regimes such as one-shot and few-shot setups. By replacing the original CLIP loss with a KL-divergence loss, we further enabled the model to handle multiple positive pairs within a mini-batch during training, better attuning learning to this specific medical domain.
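The abstract does not spell out the exact KL-divergence formulation, so the following is only a minimal NumPy sketch of the general idea, not the authors' implementation. It assumes that image-text pairs in a mini-batch sharing an identical diagnostic caption count as mutual positives, and that the target distribution spreads probability uniformly over them. The names `soft_targets` and `caption_ids` and the temperature of 0.07 are hypothetical choices for illustration.

```python
import numpy as np


def log_softmax(x, axis=-1):
    # Numerically stable log-softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))


def l2_normalize(x):
    # Project embeddings onto the unit sphere so dot products are cosine similarities.
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def clip_loss(img, txt, temperature=0.07):
    """Standard symmetric CLIP loss: only the diagonal pair is a positive."""
    logits = l2_normalize(img) @ l2_normalize(txt).T / temperature
    idx = np.arange(len(logits))
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()  # image -> text
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()  # text -> image
    return (loss_i2t + loss_t2i) / 2


def soft_targets(caption_ids):
    """Hypothetical targets: all pairs sharing a caption id are positives, weighted uniformly."""
    same = (caption_ids[:, None] == caption_ids[None, :]).astype(float)
    return same / same.sum(axis=1, keepdims=True)  # each row sums to 1


def kl_rows(p, log_q):
    # Mean over rows of KL(p || q); entries with p == 0 contribute 0.
    with np.errstate(divide="ignore"):
        log_p = np.where(p > 0, np.log(p), 0.0)
    return (p * (log_p - log_q)).sum(axis=1).mean()


def kl_clip_loss(img, txt, caption_ids, temperature=0.07):
    """Assumed KL variant: match softmax similarities to soft multi-positive targets."""
    logits = l2_normalize(img) @ l2_normalize(txt).T / temperature
    targets = soft_targets(caption_ids)  # symmetric, so it serves both directions
    loss_i2t = kl_rows(targets, log_softmax(logits, axis=1))
    loss_t2i = kl_rows(targets.T, log_softmax(logits.T, axis=1))
    return (loss_i2t + loss_t2i) / 2
```

When every caption in the batch is unique, the targets are one-hot and this KL loss reduces exactly to the standard CLIP cross-entropy. When two images share the same diagnostic text, standard CLIP still treats the duplicate caption as a negative for each image, whereas the soft targets in this sketch reward the model for scoring both matches highly.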

Lu Xu, Anuja Vats, Marius Pedersen, Kiran Raja, "Vision-language Learning for Wireless Capsule Endoscopy: Diagnostic Captioning with CLIP" in Electronic Imaging, 2026, pp 175-1 - 175-9, https://doi.org/10.2352/EI.2026.38.12.GENAI-175
