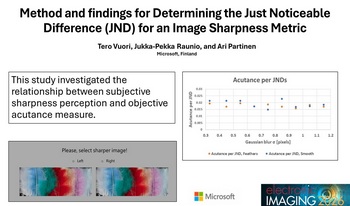

In camera product development, where the goal is to achieve the best possible image quality and user experience, it is necessary to use both objective and subjective test methods. Each has its own advantages and disadvantages. The goal of this study is to bring these methods closer together and to help users of objective tests understand what a test result means for the end user. Objective image quality measures are fast and efficient. They form the basis for, for example, camera product comparisons carried out on a daily basis. A comparison of two camera products is completed quickly, and the result is reliable and repeatable. Based on the results provided by the measure, camera products can be ranked easily. However, how large is the noticeable difference between two products for a user when such an objective measure is used? When is the difference significant? Does the user notice the measured difference at all? In this study, we sought answers to these questions for the acutance measure, which is used on a daily basis. We conducted numerous subjective tests in a controlled lab with carefully chosen stimuli. To include the effect of image content in the study, we used two test samples: an image with a lot of detail, and a test image with large flat areas and little detail. Based on the subjective results, we calculated the corresponding Just Noticeable Difference (JND) values for our acutance measure. The results differed slightly between image content with flat areas and image content with a lot of detail. This study presents methods and results for finding JND values for an objective acutance measure; the results can be generalized more broadly to all objective acutance measures and, in terms of the method, to all objective measures.

The Modulation Transfer Function (MTF) and the Noise Power Spectrum (NPS) characterize imaging system sharpness/resolution and noise, respectively. Both measures are based on linear system theory. However, they are routinely applied to scene-dependent systems that use non-linear, content-aware image signal processing. For such systems, MTFs and NPSs are derived inaccurately from traditional test charts containing edges, sinusoids, noise or uniform luminance signals, which are unrepresentative of natural scene signals. The dead leaves test chart delivers improved measurements from scene-dependent systems but still has its limitations. In this article, the authors validate novel scene-and-process-dependent MTF (SPD-MTF) and NPS (SPD-NPS) measures that characterize (i) system performance for a single scene, (ii) average real-world performance over many scenes or (iii) the level of system scene dependency. The authors also derive novel SPD-NPS and SPD-MTF measures using the dead leaves chart. They demonstrate that, for scene-dependent systems, the proposed measures are robust and preferable to current measures.
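For orientation, the conventional NPS that the abstract contrasts with the scene-dependent measures can be estimated from captures of a uniform luminance patch. The following is a minimal sketch of that textbook estimate only, not of the SPD-NPS proposed by the authors; the function name `noise_power_spectrum` and the `pixel_pitch` parameter are illustrative assumptions.

```python
import numpy as np

def noise_power_spectrum(patches, pixel_pitch=1.0):
    """Conventional 2D NPS estimate from uniform-patch captures.

    patches: array of shape (n_patches, ny, nx), each a capture of a
             nominally uniform area (assumed input layout).
    pixel_pitch: sampling interval, assumed equal in x and y.
    """
    patches = np.asarray(patches, dtype=float)
    _, ny, nx = patches.shape
    # Remove the mean of each patch so only the noise fluctuation remains.
    residual = patches - patches.mean(axis=(1, 2), keepdims=True)
    # Squared DFT magnitude of each patch, then average over patches.
    power = np.abs(np.fft.fft2(residual, axes=(1, 2))) ** 2
    return power.mean(axis=0) * pixel_pitch**2 / (nx * ny)

# Usage with synthetic white noise (hypothetical data, for illustration only).
rng = np.random.default_rng(0)
demo_patches = 100.0 + 2.0 * rng.standard_normal((16, 32, 32))
demo_nps = noise_power_spectrum(demo_patches)
```

With this normalization, integrating the NPS over spatial frequency returns the noise variance, which is a convenient sanity check. The estimate presumes the noise is stationary and independent of the signal, which is the linear-system assumption that the abstract identifies as problematic for content-aware processing.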

We suggest a method for sharpening an image or video stream without using convolution, as in unsharp masking, or deconvolution, as in constrained least-squares filtering. Instead, our technique is based on a local analysis of phase congruency and hence focuses on perceptually important details. The image is partitioned into overlapping tiles, and is processed tile by tile. We perform a Fourier transform for each of the tiles, and define congruency for each of the components in such a way that it is large when the component's neighbours are aligned with it, and small otherwise. We then amplify weak components with high phase congruency and reduce strong components with low phase congruency. Following this method, we avoid strengthening the Fourier components corresponding to sharp edges, while amplifying those details that underwent a slight or moderate defocus blur. The tiles are then seamlessly stitched. As a result, the image sharpness is improved wherever perceptually important details are present.
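The tile-wise procedure described above can be sketched as follows. This is a hedged illustration, not the authors' implementation: the abstract does not give the congruency formula, the gain rule, or the stitching scheme, so the 3x3 neighbourhood measure in `neighbour_congruency`, the median-based notion of "weak" and "strong" components, the `alpha` and `beta` parameters, and the window-weighted overlap-add are all assumptions made for the example.

```python
import numpy as np

def neighbour_congruency(F, eps=1e-12):
    """Assumed congruency: |sum of 3x3 neighbours| / sum of their magnitudes.

    Close to 1 when neighbouring Fourier components share a phase,
    close to 0 when their phases cancel.
    """
    num = np.zeros_like(F)
    den = np.zeros(F.shape)
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            shifted = np.roll(F, (dy, dx), axis=(0, 1))
            num += shifted
            den += np.abs(shifted)
    return np.abs(num) / (den + eps)

def sharpen_tile(tile, alpha=0.5, beta=0.3):
    """Boost weak, congruent components; damp strong, incongruent ones."""
    F = np.fft.fft2(tile)
    mag = np.abs(F)
    c = neighbour_congruency(F)
    ref = np.median(mag) + 1e-12      # assumed reference for "weak" vs "strong"
    weak = ref / (mag + ref)          # near 1 for weak, near 0 for strong
    strong = 1.0 - weak
    gain = 1.0 + alpha * c * weak - beta * (1.0 - c) * strong
    gain[0, 0] = 1.0                  # leave the tile mean unchanged
    return np.real(np.fft.ifft2(F * gain))

def tile_starts(length, tile, step):
    """Start offsets of overlapping tiles that cover the whole axis."""
    starts = list(range(0, length - tile + 1, step))
    if starts[-1] != length - tile:
        starts.append(length - tile)
    return starts

def sharpen(image, tile=32, alpha=0.5, beta=0.3):
    """Process half-overlapping tiles and blend them with a smooth window."""
    image = np.asarray(image, dtype=float)
    h, w = image.shape                # both must be at least `tile`
    win1d = np.hanning(tile + 2)[1:-1]  # strictly positive Hann weights
    win = np.outer(win1d, win1d)
    out = np.zeros_like(image)
    weight = np.zeros_like(image)
    for y in tile_starts(h, tile, tile // 2):
        for x in tile_starts(w, tile, tile // 2):
            patch = image[y:y + tile, x:x + tile]
            out[y:y + tile, x:x + tile] += win * sharpen_tile(patch, alpha, beta)
            weight[y:y + tile, x:x + tile] += win
    return out / weight

# Usage on synthetic data (hypothetical input, for illustration only).
rng = np.random.default_rng(1)
demo_image = rng.random((80, 72))
demo_sharp = sharpen(demo_image)
```

Because the gain is real and depends only on magnitudes that are symmetric under frequency negation, each processed tile stays real-valued. Setting `alpha=0` and `beta=0` reduces the pipeline to an identity, which isolates the stitching step for testing.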