Solid-state optical sensors and solid-state cameras have established themselves as the imaging systems of choice for many demanding professional applications in the automotive, space, medical, scientific, and industrial domains. Their low power consumption, low noise, high resolution, high geometric fidelity, broad spectral sensitivity, and extremely high quantum efficiency have led to a number of revolutionary uses. ISS focuses on image sensing for consumer, industrial, medical, and scientific applications, as well as embedded image processing and pipeline tuning for these camera systems. The conference brings together researchers, scientists, and engineers working in these fields and provides the opportunity for quick publication of their work. Topics include, but are not limited to, research and applications in image sensors and detectors, camera/sensor characterization, ISP pipelines and tuning, image artifact correction and removal, image reconstruction, color calibration, image enhancement, HDR imaging, light-field imaging, multi-frame processing, computational photography, 3D imaging, 360/cinematic VR cameras, camera image quality evaluation and metrics, novel imaging applications, imaging system design, and deep learning applications in imaging.

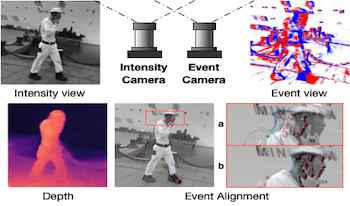

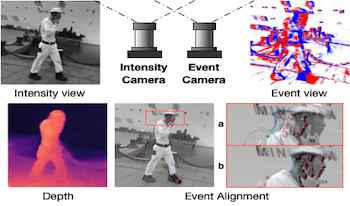

Event cameras are novel bio-inspired vision sensors that output pixel-level intensity changes with microsecond accuracy, high dynamic range, and low power consumption. Despite these advantages, event cameras cannot be directly applied to computational imaging tasks because they cannot capture high-quality intensity images and events simultaneously. This paper aims to connect a standalone event camera and a modern intensity camera so that applications can take advantage of both sensors. We establish this connection through a multi-modal stereo matching task. We first convert events to a reconstructed image and extend existing stereo networks to this multi-modal setting. We propose a self-supervised method to train the multi-modal stereo network without using ground truth disparity data. A structure loss calculated on image gradients enables self-supervised learning on such multi-modal data. Exploiting the internal stereo constraint between views with different modalities, we introduce general stereo loss functions, including a disparity cross-consistency loss and an internal disparity loss, which lead to improved performance and robustness compared to existing approaches. Our experiments demonstrate the effectiveness of the proposed method, especially the proposed general stereo loss functions, on both synthetic and real datasets. Finally, we shed light on employing the aligned events and intensity images in downstream tasks such as video interpolation.
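
The abstract does not give the exact form of the structure loss, so the following is only a minimal NumPy sketch of the general idea: compare gradient magnitudes rather than raw intensities, so that a brightness or contrast mismatch between the two modalities does not dominate the error. The function names, the forward-difference gradients, the per-image normalization, and the convention that a left pixel at column x corresponds to the right pixel at column x - d are all assumptions made for illustration, not the authors' implementation.

```python
import numpy as np

def image_gradients(img):
    """Forward-difference gradients along x and y (zero at the last column/row)."""
    img = np.asarray(img, dtype=np.float64)
    gx = np.zeros_like(img)
    gy = np.zeros_like(img)
    gx[:, :-1] = img[:, 1:] - img[:, :-1]
    gy[:-1, :] = img[1:, :] - img[:-1, :]
    return gx, gy

def warp_by_disparity(right, disparity):
    """Resample the right view at column x - d(y, x), row by row, with linear interpolation."""
    right = np.asarray(right, dtype=np.float64)
    h, w = right.shape
    xs = np.arange(w, dtype=np.float64)
    warped = np.empty((h, w), dtype=np.float64)
    for y in range(h):
        src = np.clip(xs - disparity[y], 0, w - 1)  # clamp samples to the image border
        warped[y] = np.interp(src, xs, right[y])
    return warped

def structure_loss(left, right, disparity, eps=1e-6):
    """Mean absolute difference of normalized gradient magnitudes (assumed form)."""
    warped = warp_by_disparity(right, disparity)
    gx_l, gy_l = image_gradients(left)
    gx_w, gy_w = image_gradients(warped)
    mag_l = np.hypot(gx_l, gy_l)
    mag_w = np.hypot(gx_w, gy_w)
    # Per-image normalization removes a global contrast difference between modalities.
    mag_l = mag_l / (mag_l.max() + eps)
    mag_w = mag_w / (mag_w.max() + eps)
    return float(np.mean(np.abs(mag_l - mag_w)))

# Toy usage: the "right" view is the left view shifted by 3 pixels at half contrast,
# standing in for a reconstructed event image with a different intensity scale.
rng = np.random.default_rng(0)
left = rng.random((32, 48))
right = 0.5 * np.roll(left, -3, axis=1)
loss_true = structure_loss(left, right, np.full(left.shape, 3.0))
loss_zero = structure_loss(left, right, np.zeros(left.shape))
```

In this toy setup the loss at the true disparity is lower than at zero disparity even though the two views differ in contrast, which is the property that makes a gradient-based term usable as a self-supervised signal across modalities. A trainable version would use a differentiable warp inside a deep learning framework.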

Image captioning generates text that describes scenes from input images. Existing methods have been developed mainly for high-quality images taken in clear weather. However, in bad weather conditions, such as heavy rain, snow, and dense fog, poor visibility caused by rain streaks, rain accumulation, and snowflakes seriously degrades image quality. This hinders the extraction of useful visual features and deteriorates image captioning performance. To address this issue, this study introduces a new encoder for captioning heavy rain images. The central idea is to transform output features extracted from heavy rain input images into semantic visual features associated with words and sentence context. To achieve this, a target encoder is initially trained in an encoder-decoder framework to associate visual features with semantic words. Subsequently, the objects in a heavy rain image are rendered visible by using an initial reconstruction subnetwork (IRS) based on a heavy rain model. The IRS is then combined with a semantic visual feature matching subnetwork (SVFMS) to match the output features of the IRS with the semantic visual features of the pretrained target encoder. The proposed encoder is based on the joint learning of the IRS and SVFMS. It is trained in an end-to-end manner and then connected to the pretrained decoder for image captioning. Experiments demonstrate that the proposed encoder can generate semantic visual features associated with words even from heavy rain images, thereby increasing the accuracy of the generated captions.
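
The abstract does not specify which heavy rain model the IRS is built on. As an illustration only, the sketch below assumes one formulation often used for heavy rain synthesis, in which the observation combines rain streaks with a fog-like veiling term from rain accumulation: I = T * (J + S) + (1 - T) * A, where J is the clean image, S the streak layer, T the transmission map, and A the atmospheric light. The function name, the parameter names, and the default value of A are hypothetical.

```python
import numpy as np

def synthesize_heavy_rain(clean, streaks, transmission, airlight=0.9):
    """Composite a rainy observation under the assumed model I = T*(J + S) + (1 - T)*A.

    clean, streaks, transmission: arrays of the same shape with values in [0, 1].
    airlight: scalar atmospheric light A (hypothetical default).
    """
    clean = np.asarray(clean, dtype=np.float64)
    streaks = np.asarray(streaks, dtype=np.float64)
    transmission = np.asarray(transmission, dtype=np.float64)
    rainy = transmission * (clean + streaks) + (1.0 - transmission) * airlight
    return np.clip(rainy, 0.0, 1.0)  # keep the result in the valid intensity range

# Toy usage: a flat gray scene, sparse bright streaks, and moderate veiling.
rng = np.random.default_rng(0)
scene = np.full((8, 8), 0.3)
streak_layer = 0.5 * (rng.random((8, 8)) > 0.9)
veil = np.full((8, 8), 0.6)
rainy_scene = synthesize_heavy_rain(scene, streak_layer, veil)
```

Under this assumed model, a reconstruction network has to estimate and undo both the additive streak layer and the veiling term before the features become usable for captioning.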

Traditionally, the appearance of an object in an image is edited to elicit a preferred perception. However, the editing method might be arbitrary and might not consider the human perception mechanism. In this study, the authors explored image-based leather “authenticity” editing using an estimation model that considers a perception mechanism derived in their previous work. They created rendered leather images by emphasizing or suppressing the image properties corresponding to “authenticity.” Subsequently, they performed two subjective experiments, one using fully edited images and another using partially edited images whose specular reflection intensity was held constant. Participants observed the rendered leather images and evaluated the differences in perceived “authenticity.” The authors found that the perception of “authenticity” could be changed by manipulating the intensity of specular reflection and the texture (grain and surface irregularity) in the images. The results of this study could be used to tune the properties of images to make them more appealing.