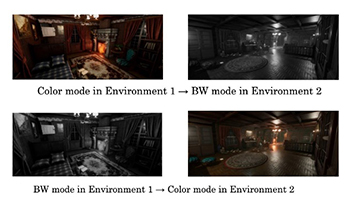

Diversity in visual perception, particularly as it relates to age and color vision type, has been widely studied in fields such as cognitive psychology and human-computer interaction. Variations in color perception, such as those between dichromatic and trichromatic vision, can significantly influence object-finding tasks and overall performance in virtual environments. Recent studies suggest that Virtual Reality (VR) environments can be designed for universal accessibility, yet how individuals process visual cues in these environments remains underexplored. This study investigates how individuals with diverse visual perception perform object-finding tasks in a VR environment. In a pilot study, participants completed tasks in two types of VR environments: one rendered in full color and the other in grayscale. The aim was to explore how different visual settings might support future research involving a more diverse participant group. Although preliminary results indicated better performance in the color environment across participants, the primary focus was to assess the suitability of the experimental design and environment setup for investigating sensory interactions in VR. Understanding how visual differences affect performance can help designers create more inclusive virtual spaces for uses such as education, gaming, and training. This interdisciplinary approach, which combines elements of psychology, sensory studies, and technology design, suggests a more inclusive framework for addressing diversity in VR environments. By systematically analyzing visual perception, the study both advances academic understanding and provides a practical roadmap for implementing inclusive VR solutions across industries.

Visual saliency estimation aims to identify and localize the areas of images and videos that attract the attention of a human observer. In this work, a novel approach for estimating visual saliency in omnidirectional images is proposed. It is based on the identification of low-level image feature descriptors (e.g., the presence of texture and edges) coupled with information about the local depth of the scene. To evaluate the performance of the proposed method, the estimated saliency map is compared with the available ground truth through two objective metrics: the correlation coefficient and the Kullback-Leibler divergence. The analysis of the results confirms the validity of the proposed approach.
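
The two metrics named above can be illustrated with a minimal sketch. It assumes the estimated and ground-truth saliency maps are non-negative 2-D arrays of the same shape, and it uses the formulations common in saliency benchmarking: Pearson correlation over pixels, and Kullback-Leibler divergence of the ground truth from the estimate after normalizing each map to sum to one. The function names and the `eps` smoothing constant are illustrative assumptions, not taken from the work itself, and no projection-specific weighting for omnidirectional images is applied.

```python
import numpy as np


def correlation_coefficient(pred, gt):
    """Pearson linear correlation between two saliency maps (range [-1, 1])."""
    pred = np.asarray(pred, dtype=np.float64).ravel()
    gt = np.asarray(gt, dtype=np.float64).ravel()
    # Center both maps so the score reflects co-variation, not mean level.
    pred = pred - pred.mean()
    gt = gt - gt.mean()
    denom = np.sqrt((pred ** 2).sum() * (gt ** 2).sum())
    # A constant map has zero variance; return 0.0 instead of dividing by zero.
    return float((pred * gt).sum() / denom) if denom > 0 else 0.0


def kl_divergence(pred, gt, eps=1e-12):
    """KL divergence of the ground-truth distribution from the estimated one.

    Both maps are normalized to sum to one. `eps` is an assumed smoothing
    constant that avoids log(0) and division by zero.
    """
    pred = np.asarray(pred, dtype=np.float64).ravel()
    gt = np.asarray(gt, dtype=np.float64).ravel()
    p = gt / (gt.sum() + eps)      # ground-truth distribution
    q = pred / (pred.sum() + eps)  # estimated distribution
    return float(np.sum(p * np.log(eps + p / (q + eps))))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gt_map = rng.random((8, 16))          # hypothetical ground-truth map
    est_map = 0.5 * gt_map + 0.5 * rng.random((8, 16))  # hypothetical estimate
    print("CC :", correlation_coefficient(est_map, gt_map))
    print("KLD:", kl_divergence(est_map, gt_map))
```

A higher correlation coefficient and a lower Kullback-Leibler divergence both indicate closer agreement between the estimate and the ground truth; identical maps give a correlation of 1 and a divergence of approximately 0.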