This paper introduces a framework for generating high-quality images from “visual sentences” extracted from video sequences. By combining a lightweight autoregressive model with a Vector Quantized Generative Adversarial Network (VQGAN), our approach achieves a favorable trade-off between computational efficiency and image fidelity. Unlike conventional methods that require substantial computational resources, the proposed framework captures sequential patterns in partially annotated frames and synthesizes coherent, contextually accurate images. Empirical results show that our method attains state-of-the-art performance on several benchmarks while reducing inference overhead, making it well suited to real-time and resource-constrained environments. We also explore its applicability to medical image analysis, where it performs denoising, brightness adjustment, and segmentation. Together, these contributions balance performance and efficiency and point toward scalable, adaptive image generation across diverse multimedia domains.

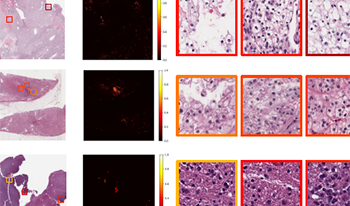

Multi-modal learning integrates visual and textual data, but applying it to histopathology image and text analysis remains challenging, particularly for large, high-resolution images such as gigapixel Whole Slide Images (WSIs). Current methods typically rely on manual region labeling or multi-stage learning to assemble local representations (e.g., patch-level) into global features (e.g., slide-level), and there is no effective way to integrate multi-scale image representations with text data in a single end-to-end process. In this study, we introduce Multi-Level Text-Guided Representation End-to-End Learning (mTREE), a text-guided approach that captures multi-scale WSI representations by using the accompanying pathology text. mTREE combines two steps in a unified, end-to-end learning framework: the localization of key areas (“global-to-local”) and the development of a WSI-level image-text representation (“local-to-global”). In this model, textual information serves a dual purpose: first, it functions as an attention map that identifies key areas; second, it acts as a conduit for integrating textual features into the overall representation of the image. We demonstrate the effectiveness of mTREE through quantitative analyses on two image-related tasks, classification and survival prediction, where it outperforms the baselines. Code and trained models are available at https://github.com/hrlblab/mTREE.
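
To make the dual role of the text concrete, the following is a minimal sketch, not the mTREE implementation. It assumes that patch features and a text embedding already live in a shared space, scores patches by scaled dot product, keeps the `top_k` highest-scoring patches (“global-to-local”), and pools them with renormalized attention weights into a slide-level vector (“local-to-global”). The function name `text_guided_pooling`, the scoring rule, and the toy data are all illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def text_guided_pooling(patch_feats, text_feat, top_k):
    """Hypothetical helper: text-guided patch selection and pooling.

    patch_feats: list of N patch feature vectors, each of length D
    text_feat:   text embedding of length D (assumed to share the space)
    top_k:       number of key patches to keep
    """
    dim = len(text_feat)
    # Global-to-local: the text embedding scores every patch, and the
    # softmax of these scores acts as an attention map over the slide.
    scores = [
        sum(p * t for p, t in zip(patch, text_feat)) / math.sqrt(dim)
        for patch in patch_feats
    ]
    attn = softmax(scores)
    # Keep the indices of the top_k most attended patches.
    key_idx = sorted(range(len(attn)), key=lambda i: attn[i], reverse=True)[:top_k]
    # Local-to-global: renormalize attention over the kept patches and
    # pool them into a single slide-level feature vector.
    norm = sum(attn[i] for i in key_idx)
    slide_feat = [
        sum(attn[i] / norm * patch_feats[i][d] for i in key_idx)
        for d in range(dim)
    ]
    return key_idx, attn, slide_feat


# Toy example: four 2-D "patches" and a text embedding along the first axis.
patches = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
text = [1.0, 0.0]
key_idx, attn, slide_feat = text_guided_pooling(patches, text, top_k=2)
```

In this toy case the two patches most aligned with the text embedding are selected, and the pooled vector is a convex combination of them. In an end-to-end model the hard top-k selection would need a differentiable or otherwise trainable counterpart; this sketch does not address that.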