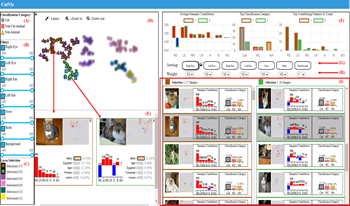

Given a picture classified as a Persian cat by an AI model, users may ask questions such as, “What are the contributions of the eyes and ears to the classification result?” or “Which features contribute the most?” While existing post-hoc XAI methods effectively explain model predictions at the pixel or patch level, they are limited in directly quantifying the contributions of human-interpretable semantic features. In this paper, we propose a visual analytics approach for feature-level interpretation of image classification results. Our contributions are twofold. First, we introduce a semantic contribution quantification method that builds upon existing pixel-level attribution techniques (e.g., Layer-wise Relevance Propagation, Grad-CAM). Specifically, we aggregate and normalize pixel-level relevance scores over predefined semantic regions (such as eyes, ears, and body) to compute comparable contribution scores for each semantic feature within an image. Second, we present an interactive visual interface that leverages these quantified semantic feature contributions to support exploration, comparison, and analysis of AI outputs across image collections. Through illustrative scenarios and expert feedback, we demonstrate that our approach provides an intuitive, scalable, and semantically meaningful means to interpret image classification explanations.
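The aggregation step described above can be illustrated with a minimal sketch. It assumes the pixel-level relevance map (for example from Grad-CAM or LRP) and the boolean region masks are already available as NumPy arrays. The function name `semantic_contributions`, the choice to keep only positive relevance, and the normalization by the total relevance over all listed regions are illustrative assumptions, not details confirmed by the abstract.

```python
import numpy as np


def semantic_contributions(relevance, region_masks, positive_only=True):
    """Aggregate pixel-level relevance over semantic regions.

    relevance:     2-D array of per-pixel attribution scores.
    region_masks:  dict mapping a region name (e.g. "eyes") to a boolean
                   mask with the same shape as `relevance`.
    positive_only: assumption for this sketch; negative relevance is
                   clipped to zero before aggregation.

    Returns a dict of scores that sum to 1 across the listed regions
    (or all zeros if no relevance falls inside any region).
    """
    rel = np.asarray(relevance, dtype=float)
    if positive_only:
        rel = np.clip(rel, 0.0, None)

    totals = {}
    for name, mask in region_masks.items():
        mask = np.asarray(mask, dtype=bool)
        if mask.shape != rel.shape:
            raise ValueError(f"mask '{name}' does not match the relevance map shape")
        # Sum the relevance of every pixel that belongs to this region.
        totals[name] = float(rel[mask].sum())

    denom = sum(totals.values())
    if denom == 0.0:
        return {name: 0.0 for name in totals}
    # Normalize so that scores are comparable across regions and images.
    return {name: total / denom for name, total in totals.items()}


# Toy 2x2 relevance map with two hypothetical regions.
toy_relevance = np.array([[1.0, 2.0], [3.0, 4.0]])
toy_masks = {
    "eyes": np.array([[True, False], [False, False]]),
    "ears": np.array([[False, True], [True, False]]),
}
toy_scores = semantic_contributions(toy_relevance, toy_masks)
```

Normalizing by the total over the listed regions makes the scores sum to one per image, which is one simple way to compare regions across images of different sizes; dividing by region area or by the total relevance of the whole image would be equally plausible alternatives.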

As more college classrooms adopt online platforms to support teaching and learning activities, analyzing students' online behaviors is becoming increasingly important for instructors who need to monitor and manage student progress and performance. In this paper, we present CCVis, a visual analytics tool for analyzing course clickstream data and exploring students' online learning behaviors. Targeting a large introductory college course with over two thousand student enrollments, we aim to investigate student behavior patterns and discover possible relationships between students' clickstream behaviors and their course performance. We employ higher-order networks and structural identity classification to enable visual analytics of behavior patterns in the massive clickstream data. CCVis includes four coordinated views (the behavior pattern, behavior breakdown, clickstream comparative, and grade distribution views) for user interaction and exploration. We demonstrate the effectiveness of CCVis through case studies and an ad-hoc expert evaluation. Finally, we discuss the limitations of this work and possible extensions.
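To give a rough sense of what a higher-order network over clickstream data looks like, the sketch below builds a fixed second-order network: each node is a pair of consecutive click events, and each edge counts how often one pair is followed by the next. This is a deliberate simplification and an assumption of this example; the abstract does not say how CCVis constructs its network, and the event names are hypothetical.

```python
from collections import Counter


def second_order_edges(sessions):
    """Count transitions between consecutive pairs of click events.

    sessions: iterable of click sequences, one list of event names per
              student session.

    Returns a Counter mapping ((a, b), (b, c)) to the number of times the
    event triple a -> b -> c occurs, i.e. the weighted edges of a
    fixed second-order network.
    """
    edges = Counter()
    for clicks in sessions:
        # Slide a window of three events over the session.
        for a, b, c in zip(clicks, clicks[1:], clicks[2:]):
            edges[((a, b), (b, c))] += 1
    return edges


# Two hypothetical sessions with made-up event names.
example_sessions = [
    ["video", "quiz", "forum"],
    ["video", "quiz", "forum", "quiz"],
]
example_edges = second_order_edges(example_sessions)
```

Unlike a first-order network, where "quiz" would be a single node, this representation keeps the preceding event in the node identity, so it can distinguish what students do after a quiz depending on where they came from.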

Sleep plays an important role in the overall health and wellbeing of a child. The relationship between sleep and the daytime behaviours of children with neurodevelopmental disorders is poorly understood; different aspects of a child’s routine may interact with each other and contribute to sleep disorders. To diagnose, monitor and successfully treat many sleep-related medical conditions, it is imperative to analyse the many aspects of a child’s daytime and sleep behaviours. In this paper, we propose a visual analytics tool for studying the interactions among the different variables collected for a child with a neurodevelopmental disorder. The tool allows clinicians to explore how different aspects of a child’s behaviour and activities affect their sleep. It is developed as an extension of an existing tool, SWAPP, which allows caregivers and clinicians to log and monitor the child’s everyday data.