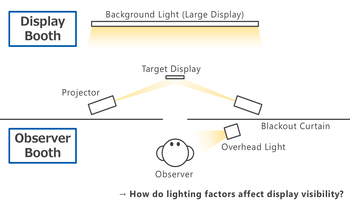

Mobile displays are used across a wide range of lighting conditions, and their readability and visual comfort vary greatly with both the surrounding light environment and the luminance of the display itself. To evaluate the effects of the light environment, we developed a two-booth experimental system capable of independently manipulating three factors: the illuminance on the display surface, the luminance behind the display, and the ambient illuminance in the user's space. Participants viewed black text on the white screen of a smartphone under various lighting conditions and rated its readability, discomfort glare, and screen comfort across multiple luminance levels. The results demonstrated that all three factors affect visual perception. In particular, the illuminance on the screen had the strongest effect on readability, while the factors also interacted, offsetting one another's effects. In addition, we confirmed that these effects depend on the observer's light-dark adaptation state. These findings indicate that the visual perception of mobile displays may be determined not by any individual factor but by their complex combination.

Camera sensors are crucial for autonomous driving because they are the only sensing modality that provides measured color information about the surrounding scene. Cameras are directly exposed to external weather conditions, and their visibility can be severely degraded by rain, ice, fog, soiling, and similar causes. Hence, it is crucial to detect and remove the visibility degradation caused by harsh weather conditions. In this paper, we focus mainly on soiling degradation. We provide methods for classifying the soiled parts of an image as well as methods for estimating the scene behind them. To encourage research in this area, we also created a new dataset with manually annotated soiling masks, known as the WoodScape dataset.