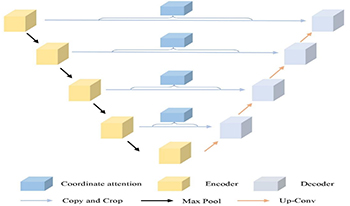

The maintenance of critical transportation infrastructure such as roads, tunnels, and bridges depends heavily on timely and accurate detection of structural damage. Among the various types of surface defects, concrete cracks are the most common and dangerous. However, traditional manual inspection is inefficient, labor-intensive, and poses safety risks. In this paper, the authors propose UC-Net, a novel semantic segmentation network designed specifically for concrete crack detection. UC-Net builds on the U-Net architecture and introduces a Coordinate Attention mechanism into the skip connections, enabling the model to better capture long, narrow, and spatially scattered crack features while suppressing irrelevant background noise. This design addresses two key challenges: the small spatial proportion of cracks in high-resolution images and the interference from complex textures and lighting conditions. To validate the approach, the authors conducted extensive experiments on a publicly available crack dataset and on real-world images. Compared with existing CNN- and attention-based networks, UC-Net achieves superior performance, with improvements in IoU (up to 91.78%) and accuracy (89.33%). The results confirm that UC-Net provides a lightweight yet accurate solution for fine-grained crack segmentation and can serve as a practical tool in real-world infrastructure monitoring scenarios.
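The two reported metrics, IoU and accuracy, can be illustrated for a binary crack mask with a minimal sketch. This is not the authors' evaluation code: the function name `iou_and_accuracy` and the plain nested-list mask format are assumptions made for illustration, and the abstract does not say whether the reported IoU is computed for the crack class alone or averaged over classes. The sketch computes the crack-class IoU.

```python
def iou_and_accuracy(pred, target):
    """Return (IoU of the crack class, pixel accuracy) for two binary masks.

    pred and target are equal-sized 2D lists of 0/1 labels, where 1 marks
    a crack pixel. Hypothetical helper, not taken from the paper.
    """
    tp = fp = fn = tn = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p and t:
                tp += 1          # crack predicted, crack present
            elif p and not t:
                fp += 1          # crack predicted, background present
            elif not p and t:
                fn += 1          # crack missed
            else:
                tn += 1          # background correctly predicted

    union = tp + fp + fn
    total = tp + fp + fn + tn
    # Convention assumed here: two empty masks count as a perfect match.
    iou = tp / union if union else 1.0
    accuracy = (tp + tn) / total if total else 1.0
    return iou, accuracy


if __name__ == "__main__":
    pred = [[1, 1, 0, 0],
            [0, 1, 0, 0]]
    target = [[1, 0, 0, 0],
              [0, 1, 1, 0]]
    print(iou_and_accuracy(pred, target))
```

Because cracks occupy only a small fraction of each image, pixel accuracy is dominated by background pixels, whereas IoU ignores true negatives and is therefore more sensitive to errors on the thin crack regions.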

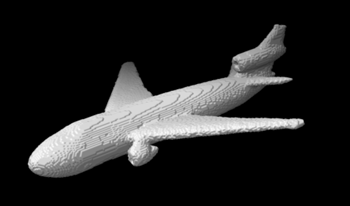

In this paper, we introduce silhouette tomography, a novel formulation of X-ray computed tomography that relies only on the geometry of the imaging system. We formulate silhouette tomography mathematically and provide a simple method for obtaining a particular solution to the problem, assuming that any solution exists. We then propose a supervised reconstruction approach that uses a deep neural network to solve the silhouette tomography problem. We present experimental results on a synthetic dataset that demonstrate the effectiveness of the proposed method.

The Bidirectional Texture Function (BTF) is one of the methods used to reproduce realistic images in Computer Graphics (CG). It is a texture-mapping technique that accounts for changing lighting and viewing directions and can reproduce a realistic appearance with simple, high-speed processing. However, the BTF method generally requires a large amount of texture data to be measured and stored in advance. In this paper, to address the problems of measurement time and texture data size in BTF reproduction, we propose a method that generates a BTF image dataset using deep learning. We recover texture images under various azimuth lighting conditions from a single texture image. To achieve this goal, we apply U-Net to BTF recovery. The restored and original texture images are compared using SSIM. The results confirm that the reproducibility of fabric and wood textures is high.
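As a rough illustration of the SSIM comparison between restored and original textures, the sketch below evaluates the SSIM formula once over a whole grayscale image. This is a simplification and an assumption: standard SSIM implementations average the same expression over local sliding windows, and the abstract does not specify which variant or settings were used. The function name `global_ssim`, the nested-list image format, and the 8-bit dynamic range are illustrative choices; the constants 0.01 and 0.03 are the commonly used defaults.

```python
def global_ssim(x, y, dynamic_range=255.0):
    """Single-window SSIM between two equal-sized grayscale images.

    x and y are 2D lists of pixel intensities. Hypothetical helper that
    treats the whole image as one window (a simplification of SSIM).
    """
    xs = [v for row in x for v in row]
    ys = [v for row in y for v in row]
    if not xs or len(xs) != len(ys):
        raise ValueError("images must be non-empty and the same size")

    n = len(xs)
    mu_x = sum(xs) / n
    mu_y = sum(ys) / n
    var_x = sum((v - mu_x) ** 2 for v in xs) / n
    var_y = sum((v - mu_y) ** 2 for v in ys) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(xs, ys)) / n

    # Stabilizing constants with the commonly used defaults.
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2

    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


if __name__ == "__main__":
    original = [[10, 200], [120, 60]]
    restored = [[12, 190], [118, 65]]
    print(global_ssim(original, restored))
```

A value of 1 indicates identical images, and lower values indicate a larger structural difference between the restored and original textures.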