Color-accurate digital imaging is critical for agricultural phenotyping, but the scientific literature predominantly assumes the availability of linear RAW sensor data. In some commercial workflows, only standard 8-bit sRGB JPEG images are available, which poses a significant challenge because of their non-linear encoding and the information lost to in-camera processing. This paper presents a robust color correction pipeline designed specifically for such non-linear images captured in uncontrolled outdoor environments. Our core contribution is a novel, per-patch adaptive weighting scheme for least-squares color correction. Instead of deriving a single global correction, our method generates a unique transformation for each patch on an in-frame ColorChecker. This is achieved through a leave-one-out approach in which, for each target patch, a model is trained on the remaining 23 patches. Crucially, this training is guided by a weighting matrix customized for the target patch. This adaptive process allows a simple linear model to outperform more complex polynomial models. Through systematic evaluation on an unseen test set, we demonstrate that this method reduces the mean color error ΔE00 from 11.23 to 3.79, providing a practical and effective solution for real-world agricultural imaging.
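The leave-one-out, per-patch weighted least-squares idea described above can be sketched as follows. This is a minimal illustration rather than the paper's implementation: the abstract does not specify how the weighting matrix is built, so the Gaussian weighting on color distance, the `sigma` parameter, the bias-free 3×3 model, and all function names here are assumptions made for the example.

```python
import numpy as np

def weighted_lstsq(src, dst, weights):
    """Solve min_M sum_i w_i * ||src_i @ M - dst_i||^2 for a 3x3 matrix M."""
    # Scaling each row by sqrt(w_i) turns the weighted problem into ordinary least squares.
    sw = np.sqrt(weights)[:, None]
    M, *_ = np.linalg.lstsq(src * sw, dst * sw, rcond=None)
    return M

def per_patch_transforms(measured, reference, sigma=0.25):
    """Fit one 3x3 correction per patch, each trained on all the other patches.

    measured, reference: (N, 3) arrays of patch colors (N = 24 for a ColorChecker).
    The weighting is a hypothetical choice: training patches whose measured
    color is close to the held-out target patch receive larger weights.
    """
    n = measured.shape[0]
    transforms = []
    for k in range(n):
        keep = np.arange(n) != k  # leave the target patch out of training
        dist2 = np.sum((measured[keep] - measured[k]) ** 2, axis=1)
        weights = np.exp(-dist2 / (2.0 * sigma ** 2))  # assumed Gaussian weighting
        transforms.append(weighted_lstsq(measured[keep], reference[keep], weights))
    return np.stack(transforms)  # shape (N, 3, 3)

def apply_transform(M, rgb):
    """Apply a 3x3 correction to a single RGB triplet."""
    return np.asarray(rgb) @ M
```

Each of the 24 resulting matrices is fitted without ever seeing its own target patch, so applying matrix `k` to patch `k` gives a held-out estimate of the correction error for that patch.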

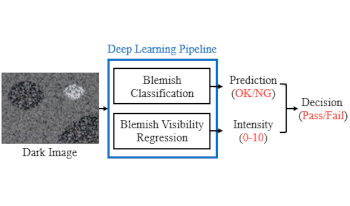

The quality of low-light pictures is becoming a competitive edge for mobile phones. To ensure this quality, it is increasingly necessary to screen out in advance the dark defects that cause abnormalities in dark photos, especially dark blemish. However, separating dark blemish patterns requires considerable manpower because the existing scoring method has low consistency. This paper proposes a novel deep learning-based screening method to solve this problem. The proposed pipeline uses two ResNet-D models of different depths, one for classification and one for visibility regression, and then derives a new score that combines the outputs of both models. In addition, we collect a large-scale image set from real manufacturing processes to train the models, and we configure the dataset with two label systems, one suited to each model. Experimental results show the performance of the deep learning models trained and validated on the presented datasets. Our classification model significantly improves screening performance, in terms of accuracy and F1-score, compared with the conventional handcrafted method. The visibility regression method also shows a high Pearson correlation coefficient with the ratings of 30 expert engineers, and the inference output of our regression model is consistent with those ratings.

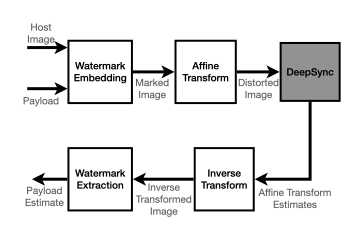

In digital watermarking of images and video, template-based techniques insert a signal template to aid recovery of the watermark after the geometric transforms (rotation, scale, translation, aspect-ratio change) common in imaging workflows. Detection approaches for such techniques often rely on known signal properties to estimate geometry before watermark extraction. In deep watermarking, i.e., watermarking that employs deep learning, the focus so far has been on extraction methods that are invariant to geometric transforms. This leaves a gap in precise geometry recovery and synchronization, which compromises watermark recovery, including recovery of the information bits, i.e., the payload. In this work, we propose DeepSync, a novel deep learning approach aimed at enhancing watermark synchronization for both template-based and deep watermarks.
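To make the notion of synchronization concrete, the sketch below composes the transforms named above (rotation, scale, translation, aspect-ratio change) into one affine matrix and shows that inverting an estimate of that matrix restores the original coordinates. It illustrates only the geometry, not DeepSync itself: the estimation step is assumed to have already produced the matrix, and the function names and composition order are hypothetical choices for this example.

```python
import numpy as np

def affine_matrix(rotation_deg, scale_x, scale_y, tx, ty):
    """Build a 3x3 homogeneous matrix: scale, then rotate, then translate.

    Unequal scale_x and scale_y model an aspect-ratio change.
    """
    theta = np.deg2rad(rotation_deg)
    c, s = np.cos(theta), np.sin(theta)
    rotate = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    scale = np.diag([scale_x, scale_y, 1.0])
    translate = np.array([[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]])
    return translate @ rotate @ scale

def transform_points(matrix, points):
    """Apply a 3x3 homogeneous transform to an (N, 2) array of points."""
    homogeneous = np.hstack([points, np.ones((len(points), 1))])
    return (homogeneous @ matrix.T)[:, :2]

def resynchronize(points, estimated_matrix):
    """Undo a distortion by applying the inverse of the estimated transform."""
    return transform_points(np.linalg.inv(estimated_matrix), points)

# Usage: distort a small grid of watermark sample positions, then restore it.
grid = np.array([[0.0, 0.0], [8.0, 0.0], [0.0, 8.0], [8.0, 8.0]])
distortion = affine_matrix(rotation_deg=12.0, scale_x=1.3, scale_y=0.9, tx=5.0, ty=-2.0)
distorted = transform_points(distortion, grid)
restored = resynchronize(distorted, distortion)
```

In practice the estimated matrix is only approximate, so the residual between `restored` and the original grid indicates how much synchronization error the extractor must tolerate.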