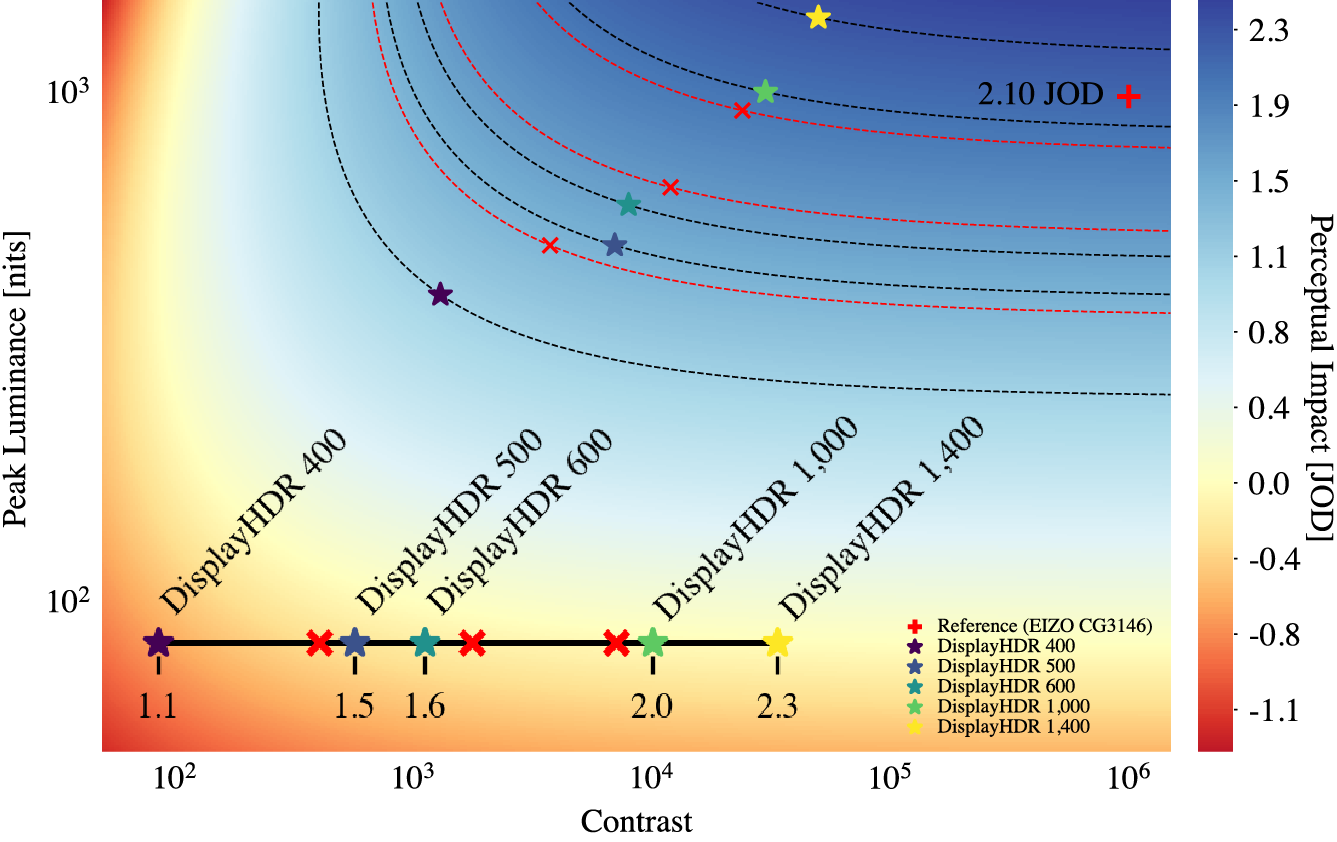

The performance of a high dynamic range (HDR) display can be largely characterized by its contrast and peak luminance. Prior work has studied this question for virtual reality (VR) using a haploscopic HDR setup, but it is not obvious whether those results transfer to a more traditional viewing setting, such as direct view. In this work, we conducted a study to measure user preference for different contrast and peak luminance parameters in this scenario, and developed a perceptual just-objectionable-difference (JOD) scale to quantify preference scores. To do so, we studied contrast and peak luminance conditions spanning several orders of magnitude, shown on a professional HDR display with a peak luminance of 1,000 nits and a contrast of 1,000,000:1. The data are used to develop a computational model that can drive display design and future standardization of the definition of HDR in terms of human preference.
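As a rough illustration of how the two display parameters relate, the short sketch below derives the black level and the dynamic range implied by a peak luminance and a contrast ratio. The function names are hypothetical, and the calculation assumes the contrast ratio is simply peak luminance divided by black level; it is not part of the study's model.

```python
import math


def black_level(peak_nits: float, contrast_ratio: float) -> float:
    """Black level in nits, assuming contrast = peak luminance / black level."""
    return peak_nits / contrast_ratio


def dynamic_range_log10(contrast_ratio: float) -> float:
    """Dynamic range expressed in log10 units (orders of magnitude)."""
    return math.log10(contrast_ratio)


def dynamic_range_stops(contrast_ratio: float) -> float:
    """Dynamic range expressed in photographic stops (doublings)."""
    return math.log2(contrast_ratio)


# Display parameters quoted in the abstract.
peak = 1000.0            # nits
contrast = 1_000_000.0   # 1,000,000:1

print(f"black level: {black_level(peak, contrast):.4f} nits")
print(f"range: {dynamic_range_log10(contrast):.1f} log units, "
      f"{dynamic_range_stops(contrast):.1f} stops")
```

Under that assumption, the display described above would have a black level of about 0.001 nits and a range of about 6 log units.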

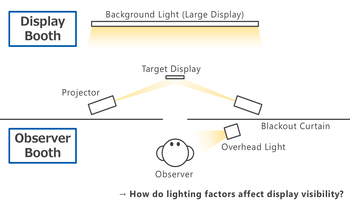

Mobile displays are used across a wide range of lighting conditions. Readability and visual comfort vary greatly depending on the surrounding light environment as well as the luminance of the display. To evaluate the effects of the light environment, we developed a two-booth experimental system capable of independently manipulating three factors: the illuminance on the display surface, the luminance behind the display, and the ambient illuminance in the user's space. Participants viewed black text on a white smartphone screen under various light conditions and rated its readability, discomfort glare, and screen comfort across multiple luminance levels. The results demonstrated that all three factors affect visual perception. In particular, the illuminance on the screen had the strongest effect on readability, while the factors interacted, offsetting each other's effects. In addition, we confirmed that these effects depend on the observer’s light-dark adaptation state. These findings indicate that visual perception of mobile displays is determined not by individual factors, but by their complex combination.

The human visual system is capable of adapting across a very wide dynamic range of luminance levels; values up to 14 log units have been reported. However, when the bright and dark areas of a scene are presented simultaneously to an observer, the bright stimulus produces significant glare in the visual system and prevents full adaptation to the dark areas. This impairs the ability to discriminate details in the dark areas and limits the simultaneous dynamic range. As a result, the simultaneous dynamic range is much smaller than the successive dynamic range measured across various levels of steady-state adaptation. Previous indirect derivations of simultaneous dynamic range have suggested values between 2 and 3.5 log units. Most recently, Kunkel and Reinhard reported an estimate of 3.7 log units, but it was not measured directly. In this study, simultaneous dynamic range was measured directly through a psychophysical experiment. The simultaneous dynamic range was found to depend on the luminance of the bright stimulus, with a maximum of approximately 3.3 log units. Based on the experimental data, a descriptive log-linear model and a nonlinear model were proposed to predict the simultaneous dynamic range as a function of stimulus size, with parameters that depend on the bright-stimulus luminance level. Furthermore, the effect of the spatial frequency of the adapting pattern on the simultaneous dynamic range was explored. A log parabola function, representing a traditional Contrast Sensitivity Function (CSF), fitted the simultaneous dynamic range data well.
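To make the log-unit figures more concrete, the sketch below converts log units into luminance ratios and evaluates a generic parabola in log-frequency coordinates. The function names and all parabola parameters are hypothetical placeholders chosen for illustration; they are not the fitted values or the exact parameterization used in the study.

```python
import math


def log_units_to_ratio(log_units: float) -> float:
    """Convert a dynamic range in log10 units to a luminance ratio."""
    return 10.0 ** log_units


def log_parabola(freq_cpd: float, peak_value: float,
                 peak_freq_cpd: float, curvature: float) -> float:
    """Generic log parabola: a parabola in log10(frequency) that peaks
    at peak_freq_cpd with height peak_value (all parameters hypothetical)."""
    x = math.log10(freq_cpd) - math.log10(peak_freq_cpd)
    return peak_value - curvature * x * x


# Log-unit values quoted in the abstract, expressed as ratios.
for label, value in [("maximum measured", 3.3),
                     ("Kunkel and Reinhard estimate", 3.7)]:
    print(f"{label}: {value} log units = {log_units_to_ratio(value):,.0f}:1")

# Placeholder parameters only, to show the band-pass shape of the curve.
for f in (0.5, 1.0, 2.0, 4.0, 8.0, 16.0):
    print(f, round(log_parabola(f, peak_value=3.0,
                                peak_freq_cpd=4.0, curvature=1.0), 3))
```

In ratio terms, 3.3 log units corresponds to roughly 2,000:1 and 3.7 log units to roughly 5,000:1, compared with 14 log units (a ratio of 10^14) for adaptation over time.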

LED flicker artefacts, caused by unsynchronized irradiation from a pulse-width modulated LED light source captured by a digital camera sensor with discrete exposure times, place new requirements on both visual and machine vision systems. While the latter need to capture relevant information from the light source in only a limited number of frames (e.g., a flickering traffic light), human vision is sensitive to illumination modulation in viewing applications such as digital mirror replacement systems. To quantify flicker in viewing applications with KPIs related to human vision, we present a novel approach and the results of a psychophysical study on the effect of LED flicker artefacts. Diverse real-world driving sequences were captured with both mirror replacement cameras and a front viewing camera, and potential flicker light sources were masked manually. Synthetic flicker with adjustable parameters is then overlaid on these areas, and the flickering sequences are presented to participants in a driving environment. Feedback from the participants on flicker perception for different viewing areas, sizes, and frequencies is collected and evaluated.
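The mechanism behind the artefact can be sketched with a small simulation: a pulse-width modulated LED is sampled by a camera whose exposure windows are not synchronized with the LED period. The model below is a simplified assumption (ideal rectangular PWM starting at time zero, ideal global-shutter exposure), and the function names and parameter values are hypothetical rather than taken from the study.

```python
def led_on_time(t_start: float, t_end: float,
                pwm_freq_hz: float, duty_cycle: float) -> float:
    """Time (s) the LED is lit within [t_start, t_end].

    Assumes the LED is on during the first duty_cycle fraction of every
    PWM period, with the first period starting at t = 0.
    """
    period = 1.0 / pwm_freq_hz
    on_len = duty_cycle * period

    def cumulative_on(t: float) -> float:
        # Lit time accumulated from 0 to t: full periods plus the partial one.
        full_periods, remainder = divmod(t, period)
        return full_periods * on_len + min(remainder, on_len)

    return cumulative_on(t_end) - cumulative_on(t_start)


def captured_fraction_per_frame(n_frames: int, frame_rate_hz: float,
                                exposure_s: float, pwm_freq_hz: float,
                                duty_cycle: float) -> list[float]:
    """Fraction of each exposure window during which the LED was lit."""
    fractions = []
    for i in range(n_frames):
        start = i / frame_rate_hz
        lit = led_on_time(start, start + exposure_s, pwm_freq_hz, duty_cycle)
        fractions.append(lit / exposure_s)
    return fractions


# Hypothetical setup: 100 Hz PWM at 25% duty cycle, 30 fps camera.
short = captured_fraction_per_frame(6, 30.0, 0.001, 100.0, 0.25)
long_ = captured_fraction_per_frame(6, 30.0, 0.010, 100.0, 0.25)
print([round(abs(f), 3) for f in short])  # 1 ms exposure
print([round(abs(f), 3) for f in long_])  # 10 ms exposure (one PWM period)
```

With the 1 ms exposure, some frames see the LED fully lit and others see it fully dark, which is the flicker artefact. When the exposure spans a whole PWM period, every frame captures the same fraction (the duty cycle) and the flicker disappears in this idealized model.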