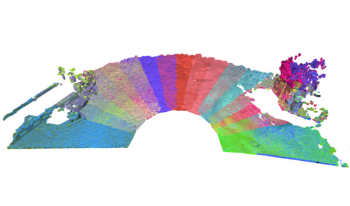

Accurate registration of subsea Light Detection and Ranging (LiDAR) point clouds is critical for offshore metrology, where millimeter-level errors can significantly affect operational cost and risk. This study evaluates automated registration methods for static scan positions acquired by Kraken Robotics systems. Two approaches were implemented in Open3D: a hierarchical tree-based Iterative Closest Point (ICP) method and a pose-graph multiway registration framework. The methods were tested on multiple real subsea datasets containing 19–33 high-density scans per scene, and on synthetic datasets generated with the Digital Imaging and Remote Sensing Image Generation (DIRSIG) model to enable ground-truth evaluation. Results show that multiway registration provides better global consistency, lower adjacent-scan Root Mean Square Error (RMSE), and shorter processing time than tree-based ICP. Ground-truth analysis demonstrated sub-sampling-level accuracy, corresponding to an expected alignment accuracy of 1–4 mm for the real datasets. Global coarse registration provided no measurable benefit for well-initialized static surveys. Overall, the analysis shows that multiway registration enables accurate, efficient, and fully automated subsea LiDAR alignment, reducing manual effort and improving metrology reliability.
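The pairwise alignment step that underlies both the tree-based ICP method and the edges of a multiway pose graph can be illustrated with a minimal point-to-point ICP sketch. This is a generic textbook formulation in NumPy (brute-force nearest neighbours plus the SVD/Kabsch rigid fit), not the study's Open3D pipeline; the function names are hypothetical, and the returned closest-point RMSE is only a stand-in for the adjacent-scan RMSE metric. In Open3D itself, the corresponding pairwise call is `open3d.pipelines.registration.registration_icp`.

```python
import numpy as np


def best_rigid_transform(src, dst):
    """Kabsch/SVD: R, t minimizing sum ||R @ src_i + t - dst_i||^2 for paired points."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)          # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def nearest_neighbors(src, dst):
    """Brute-force closest point in dst for every src point (O(N*M), fine for a sketch)."""
    d2 = ((src[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
    idx = d2.argmin(axis=1)
    return idx, np.sqrt(d2[np.arange(len(src)), idx])


def icp_point_to_point(src, dst, max_iter=50, tol=1e-12):
    """Align src to dst; return the accumulated 4x4 transform and final closest-point RMSE."""
    T = np.eye(4)
    cur = src.copy()
    prev_rmse = np.inf
    for _ in range(max_iter):
        idx, dist = nearest_neighbors(cur, dst)
        rmse = float(np.sqrt(np.mean(dist ** 2)))
        if prev_rmse - rmse < tol:               # stop once RMSE stops improving
            break
        prev_rmse = rmse
        R, t = best_rigid_transform(cur, dst[idx])
        cur = cur @ R.T + t                      # apply this iteration's update
        step = np.eye(4)
        step[:3, :3], step[:3, 3] = R, t
        T = step @ T                             # compose with previous updates
    _, dist = nearest_neighbors(cur, dst)
    return T, float(np.sqrt(np.mean(dist ** 2)))


# Usage: synthetic "scan" misaligned by a small, known rigid motion.
rng = np.random.default_rng(0)
dst = rng.uniform(-0.5, 0.5, size=(150, 3))
theta = np.deg2rad(1.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([0.005, -0.003, 0.004])
src = (dst - t_true) @ R_true   # rows are R_true^T (dst_i - t_true)
T, rmse = icp_point_to_point(src, dst)
```

Like any ICP variant, this only converges to the correct pose from a reasonable initial guess, which is consistent with the abstract's observation that well-initialized static surveys gain nothing from an extra global coarse registration stage.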

Recently, volumetric-video-based communications have gained considerable attention, especially with the emergence of devices that can capture scenes with 3D spatial information and display mixed-reality environments. Nevertheless, capturing the world in 3D is not an easy task: capture systems are usually composed of arrays of image sensors, sometimes paired with depth sensors. Unfortunately, these arrays are difficult for non-specialists to assemble and calibrate, which makes their use in volumetric video applications challenging. Additionally, the cost of these systems is still high, which limits their adoption in mainstream communication applications. This work proposes a system that reconstructs the head of a human speaker from single-view frames captured with a single RGB-D camera (e.g., Microsoft's Kinect 2). The proposed system generates volumetric video frames with a minimal number of occluded and missing areas. To achieve good quality, the system prioritizes the data corresponding to the participants' faces, thereby preserving important information about the speakers' facial expressions. Our ultimate goal is to design an inexpensive system that can be used in volumetric video telepresence applications and even in volumetric video talk-show broadcasting.
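Reconstructing geometry from a single RGB-D camera typically begins by back-projecting each depth frame into 3D camera coordinates. The following is a minimal sketch under the standard pinhole camera model. The intrinsics (`fx`, `fy`, `cx`, `cy`) are hypothetical placeholders rather than calibrated Kinect 2 values, and the optional `roi_mask` (for example, a face region from some detector) is only an illustrative stand-in for the face-prioritization step, not the authors' method.

```python
import numpy as np


def depth_to_points(depth_m, fx, fy, cx, cy, roi_mask=None):
    """Back-project a depth image (metres) into an (N, 3) camera-frame point cloud.

    Pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy, Z = depth.
    Pixels with zero depth (no measurement) are discarded; if roi_mask is given
    (hypothetical, e.g. a face region), only pixels inside it are kept.
    """
    h, w = depth_m.shape
    v, u = np.mgrid[0:h, 0:w]        # v = row index, u = column index
    z = depth_m
    valid = z > 0
    if roi_mask is not None:
        valid &= roi_mask.astype(bool)
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return np.stack([x[valid], y[valid], z[valid]], axis=1)


# Usage with a tiny synthetic depth frame and made-up intrinsics.
depth = np.full((4, 4), 1.5)
depth[0, 0] = 0.0                    # simulate a missing depth sample
pts = depth_to_points(depth, fx=365.0, fy=365.0, cx=2.0, cy=2.0)

face = np.zeros((4, 4), dtype=bool)
face[1:3, 1:3] = True                # hypothetical face region
face_pts = depth_to_points(depth, 365.0, 365.0, 2.0, 2.0, roi_mask=face)
```

Dropping zero-depth pixels keeps holes in the sensor data from appearing as spurious points at the camera origin, which matters when the goal is to minimize visibly missing or occluded areas.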