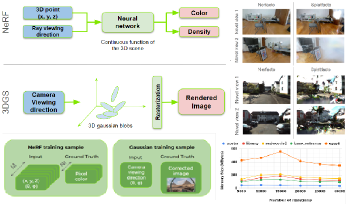

This paper presents a comparative study of Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) models in the context of automotive and edge applications. Both models show potential for novel view synthesis but face challenges with real-time rendering, memory limitations, and adaptation to changing scenes. We assess their performance across key metrics, including rendering rate, training time, memory usage, image quality for novel viewpoints, and compatibility with fisheye data. While neither model fully meets all automotive requirements, this study identifies the gaps that must be addressed for each model to achieve broader applicability in these environments.

Neural Radiance Fields (NeRF) have attracted particular attention for their ability to generate virtual views from a sparse set of input images. However, their applicability is limited by the large number of images required for training. This work introduces a data augmentation method for training NeRF that uses external depth information. The approach uses MPEG's reference view synthesizer (RVS) to generate new virtual images at different positions, which are added to the NeRF training image pool. Results show a substantial improvement in output quality when the generated views are included in training, compared with training without them.
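The abstract does not describe how RVS synthesizes views internally. As a rough illustration of the general idea behind depth-based view synthesis, the minimal sketch below forward-warps one image into a nearby virtual camera using its depth map. It is not RVS and not the authors' pipeline: the function name `warp_to_virtual_view`, the pinhole intrinsics `K` shared by both cameras, and the relative pose `R`, `t` are all assumptions made for this example.

```python
import numpy as np

def warp_to_virtual_view(image, depth, K, R, t):
    """Forward-warp `image` (H, W, C) into a virtual camera (illustrative only).

    Assumes a pinhole camera with intrinsics K (3x3) for both views, a
    per-pixel depth map `depth` (H, W) along the source camera's z-axis, and
    a relative pose such that X_virtual = R @ X_source + t.
    Returns the warped image and a boolean mask of pixels that were filled.
    """
    h, w = depth.shape
    c = image.shape[-1]

    # Homogeneous pixel coordinates of the source view, shape (3, H*W).
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    pix = np.stack([u.ravel(), v.ravel(), np.ones(h * w)], axis=0)

    # Back-project to 3D, move into the virtual camera frame, and project.
    pts_src = (np.linalg.inv(K) @ pix) * depth.ravel()
    pts_virt = R @ pts_src + np.asarray(t, dtype=float).reshape(3, 1)
    z = pts_virt[2]
    proj = K @ pts_virt

    # Keep points in front of the camera that land inside the image.
    valid = z > 1e-6
    x = np.full(h * w, -1, dtype=np.int64)
    y = np.full(h * w, -1, dtype=np.int64)
    x[valid] = np.rint(proj[0, valid] / z[valid]).astype(np.int64)
    y[valid] = np.rint(proj[1, valid] / z[valid]).astype(np.int64)
    valid &= (x >= 0) & (x < w) & (y >= 0) & (y < h)

    # Z-buffer: for each target pixel keep only the nearest source point.
    src = np.flatnonzero(valid)
    target = y[src] * w + x[src]
    order = np.lexsort((z[src], target))  # by target pixel, then by depth
    _, first = np.unique(target[order], return_index=True)
    winners = order[first]

    out = np.zeros((h * w, c), dtype=image.dtype)
    mask = np.zeros(h * w, dtype=bool)
    out[target[winners]] = image.reshape(-1, c)[src[winners]]
    mask[target[winners]] = True
    return out.reshape(h, w, c), mask.reshape(h, w)
```

Pixels where the returned mask is `False` are disocclusion holes. A practical synthesizer would fill them, for example by blending several source views or by inpainting, before such images are used as additional training data.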