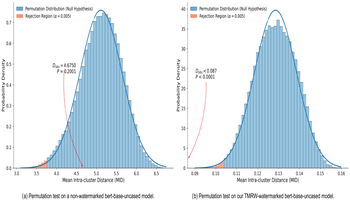

Protecting the intellectual property of natural language encoders faces a critical challenge: hidden watermarks are easily erased when models are fine-tuned for downstream applications, a process known as “task migration”. To address this problem, we introduce a Task Migration Resistant Watermarking (TMRW) framework that strengthens watermark robustness against task migration. The proposed method uses a dual-objective fine-tuning strategy. During watermark embedding, a specifically designed watermark loss function compels the encoder to map a set of trigger inputs into a compact cluster in the embedding space. To counteract the performance degradation this process may introduce, an augmented contrastive loss is optimized simultaneously to preserve the encoder’s general semantic representation ability. The strategy is further enhanced by a novel trigger corpus crafting method that ensures the watermark’s stealthiness. Experimental results show that the proposed method embeds a robust watermark that significantly outperforms existing techniques in resisting erasure under task migration. This work addresses the durability of encoder watermarks against task migration and provides a novel and practical framework for intellectual property protection in natural language processing systems.
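As a rough illustration of the dual-objective idea, the sketch below combines a cluster-compactness term on trigger embeddings with a contrastive term on ordinary sentence pairs. The abstract does not give the exact loss definitions, so everything here is an assumption: the compactness term is taken to be the mean squared distance to the trigger centroid, the contrastive term is a plain InfoNCE loss standing in for the "augmented" variant, and the names `watermark_loss`, `info_nce_loss`, `dual_objective`, `temperature`, and `lam` are hypothetical.

```python
import numpy as np

def watermark_loss(trigger_emb):
    """Assumed compactness term: mean squared distance of each trigger
    embedding (one per row) to the centroid of all trigger embeddings."""
    centroid = trigger_emb.mean(axis=0, keepdims=True)
    return float(((trigger_emb - centroid) ** 2).sum(axis=1).mean())

def info_nce_loss(anchors, positives, temperature=0.05):
    """Plain InfoNCE over cosine similarities; row i of `positives` is the
    positive for row i of `anchors`, all other rows act as negatives."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = a @ p.T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.diag(log_prob).mean())

def dual_objective(trigger_emb, anchors, positives, lam=1.0):
    """Hypothetical combined objective: semantic term plus weighted
    watermark term. The weighting scheme `lam` is an assumption."""
    return info_nce_loss(anchors, positives) + lam * watermark_loss(trigger_emb)
```

In an actual fine-tuning loop these quantities would be computed on encoder outputs inside an automatic-differentiation framework; the NumPy version only shows how the two terms pull in different directions, with one collapsing trigger embeddings together and the other keeping ordinary sentences separable.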

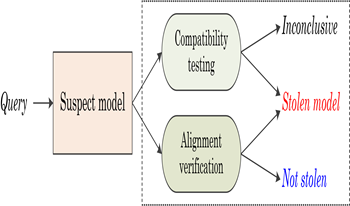

Recent advances confirm that large language models (LLMs) can achieve state-of-the-art performance across various tasks. However, because training LLMs from scratch is resource-intensive, protecting their intellectual property against infringement is both urgent and crucial. This motivates us to propose a novel black-box fingerprinting technique for LLMs. We first demonstrate that the outputs of an LLM span a unique vector space associated with that model. We then formulate fingerprint authentication as the task of evaluating the similarity between the space of the victim model and the space of the suspect model. To tackle this problem, we introduce two solutions: the first determines whether the suspect model’s outputs lie within the victim’s subspace, enabling fast infringement detection; the second reconstructs a joint subspace to detect models modified via parameter-efficient fine-tuning (PEFT). Experiments indicate that the proposed method achieves superior performance in fingerprint verification and robustness against PEFT attacks. This work reveals inherent characteristics of LLMs and provides a promising solution for protecting them that is efficient, general, and practical.
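The first solution, checking whether suspect outputs lie within the victim's subspace, can be pictured with a small linear-algebra sketch. The abstract does not say how the output space is estimated or which similarity measure is used, so this is only one plausible reading: an orthonormal basis is taken from the SVD of collected victim output vectors, and membership is scored by the relative projection residual. The function names, the `rank` parameter, and the synthetic low-rank data are all assumptions for illustration.

```python
import numpy as np

def subspace_basis(outputs, rank):
    """Orthonormal basis (dim x rank) for the span of the output vectors,
    given as rows of `outputs`, taken from the top right singular vectors."""
    _, _, vt = np.linalg.svd(outputs, full_matrices=False)
    return vt[:rank].T

def residual_ratio(basis, suspect_outputs):
    """Fraction of each suspect vector's norm that falls outside the
    subspace spanned by `basis` (0 means fully inside the subspace)."""
    projected = suspect_outputs @ basis @ basis.T
    residual = np.linalg.norm(suspect_outputs - projected, axis=1)
    return residual / np.linalg.norm(suspect_outputs, axis=1)

# Toy data (assumed): outputs of the "victim" live in an 8-dimensional
# subspace of a 64-dimensional output space.
rng = np.random.default_rng(0)
mixing = rng.standard_normal((8, 64))
victim_outputs = rng.standard_normal((100, 8)) @ mixing
derived_outputs = rng.standard_normal((20, 8)) @ mixing   # same subspace
unrelated_outputs = rng.standard_normal((20, 64))         # generic vectors

basis = subspace_basis(victim_outputs, rank=8)
derived_score = residual_ratio(basis, derived_outputs)
unrelated_score = residual_ratio(basis, unrelated_outputs)
```

Under these assumptions, a residual near zero suggests the suspect shares the victim's output space, while a large residual suggests an unrelated model. A decision threshold would have to be calibrated on real model outputs, and the PEFT-robust variant based on a joint subspace is not covered by this sketch.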