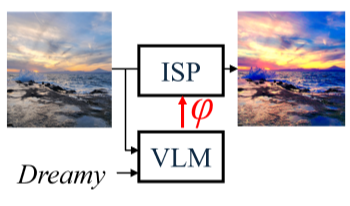

We propose a method for tuning the parameters of a color adjustment Image Signal Processor (ISP) algorithmic “block” using language prompts. This enables the user to impart a particular visual style to the ISP-processed image simply by describing it in a text prompt. To do this, we first implement the ISP block in a differentiable manner. Then, we define an objective function using an off-the-shelf, pretrained vision-language model (VLM) such that the objective is minimized when the ISP-processed image best matches the input language prompt. Finally, we optimize the ISP parameters using gradient descent. Experimental results demonstrate tuning of ISP parameters with different language prompts and compare the performance of different pretrained VLMs and optimization strategies.
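The three steps above (a differentiable block, a prompt-driven objective, gradient descent) can be illustrated with a deliberately small sketch. It is not the method's actual implementation: the per-channel gain block, the mean-RGB "image embedding", and the three-dimensional "prompt embedding" are hypothetical stand-ins for a real color adjustment block and a pretrained VLM, chosen so that the gradient can be written by hand.

```python
import math

def apply_gains(pixels, gains):
    """Toy color-adjustment block (assumption): one gain per RGB channel."""
    return [[p[c] * gains[c] for c in range(3)] for p in pixels]

def embed_image(pixels):
    """Stand-in for a VLM image encoder (assumption): the mean RGB value."""
    n = len(pixels)
    return [sum(p[c] for p in pixels) / n for c in range(3)]

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def loss_and_grad(pixels, gains, text_emb):
    """Return 1 - cosine(image_emb, text_emb) and its gradient w.r.t. gains."""
    e = embed_image(apply_gains(pixels, gains))  # embedding of processed image
    m = embed_image(pixels)                      # d e[c] / d gains[c]
    ne, nt = norm(e), norm(text_emb)
    cos = sum(a * b for a, b in zip(e, text_emb)) / (ne * nt)
    # Derivative of the cosine similarity with respect to each component of e.
    dcos_de = [text_emb[c] / (ne * nt) - cos * e[c] / (ne * ne) for c in range(3)]
    # Chain rule through the (linear) gain block; the loss is 1 - cos.
    grad = [-dcos_de[c] * m[c] for c in range(3)]
    return 1.0 - cos, grad

def tune_gains(pixels, text_emb, lr=0.5, steps=300):
    """Plain gradient descent on the gains, starting from the identity."""
    gains = [1.0, 1.0, 1.0]
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(pixels, gains, text_emb)
        history.append(loss)
        gains = [g - lr * d for g, d in zip(gains, grad)]
    return gains, history

# Hypothetical inputs: a near-gray image and a made-up embedding for "warm".
pixels = [[0.5, 0.5, 0.5], [0.4, 0.5, 0.6], [0.6, 0.5, 0.4]]
warm_prompt_emb = [0.9, 0.6, 0.3]
gains, history = tune_gains(pixels, warm_prompt_emb)
```

In this toy setting the loss falls toward zero and the red gain ends up larger than the blue gain, which is the expected direction for a "warm" target. With a real VLM the encoders are deep networks, so the gradient would come from automatic differentiation rather than a hand-derived formula.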

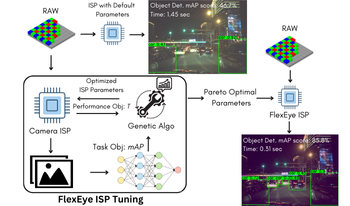

As AI becomes more prevalent, edge devices face challenges due to limited resources and the high demands of deep learning (DL) applications. In such cases, quality scalability can offer significant benefits by adjusting the computational load based on available resources. Traditional Image Signal Processor (ISP) tuning methods prioritize maximizing intelligence performance, such as classification accuracy, while neglecting critical system constraints like latency and power dissipation. To address this gap, we introduce FlexEye, an application-specific, quality-scalable ISP tuning framework that leverages ISP parameters as a control knob for quality of service (QoS), enabling a trade-off between quality and performance. Experimental results demonstrate up to a 6% improvement in object detection accuracy and a 22.5% reduction in ISP latency compared to the state of the art. In addition, we evaluate an instance segmentation task, where a 1.2% accuracy improvement is attained with a 73% latency reduction.