Face swapping, or deepfake generation, remains a challenging task that requires balancing identity preservation, attribute consistency, and photorealism. We propose a novel training-free, three-stage face swapping framework that improves realism by explicitly aligning illumination and skin appearance prior to diffusion-based synthesis. Our approach refines photometric consistency and skin tone while preserving facial structure, and it integrates seamlessly with an off-the-shelf diffusion face swapping model. Experiments on the CelebAMask-HQ dataset demonstrate significant improvements over the baseline in both visual realism and attribute preservation, with an FID score of 7.16. The proposed method provides an efficient and robust solution for realistic face swapping under varying illumination and appearance conditions without additional model training.
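To make the idea of aligning skin appearance before synthesis concrete, the following is a minimal sketch of one common training-free technique: per-channel mean and standard deviation matching (a Reinhard-style statistics transfer) restricted to a skin mask. The abstract does not specify how the alignment stages are implemented, so this is an illustrative assumption and not the proposed method. The function name `match_channel_statistics` and its mask arguments are hypothetical.

```python
import numpy as np


def match_channel_statistics(source, target, source_mask=None, target_mask=None, eps=1e-6):
    """Shift and scale each channel of `source` so that its masked mean and
    standard deviation match those of `target`.

    Hypothetical helper for illustration only: the abstract does not state
    which alignment procedure is used.

    source, target: float or uint8 arrays of shape (H, W, C), values in [0, 255].
    source_mask, target_mask: optional boolean arrays of shape (H, W) selecting
    skin pixels; if omitted, all pixels are used.
    """
    src = source.astype(np.float64)
    tgt = target.astype(np.float64)
    if source_mask is None:
        source_mask = np.ones(src.shape[:2], dtype=bool)
    if target_mask is None:
        target_mask = np.ones(tgt.shape[:2], dtype=bool)

    out = src.copy()
    for c in range(src.shape[2]):
        s_vals = src[..., c][source_mask]
        t_vals = tgt[..., c][target_mask]
        s_mean, s_std = s_vals.mean(), s_vals.std()
        t_mean, t_std = t_vals.mean(), t_vals.std()

        # Normalise the source channel, then rescale to the target statistics.
        aligned = (src[..., c] - s_mean) * (t_std / (s_std + eps)) + t_mean

        # Only pixels inside the source mask are modified; the rest keep
        # their original values.
        channel = out[..., c]  # view into `out`
        channel[source_mask] = aligned[source_mask]

    return np.clip(out, 0.0, 255.0)
```

In such a sketch, the aligned source face would then be passed to the diffusion face swapping model in place of the original. Working in a perceptual colour space such as CIELAB rather than RGB is a common variation, but it is likewise an assumption here.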

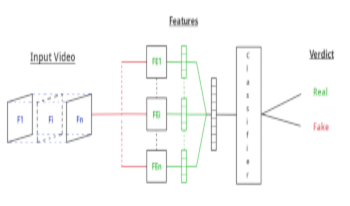

The rapid rise of deep learning and, more specifically, the development of generative adversarial networks has revolutionised the creation of deepfakes. Forgeries are becoming more and more realistic, and consequently harder to detect. Verifying whether video content is authentic is an increasingly sensitive issue. Furthermore, free access to forgery technologies is increasing dramatically, which is very worrying. Numerous methods have been proposed to detect these deepfakes, and it is difficult to know which of them remain accurate given recent advances. Therefore, this paper presents an approach for face swapping detection in videos based on residual signal analysis.